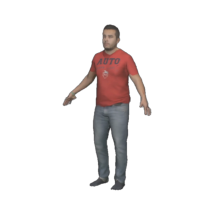

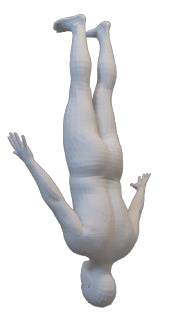

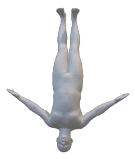

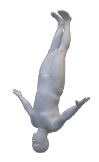

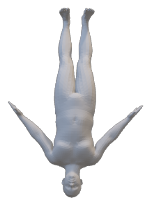

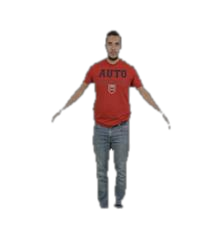

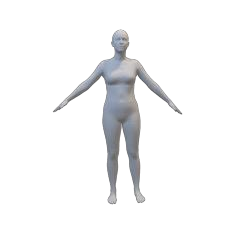

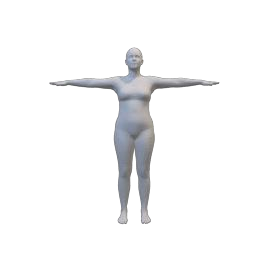

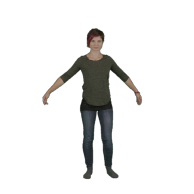

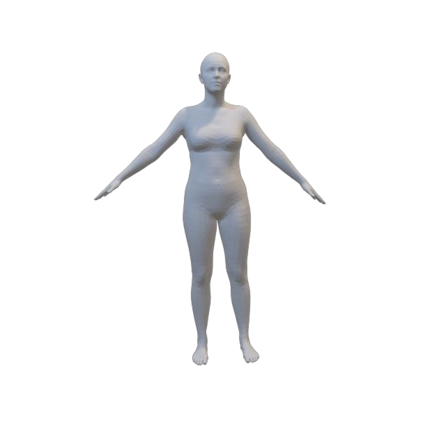

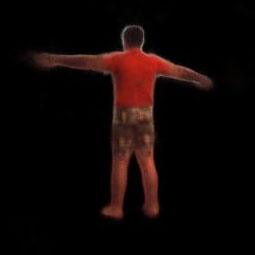

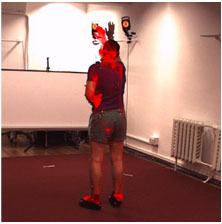

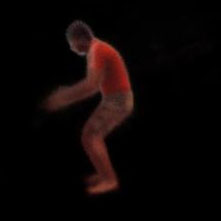

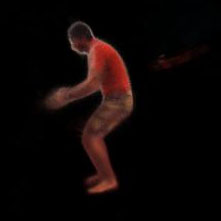

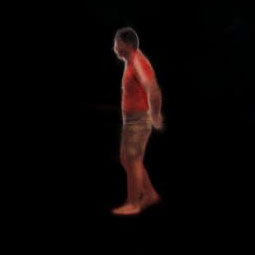

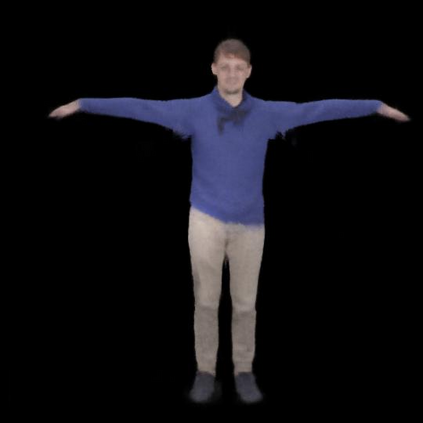

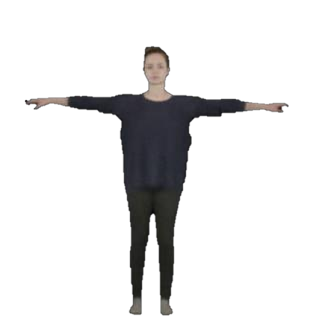

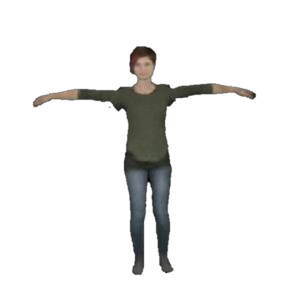

We present a novel paradigm of building an animatable 3D human representation from a monocular video input, such that it can be rendered in any unseen poses and views. Our method is based on a dynamic Neural Radiance Field (NeRF) rigged by a mesh-based parametric 3D human model serving as a geometry proxy. Previous methods usually rely on multi-view videos or accurate 3D geometry information as additional inputs; besides, most methods suffer from degraded quality when generalized to unseen poses. We identify that the key to generalization is a good input embedding for querying dynamic NeRF: A good input embedding should define an injective mapping in the full volumetric space, guided by surface mesh deformation under pose variation. Based on this observation, we propose to embed the input query with its relationship to local surface regions spanned by a set of geodesic nearest neighbors on mesh vertices. By including both position and relative distance information, our embedding defines a distance-preserved deformation mapping and generalizes well to unseen poses. To reduce the dependency on additional inputs, we first initialize per-frame 3D meshes using off-the-shelf tools and then propose a pipeline to jointly optimize NeRF and refine the initial mesh. Extensive experiments show our method can synthesize plausible human rendering results under unseen poses and views.

翻译:我们展示了一种新型模式,用单向视频输入来构建一个可想象的 3D 人类代表,这种模式可以用单向视频输入来构建一个可想象的 3D 人类代表,这样它就可以在任何看不见的外观和视图中实现。 我们的方法基于一个动态神经光谱辐射场(NeRF),由基于网状的参数3D 人类模型作为几何替代。 以往的方法通常依赖多视图视频或准确的 3D 几何信息作为附加投入; 此外,大多数方法在被普及到看不见的外观时质量都会退化。 我们的嵌入定义了一般化的关键在于为查询动态NERF提供一种良好的内嵌入: 良好的嵌入应定义一个在全体体体积空间的预测性绘图, 由地貌变异形的表面成形所引导。 基于这一观察,我们提议将输入的查询与其与本地表面区域的关系嵌入一个由一组地理特征相近邻的图像作为补充。 通过包含位置和相对距离的信息,我们嵌入定义了远程的畸形变图图图图, 并且将普通化到视觉显示。 为了减少对更多投入的依赖,我们最初的正版的版本的模型将展示,然后用直观的模型展示了人类的模型展示。