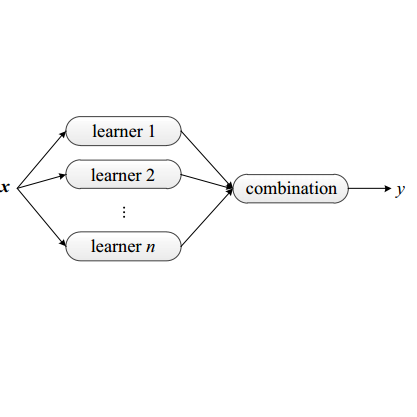

Deep ensemble learning has been shown to improve accuracy by training multiple neural networks and fusing their outputs. Ensemble learning has also been used to defend against membership inference attacks, which undermine privacy. In this paper, we empirically demonstrate a trade-off between these two goals in deep ensembles: accuracy and privacy (measured by resistance to membership inference attacks). Using a wide range of datasets and model architectures, we show that when ensembling improves accuracy, membership inference attacks also become more effective. To better understand this trade-off, we study the impact of factors such as prediction confidence and agreement between the models that constitute the ensemble. Finally, we evaluate defenses against membership inference attacks based on regularization and differential privacy. We show that while these defenses can reduce the effectiveness of the membership inference attack, they simultaneously degrade ensemble accuracy. We illustrate a similar trade-off in more advanced, state-of-the-art ensembling techniques, such as snapshot ensembles and diversified ensemble networks. The source code is available in the supplementary materials.
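The abstract does not specify the fusion rule or the attack, so the following is only a minimal sketch under stated assumptions. It assumes the ensemble fuses its members by averaging their softmax outputs, and that the attacker uses a simple confidence-threshold attack on the true label (a common baseline membership inference attack, not necessarily the one evaluated in the paper). The function names, the `agreement` measure, and the 0.9 threshold are all hypothetical choices made for illustration.

```python
import math
from typing import List, Sequence, Tuple

Logits = Sequence[float]


def softmax(logits: Logits) -> List[float]:
    """Numerically stable softmax for a single example."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def argmax(values: Sequence[float]) -> int:
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


def ensemble_predict(member_logits: Sequence[Logits]) -> List[float]:
    """Assumed fusion rule: average the members' softmax outputs."""
    probs = [softmax(z) for z in member_logits]
    n_classes = len(probs[0])
    return [sum(p[c] for p in probs) / len(probs) for c in range(n_classes)]


def agreement(member_logits: Sequence[Logits]) -> float:
    """Hypothetical agreement measure: fraction of members whose top-1
    label matches the ensemble's top-1 label."""
    fused = argmax(ensemble_predict(member_logits))
    votes = [argmax(z) for z in member_logits]
    return sum(v == fused for v in votes) / len(votes)


def confidence_attack(ensemble_probs: Sequence[float], true_label: int,
                      threshold: float = 0.9) -> bool:
    """Guess 'member' when the ensemble is confident in the true label.

    The threshold is a hypothetical hyperparameter; a real attacker
    would have to calibrate it, e.g. on shadow data.
    """
    return ensemble_probs[true_label] >= threshold


def attack_accuracy(samples: Sequence[Tuple[Sequence[Logits], int, bool]],
                    threshold: float = 0.9) -> float:
    """Fraction of (member_logits, label, is_member) samples the
    confidence attack classifies correctly."""
    correct = 0
    for member_logits, label, is_member in samples:
        guess = confidence_attack(ensemble_predict(member_logits), label, threshold)
        correct += int(guess == is_member)
    return correct / len(samples)
```

As a usage example, three members that are all confident in class 0 (logits `[4, 0, 0]`, `[5, 0, 0]`, `[4.5, 0, 0]`) give a fused confidence of about 0.98 and full agreement, so the attack guesses "member". Three members that disagree (`[2, 1, 0]`, `[0, 2, 1]`, `[1.5, 0, 1]`) give a fused confidence of about 0.43 on class 0 and an agreement of 2/3, so the attack guesses "non-member". Under these assumptions, this illustrates how confidence and agreement between members can widen the gap a confidence-based attack exploits.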
