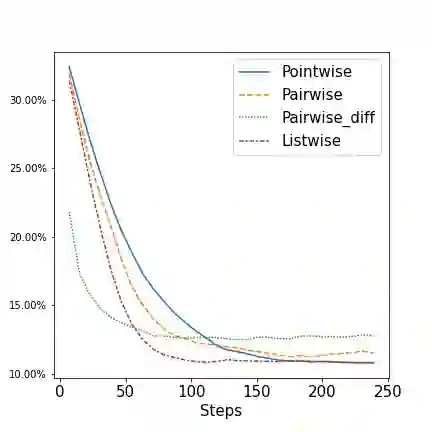

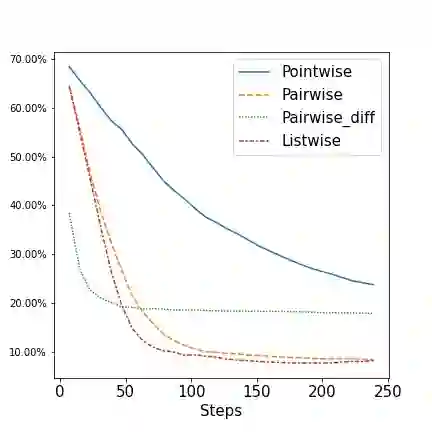

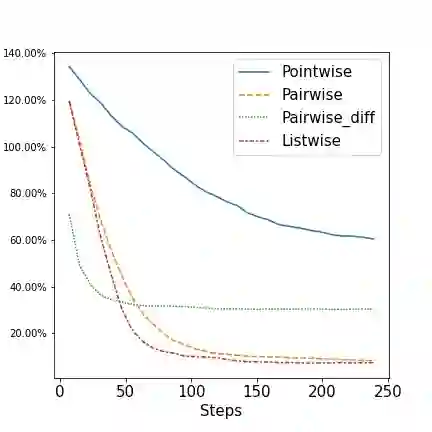

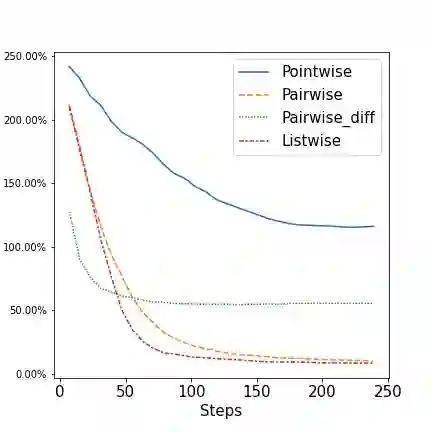

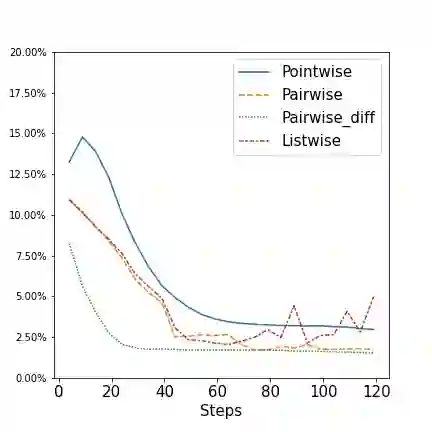

In recent years, the decision-focused learning framework, also known as predict-and-optimize, has received increasing attention. In this setting, the predictions of a machine learning (ML) model are used as estimated cost coefficients in the objective function of a discrete combinatorial optimization problem for decision making. Decision-focused learning proposes to train the ML models, often neural networks, by directly optimizing the quality of the decisions made by the optimization solver. Building on recent work that proposed a noise contrastive estimation loss over a subset of the solution space, we observe that decision-focused learning can more generally be seen as a learning-to-rank problem, where the goal is to learn an objective function that ranks the feasible points correctly. This observation is independent of the optimization method used and of the form of the objective function. We develop pointwise, pairwise and listwise ranking loss functions, which can be differentiated in closed form given a subset of solutions. We empirically compare our generic methods with existing decision-focused learning approaches and obtain competitive results. Furthermore, controlling the subset of solutions allows the runtime to be controlled considerably, with limited effect on regret.
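To make the ranking view concrete, the following is a minimal NumPy sketch of what pointwise, pairwise and listwise losses over a fixed subset of solutions could look like for a minimization problem with a linear objective. It is an illustration under stated assumptions, not necessarily the formulation used in the paper: the function names, the hinge margin, the softmax temperature `tau`, the choice to compare only the true-best solution against the others, and the toy data are all hypothetical.

```python
import numpy as np

def objective_values(solutions, costs):
    # Linear objective f(v, c) = c^T v for every solution v in the pool (assumed form).
    return solutions @ costs

def pointwise_loss(solutions, c_pred, c_true):
    # Regress the predicted objective value of each pooled solution onto its true value.
    diff = objective_values(solutions, c_pred) - objective_values(solutions, c_true)
    return float(np.mean(diff ** 2))

def pairwise_loss(solutions, c_pred, c_true, margin=0.1):
    # Hinge loss: the true-best solution should score lower (better) than
    # every other pooled solution under the predicted costs, by at least `margin`.
    true_vals = objective_values(solutions, c_true)
    pred_vals = objective_values(solutions, c_pred)
    best = int(np.argmin(true_vals))
    others = np.delete(np.arange(len(solutions)), best)
    hinge = np.maximum(0.0, margin + pred_vals[best] - pred_vals[others])
    return float(np.mean(hinge))

def listwise_loss(solutions, c_pred, c_true, tau=1.0):
    # Cross-entropy between softmax distributions over the pool induced by
    # the true and the predicted objective values (lower objective = higher probability).
    def log_softmax(x):
        x = x - np.max(x)
        return x - np.log(np.sum(np.exp(x)))
    log_p_pred = log_softmax(-objective_values(solutions, c_pred) / tau)
    p_true = np.exp(log_softmax(-objective_values(solutions, c_true) / tau))
    return float(-np.sum(p_true * log_p_pred))

def pool_regret(solutions, c_pred, c_true):
    # Regret restricted to the pool: true cost of the solution chosen under
    # the predicted costs, minus the best true cost available in the pool.
    true_vals = objective_values(solutions, c_true)
    chosen = int(np.argmin(objective_values(solutions, c_pred)))
    return float(true_vals[chosen] - true_vals.min())

# Toy pool of three feasible 0/1 solutions and two candidate cost predictions.
solutions = np.array([[1, 0, 1], [0, 1, 1], [1, 1, 0]], dtype=float)
c_true = np.array([1.0, 2.0, 3.0])
c_good = np.array([1.5, 2.5, 3.5])  # wrong values, but same ranking of solutions
c_bad = np.array([3.0, 2.0, 1.0])   # reverses the ranking

for name, c in [("good", c_good), ("bad", c_bad)]:
    print(name,
          pointwise_loss(solutions, c, c_true),
          pairwise_loss(solutions, c, c_true),
          listwise_loss(solutions, c, c_true),
          pool_regret(solutions, c, c_true))
```

In this toy example, `c_good` has inaccurate cost values but preserves the ordering of the pooled solutions, so its pairwise loss and regret are zero even though its pointwise loss is not. This is the sense in which ranking the feasible points correctly matters more than predicting the costs accurately. All three losses are simple functions of `solutions @ c_pred`, so their gradients with respect to the predicted costs are available in closed form once the pool is fixed.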
