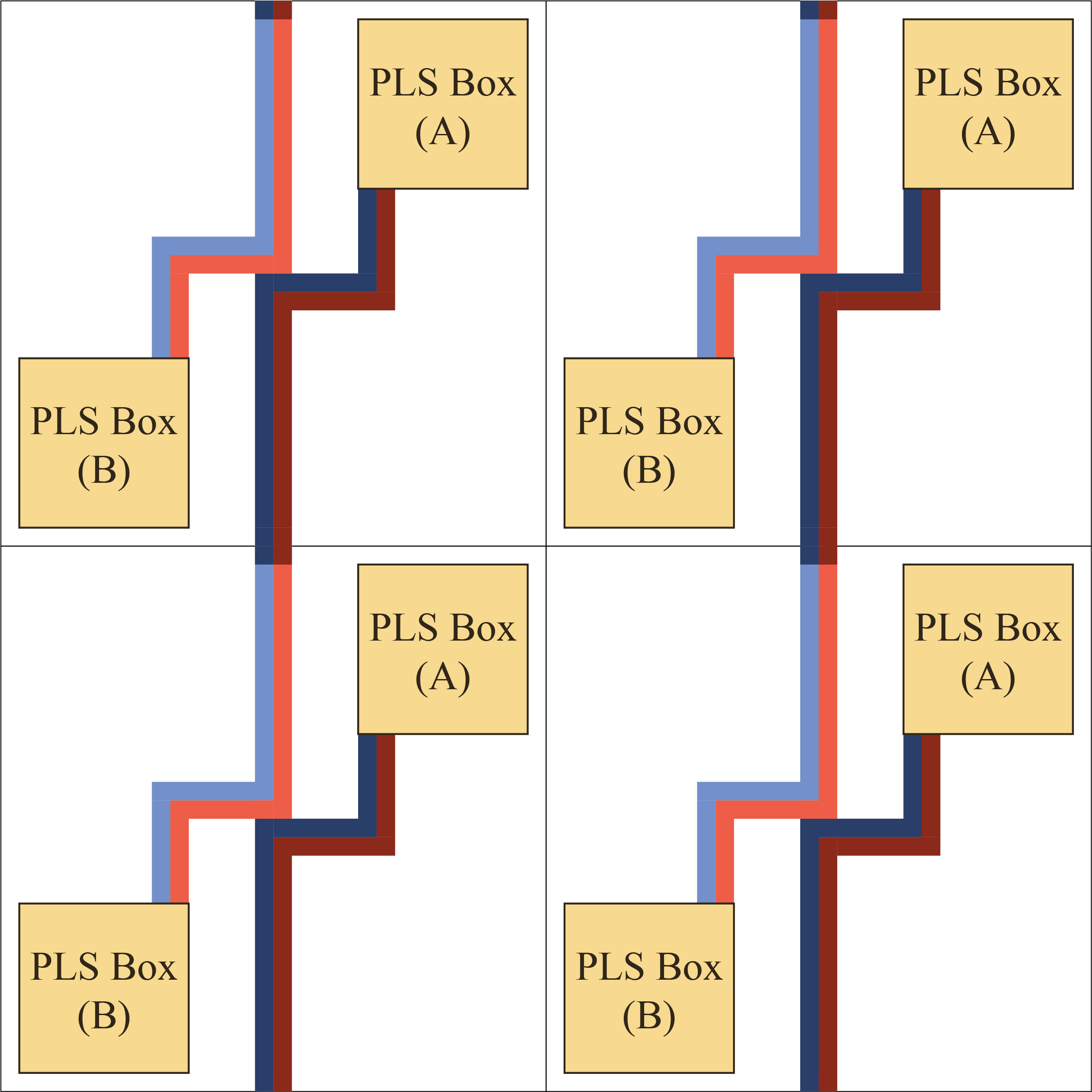

Finding approximate stationary points, i.e., points where the gradient is approximately zero, of non-convex but smooth objective functions $f$ over unrestricted $d$-dimensional domains is one of the most fundamental problems in classical non-convex optimization. Nevertheless, the computational and query complexity of this problem are still not well understood when the dimension $d$ of the problem is independent of the approximation error. In this paper, we show the following computational and query complexity results: 1. The problem of finding approximate stationary points over unrestricted domains is PLS-complete. 2. For $d = 2$, we provide a zero-order algorithm for finding $\varepsilon$-approximate stationary points that requires at most $O(1/\varepsilon)$ value queries to the objective function. 3. We show that any algorithm needs at least $\Omega(1/\varepsilon)$ queries to the objective function and/or its gradient to find $\varepsilon$-approximate stationary points when $d=2$. Combined with the above, this characterizes the query complexity of this problem to be $\Theta(1/\varepsilon)$. 4. For $d = 2$, we provide a zero-order algorithm for finding $\varepsilon$-KKT points in constrained optimization problems that requires at most $O(1/\sqrt{\varepsilon})$ value queries to the objective function. This closes the gap between the works of Bubeck and Mikulincer [2020] and Vavasis [1993] and characterizes the query complexity of this problem to be $\Theta(1/\sqrt{\varepsilon})$. 5. Combining our results with the recent result of Fearnley et al. [2022], we show that finding approximate KKT points in constrained optimization is reducible to finding approximate stationary points in unconstrained optimization but the converse is impossible.

翻译:暂无翻译