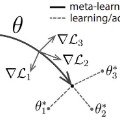

Natural language understanding (NLU) is challenging in finance because annotated data is scarce and the domain uses specialized language. As a result, researchers have proposed using pre-trained language models and multi-task learning to learn robust representations. However, aggressive fine-tuning often causes over-fitting, and multi-task learning may favor tasks with significantly larger amounts of data. To address these problems, in this paper we investigate the model-agnostic meta-learning (MAML) algorithm for low-resource financial NLU tasks. Our contributions are twofold: (1) we explore the performance of MAML across multiple types of tasks: GLUE datasets, SNLI, Sci-Tail and Financial PhraseBank; (2) we study the performance of MAML across multiple tasks of a single type: a real-world stock price prediction problem using Twitter text data. According to the experimental results, our models achieve state-of-the-art performance, which demonstrates that our method adapts quickly and well to low-resource situations.
