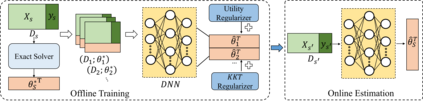

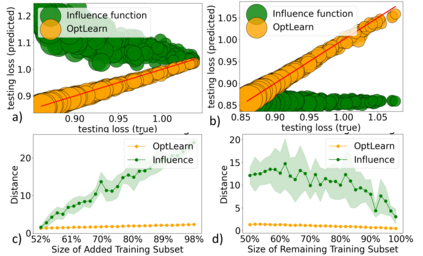

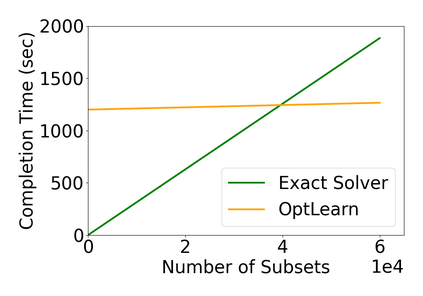

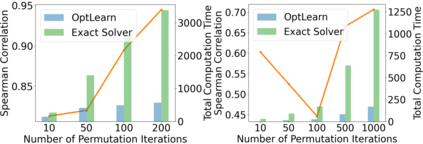

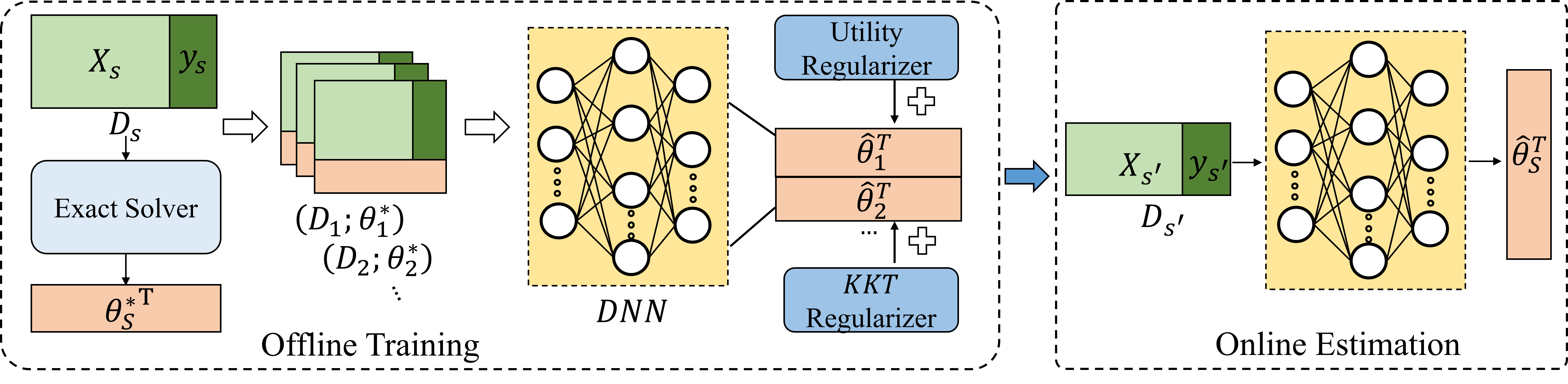

Machine learning (ML) models need to be retrained frequently on changing datasets in a wide variety of application scenarios, including data valuation and uncertainty quantification. To retrain models efficiently, linear approximation methods such as influence functions have been proposed to estimate the impact of data changes on model parameters. However, these methods become inaccurate for large dataset changes. In this work, we focus on convex learning problems and propose a general framework that uses neural networks to learn to estimate the optimized model parameters for different training sets. We propose to constrain the predicted model parameters to satisfy optimality conditions and to maintain utility through regularization techniques, which significantly improves generalization. Moreover, we rigorously characterize the expressive power of neural networks to approximate the optimizer of convex problems. Empirical results demonstrate the advantage of the proposed method over state-of-the-art methods in accurate and efficient model parameter estimation.
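The limitation of linear approximation noted above can be illustrated with a small sketch. It is not the method proposed in this work; it assumes ridge regression as the convex problem, synthetic Gaussian data, and the standard first-order influence estimate for deleting training points. The names `fit_ridge`, `influence_estimate`, and `objective_grad` are hypothetical helpers introduced only for this illustration. The gradient norm printed at the end is one natural reading of an optimality condition for a smooth unconstrained convex objective (the gradient vanishes at the minimizer); whether the paper's regularizer takes exactly this form is an assumption.

```python
import numpy as np

def fit_ridge(X, y, lam):
    """Exact minimizer of 0.5*||X @ theta - y||^2 + 0.5*lam*||theta||^2."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

def influence_estimate(X, y, lam, theta_full, removed):
    """First-order (influence-style) estimate after deleting rows `removed`.

    Takes one Newton-like step from theta_full using the Hessian of the
    FULL objective, which is what makes the estimate only approximate.
    """
    d = X.shape[1]
    H = X.T @ X + lam * np.eye(d)            # Hessian of the full objective
    Xr, yr = X[removed], y[removed]
    g = Xr.T @ (Xr @ theta_full - yr)        # summed gradients of removed losses
    return theta_full + np.linalg.solve(H, g)

def objective_grad(X, y, lam, theta):
    """Gradient of the ridge objective; zero exactly at the minimizer."""
    return X.T @ (X @ theta - y) + lam * theta

# Synthetic data (an assumption for illustration only).
rng = np.random.default_rng(0)
n, d, lam = 200, 5, 1.0
X = rng.normal(size=(n, d))
y = X @ rng.normal(size=d) + 0.1 * rng.normal(size=n)
theta_full = fit_ridge(X, y, lam)

errors, residuals = {}, {}
for k in (1, 100):                           # small vs. large dataset change
    removed, keep = np.arange(k), np.arange(k, n)
    theta_exact = fit_ridge(X[keep], y[keep], lam)       # full retraining
    theta_inf = influence_estimate(X, y, lam, theta_full, removed)
    errors[k] = np.linalg.norm(theta_inf - theta_exact)
    # Optimality residual of the estimate on the reduced training set.
    residuals[k] = np.linalg.norm(
        objective_grad(X[keep], y[keep], lam, theta_inf))
    print(f"removed={k:3d}  param error={errors[k]:.2e}  "
          f"grad norm={residuals[k]:.2e}")
```

In this setting the estimate stays close to the retrained parameters when a single point is removed and drifts much further when half of the training set is removed, with a correspondingly larger gradient norm on the reduced objective. A learned estimator that is penalized for such a residual would be pushed toward parameters that satisfy the optimality condition for each training set.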
