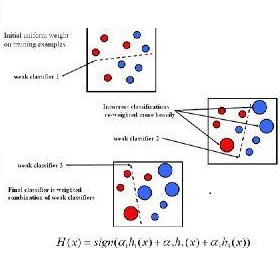

The principle of boosting in supervised learning is to combine multiple weak classifiers into a stronger classifier. AdaBoost is widely regarded as a perfect example of this approach. We have previously shown that AdaBoost is not truly an optimization algorithm. This paper shows that AdaBoost is an algorithm in name only, since the resulting combination of weak classifiers can be calculated explicitly using a truth table. The study considers a two-class problem, is illustrated by the particular case of three binary classifiers, and compares its results with those from the AdaBoost implementation in the Python library scikit-learn.
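To make the truth-table idea concrete, here is a minimal sketch, not the paper's own construction. It assumes the standard discrete AdaBoost form of the final classifier, the sign of a weighted vote over weak classifiers with outputs in {-1, +1}. The function name `vote_truth_table` and the weight values are hypothetical, chosen only for illustration.

```python
from itertools import product


def vote_truth_table(alphas):
    """Map every tuple of weak-classifier outputs (-1 or +1) to the
    sign of the weighted vote sum(alpha_i * h_i)."""
    table = {}
    for outputs in product((-1, 1), repeat=len(alphas)):
        score = sum(a * h for a, h in zip(alphas, outputs))
        # Ties are sent to -1 here; this is an arbitrary illustrative choice.
        table[outputs] = 1 if score > 0 else -1
    return table


# Hypothetical weights: no single classifier outweighs the other two,
# so the combined classifier reduces to a majority vote.
balanced = vote_truth_table([0.8, 0.6, 0.5])

# Hypothetical weights: the first classifier outweighs the other two combined,
# so the combined classifier simply copies the first classifier.
dominated = vote_truth_table([1.5, 0.6, 0.5])

for outputs in sorted(balanced):
    print(outputs, balanced[outputs], dominated[outputs])
```

With three binary classifiers there are only eight input combinations, so the combined decision is fully described by an eight-row table once the weights are fixed.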
