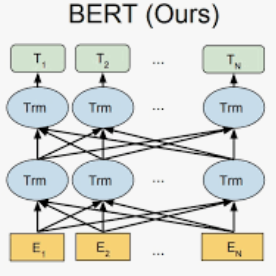

Sentence ordering aims to arrange the sentences of a given text in the correct order. Recent work frames it as a ranking problem and applies deep neural networks to it. In this work, we propose a new method, named BERT4SO, that fine-tunes BERT for sentence ordering. We concatenate all sentences into a single input and compute their representations using multiple special tokens and carefully designed segment (interval) embeddings. Tokens from different sentences can therefore attend to each other, which greatly enhances cross-sentence interaction. We also propose a margin-based listwise ranking loss, built on ListMLE, to facilitate optimization. Experimental results on five benchmark datasets demonstrate the effectiveness of the proposed method.
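For readers unfamiliar with ListMLE, the following is a minimal sketch of the standard (plain) ListMLE loss on which the proposed loss is based. It is not the authors' implementation: the abstract does not give the form of the margin term, so none is included here. The function names `logsumexp` and `listmle_loss` are illustrative, and the input is assumed to be one score per sentence, already arranged in the gold order.

```python
import math


def logsumexp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def listmle_loss(scores_in_gold_order):
    """Plain ListMLE: negative log-likelihood of the gold permutation
    under the Plackett-Luce model.

    scores_in_gold_order[i] is the model score of the sentence that
    belongs at position i in the correct ordering (an assumed layout).
    """
    loss = 0.0
    for i, score in enumerate(scores_in_gold_order):
        # Probability of picking the gold sentence at step i among the
        # sentences that have not been placed yet (positions i..end).
        loss += logsumexp(scores_in_gold_order[i:]) - score
    return loss


if __name__ == "__main__":
    good = listmle_loss([3.0, 2.0, 1.0])  # scores agree with the gold order
    bad = listmle_loss([1.0, 2.0, 3.0])   # scores reverse the gold order
    print(f"agreeing scores: {good:.4f}, reversed scores: {bad:.4f}")
```

The loss is smaller when higher scores are assigned to earlier sentences, so minimizing it pushes the model toward scores whose descending sort recovers the gold order.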
