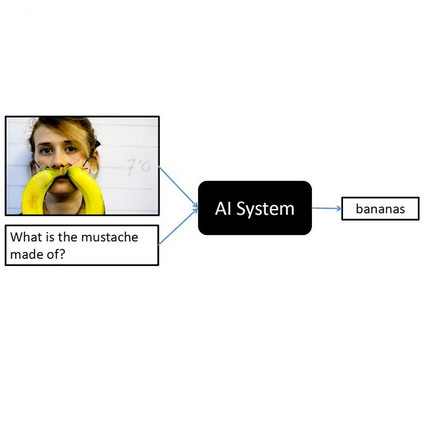

Scene graph generation from images is a task of great interest to applications such as robotics, because graphs are the main way to represent knowledge about the world and regulate human-robot interactions in tasks such as Visual Question Answering (VQA). Unfortunately, the corresponding area of machine learning is still in its infancy, and the solutions currently offered do not specialize well to concrete usage scenarios. Specifically, they do not take existing "expert" knowledge about the domain into account, even though that knowledge may be necessary to provide the level of reliability that these scenarios demand. In this paper, we propose a first approximation of a framework called Ontology-Guided Scene Graph Generation (OG-SGG), which can improve the performance of an existing machine-learning-based scene graph generator using prior knowledge supplied in the form of an ontology (specifically, the axioms defined within it), and we present results evaluated on a specific scenario grounded in telepresence robotics. These results show quantitative and qualitative improvements in the generated scene graphs.
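
To make the idea of ontology axioms acting on a scene graph more concrete, the sketch below shows one way such prior knowledge could be applied: predicted triples are discarded when they violate a domain or range axiom. This is only an illustration under stated assumptions and not necessarily how OG-SGG works. The class hierarchy, the predicates, the axioms, and the names `Triple`, `is_a`, and `filter_triples` are all hypothetical.

```python
from typing import NamedTuple, Optional


class Triple(NamedTuple):
    """One predicted scene graph edge (hypothetical structure)."""
    subject: str    # class label of the subject object
    predicate: str  # relation label
    obj: str        # class label of the object
    score: float    # confidence from the underlying generator


# Hypothetical toy ontology: each class maps to its parent class.
SUBCLASS_OF = {
    "person": "agent",
    "robot": "agent",
    "cup": "artifact",
    "table": "furniture",
    "furniture": "artifact",
}

# Hypothetical domain/range axioms: predicate -> (domain class, range class).
DOMAIN_RANGE = {
    "holding": ("agent", "artifact"),
    "sitting_on": ("agent", "furniture"),
}


def is_a(cls: Optional[str], ancestor: str) -> bool:
    """Return True if `cls` equals `ancestor` or is one of its descendants."""
    while cls is not None:
        if cls == ancestor:
            return True
        cls = SUBCLASS_OF.get(cls)
    return False


def filter_triples(triples: list[Triple]) -> list[Triple]:
    """Keep only triples consistent with the domain/range axioms.

    Predicates without an axiom are kept unchanged, since the ontology
    says nothing about them.
    """
    kept = []
    for t in triples:
        axiom = DOMAIN_RANGE.get(t.predicate)
        if axiom is None:
            kept.append(t)
            continue
        domain, range_ = axiom
        if is_a(t.subject, domain) and is_a(t.obj, range_):
            kept.append(t)
    return kept


# Example predictions, as an existing generator might emit them.
predictions = [
    Triple("person", "holding", "cup", 0.9),
    Triple("cup", "holding", "person", 0.6),     # subject is not an agent
    Triple("person", "sitting_on", "cup", 0.4),  # object is not furniture
    Triple("person", "near", "table", 0.3),      # no axiom for "near"
]
consistent = filter_triples(predictions)
```

In this toy setting the filter runs purely as a post-processing step, so the underlying generator does not need to be retrained; whether that matches the design of the proposed framework is an assumption of the sketch.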
