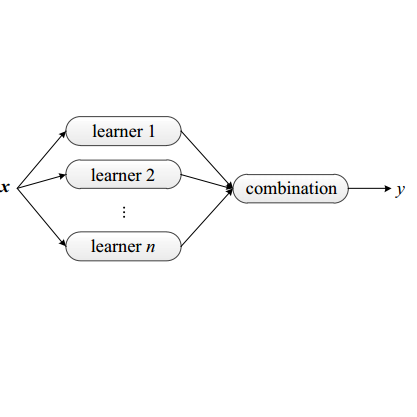

Distributed ensemble learning (DEL) involves training multiple models at distributed learners, and then combining their predictions to improve performance. Existing related studies focus on DEL algorithm design and optimization but ignore the important issue of incentives, without which self-interested learners may be unwilling to participate in DEL. We aim to fill this gap by presenting a first study on the incentive mechanism design for DEL. Our proposed mechanism specifies both the amount of training data and reward for learners with heterogeneous computation and communication costs. One design challenge is to have an accurate understanding regarding how learners' diversity (in terms of training data) affects the ensemble accuracy. To this end, we decompose the ensemble accuracy into a diversity-precision tradeoff to guide the mechanism design. Another challenge is that the mechanism design involves solving a mixed-integer program with a large search space. To this end, we propose an alternating algorithm that iteratively updates each learner's training data size and reward. We prove that under mild conditions, the algorithm converges. Numerical results using MNIST dataset show an interesting result: our proposed mechanism may prefer a lower level of learner diversity to achieve a higher ensemble accuracy.

翻译:暂无翻译