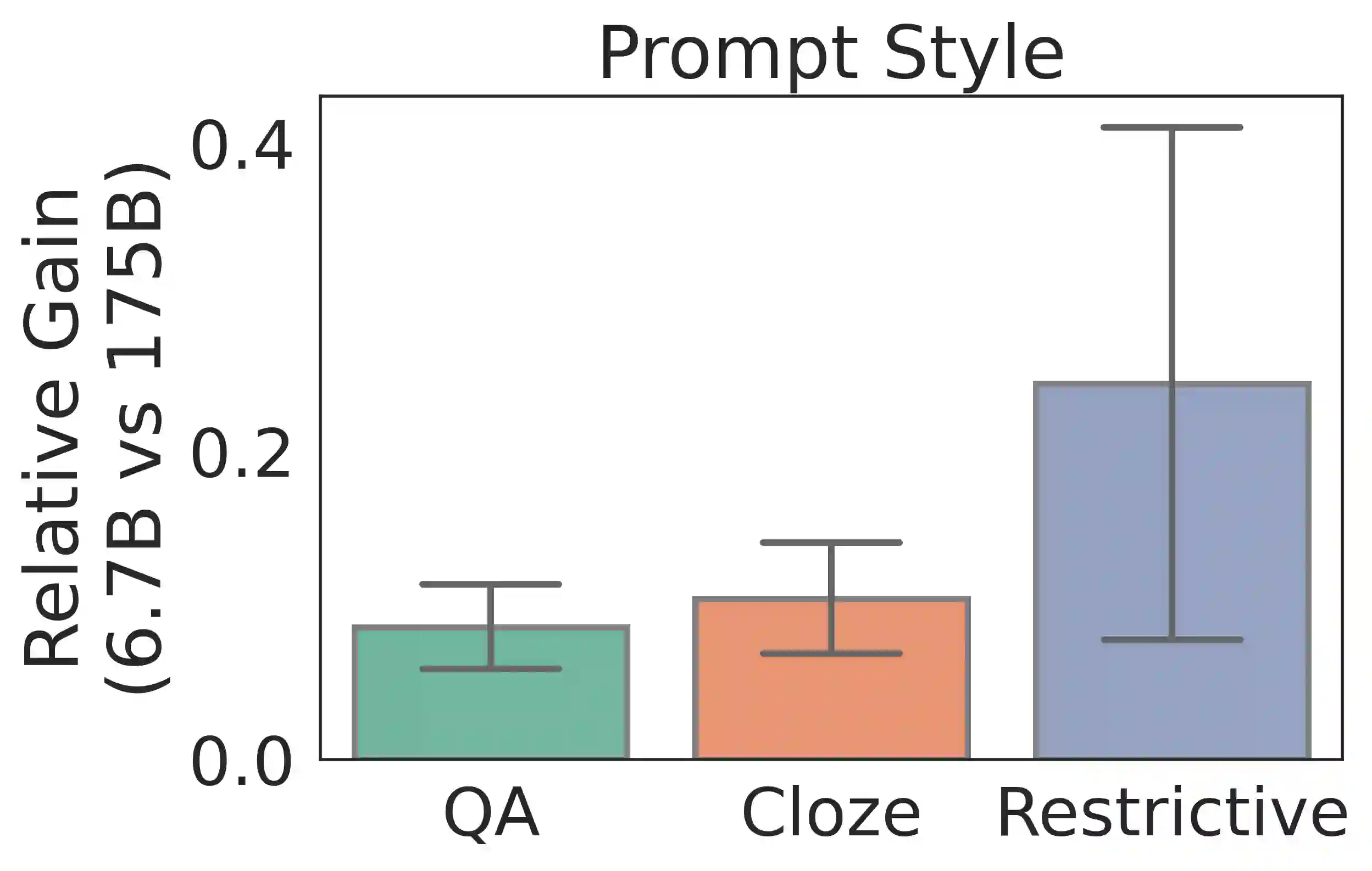

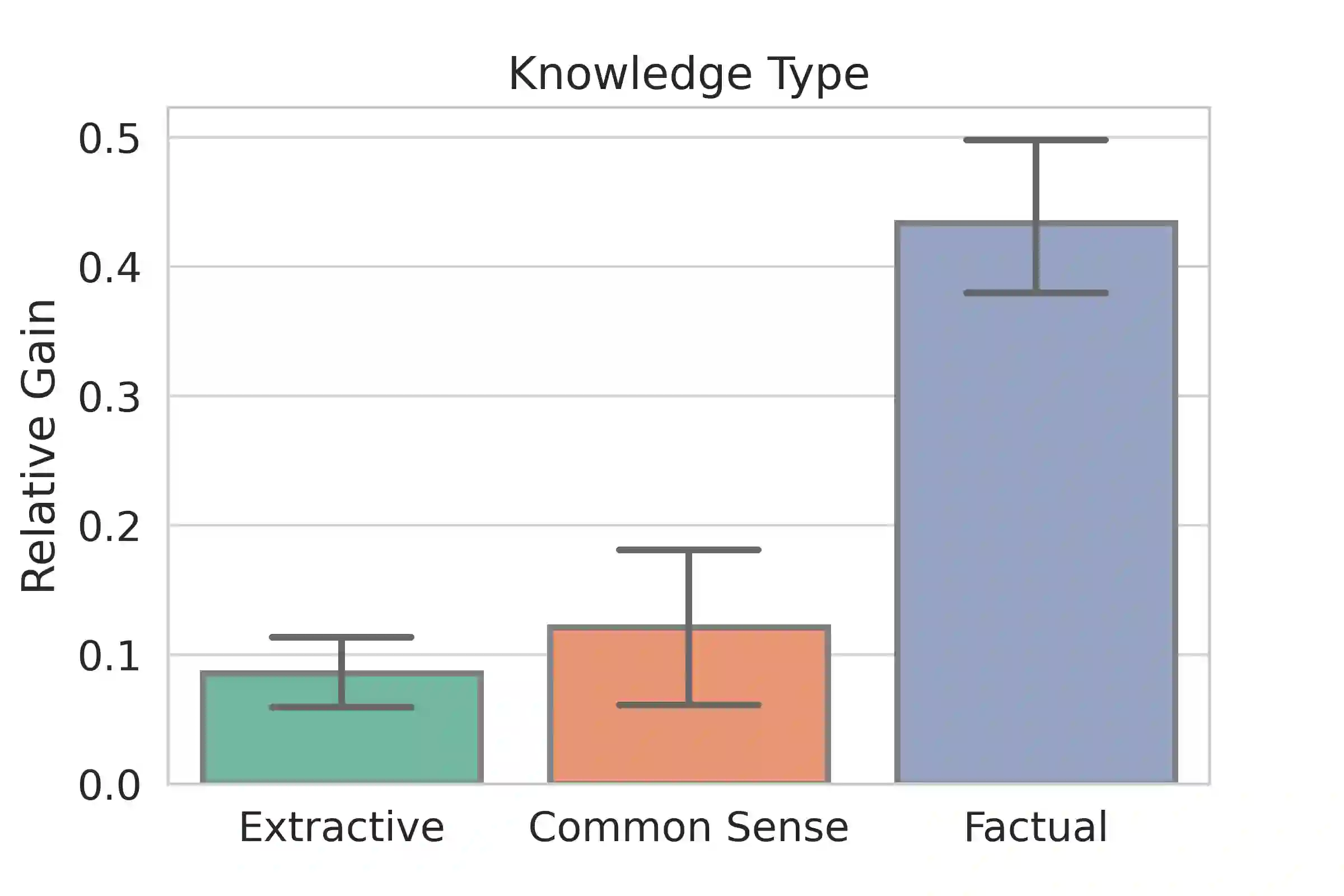

Large language models (LLMs) transfer well to new tasks out of the box, given only a natural language prompt that demonstrates how to perform the task and no additional training. Prompting is a brittle process: small modifications to the prompt can cause large variations in the model's predictions, so significant effort goes into designing a painstakingly "perfect prompt" for each task. To reduce the effort involved in prompt design, we instead ask whether producing multiple effective, yet imperfect, prompts and aggregating them can lead to a high-quality prompting strategy. Our observations motivate our proposed prompting method, ASK ME ANYTHING (AMA). We first develop an understanding of effective prompt formats, finding that question-answering (QA) prompts, which encourage open-ended generation ("Who went to the park?"), tend to outperform those that restrict the model outputs ("John went to the park. Output True or False."). Our approach recursively uses the LLM itself to transform task inputs into the effective QA format. We apply the collected prompts to obtain several noisy votes for the input's true label. We find that the prompts can have very different accuracies and complex dependencies, and thus propose to use weak supervision, a procedure for combining noisy predictions, to produce the final predictions for the inputs. We evaluate AMA across open-source model families (e.g., EleutherAI, BLOOM, OPT, and T0) and model sizes (125M-175B parameters), demonstrating an average performance lift of 10.2% over the few-shot baseline. This simple strategy enables the open-source GPT-J-6B model to match and exceed the performance of few-shot GPT3-175B on 15 of 20 popular benchmarks. Averaged across these tasks, the GPT-J-6B model outperforms few-shot GPT3-175B. We release our code here: https://github.com/HazyResearch/ama_prompting
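The aggregation step can be illustrated with a minimal sketch. It is not the weak supervision procedure AMA uses, which accounts for prompt accuracies and dependencies. It is a simpler stand-in that contrasts a plain majority vote with a log-odds weighted vote, assuming binary labels, independent prompts, and known per-prompt accuracies. The function names, votes, and accuracy values are hypothetical.

```python
import math
from collections import Counter, defaultdict


def majority_vote(votes):
    """Return the most common label; ties go to the label seen first."""
    return Counter(votes).most_common(1)[0][0]


def weighted_vote(votes, accuracies):
    """Weight each vote by the log-odds of its prompt's assumed accuracy.

    Assumes binary labels, independent prompts, and accuracies strictly
    between 0 and 1. These are simplifying assumptions for illustration.
    """
    scores = defaultdict(float)
    for label, acc in zip(votes, accuracies):
        scores[label] += math.log(acc / (1.0 - acc))
    return max(scores, key=scores.get)


# Hypothetical votes from three prompts for one input.
votes = ["yes", "no", "no"]
# Hypothetical accuracies: the first prompt is much more reliable.
accuracies = [0.9, 0.6, 0.6]

print(majority_vote(votes))              # no
print(weighted_vote(votes, accuracies))  # yes
```

The two aggregators disagree here because one reliable prompt outweighs two weak ones, which is the situation where prompts with very different accuracies make a plain majority vote a poor choice.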
