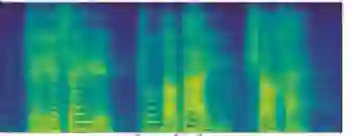

Silent Speech Decoding (SSD), based on articulatory neuromuscular activities, has become a prevalent Brain-Computer Interface (BCI) task in recent years. Much work has been devoted to decoding surface electromyography (sEMG) signals recorded from articulatory neuromuscular activity. However, restoring silent speech in tonal languages such as Mandarin Chinese remains difficult. This paper proposes an optimized Sequence-to-Sequence (Seq2Seq) approach to synthesize voice from sEMG-based silent speech. We extract duration information and use the audio length to regulate the sEMG-based silent speech. We then use a deep-learning model with an encoder-decoder structure and a state-of-the-art vocoder to generate the audio waveform. Experiments with six Mandarin Chinese speakers demonstrate that the proposed model can successfully decode silent speech in Mandarin Chinese, achieving an average character error rate (CER) of 6.41% under human evaluation.
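
For readers unfamiliar with the metric, the following is a minimal sketch of how a character error rate is commonly computed: the character-level edit distance between a reference text and a transcription, divided by the number of reference characters. It assumes that the human evaluation compares listeners' transcriptions of the synthesized audio against the reference text; the abstract does not spell out the protocol. The function names and the example sentences are hypothetical and are not taken from the paper.

```python
from typing import Sequence


def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two character sequences."""
    # prev[j] holds the distance between the processed prefix of ref and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # deletion of a reference character
                curr[j - 1] + 1,     # insertion of a hypothesis character
                prev[j - 1] + cost,  # substitution, or match when cost is 0
            ))
        prev = curr
    return prev[-1]


def character_error_rate(references: Sequence[str], hypotheses: Sequence[str]) -> float:
    """Corpus-level CER: total edits divided by total reference characters."""
    if len(references) != len(hypotheses):
        raise ValueError("references and hypotheses must have the same length")
    total_chars = sum(len(r) for r in references)
    if total_chars == 0:
        raise ValueError("references contain no characters")
    total_edits = sum(edit_distance(r, h) for r, h in zip(references, hypotheses))
    return total_edits / total_chars


# Hypothetical example data (not from the paper): one substitution in the
# first sentence and one deletion in the second, over 10 reference characters.
refs = ["今天天气很好", "我想喝水"]
hyps = ["今天天汽很好", "我想喝"]
print(f"CER = {character_error_rate(refs, hyps):.2%}")
```

Because Mandarin text is written without spaces between words, scoring at the character level avoids depending on a word segmenter, which is one reason CER is the usual choice over word error rate for Chinese.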
