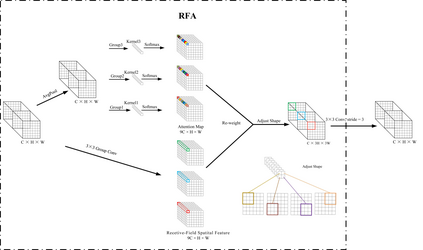

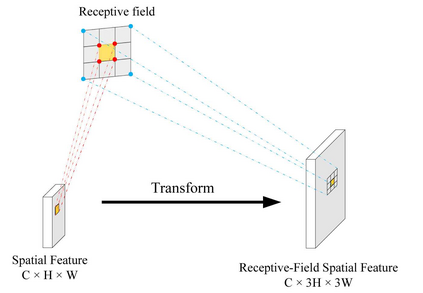

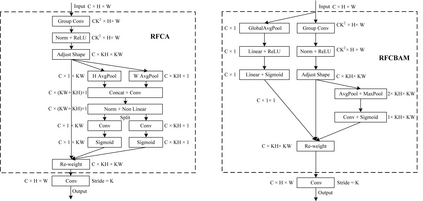

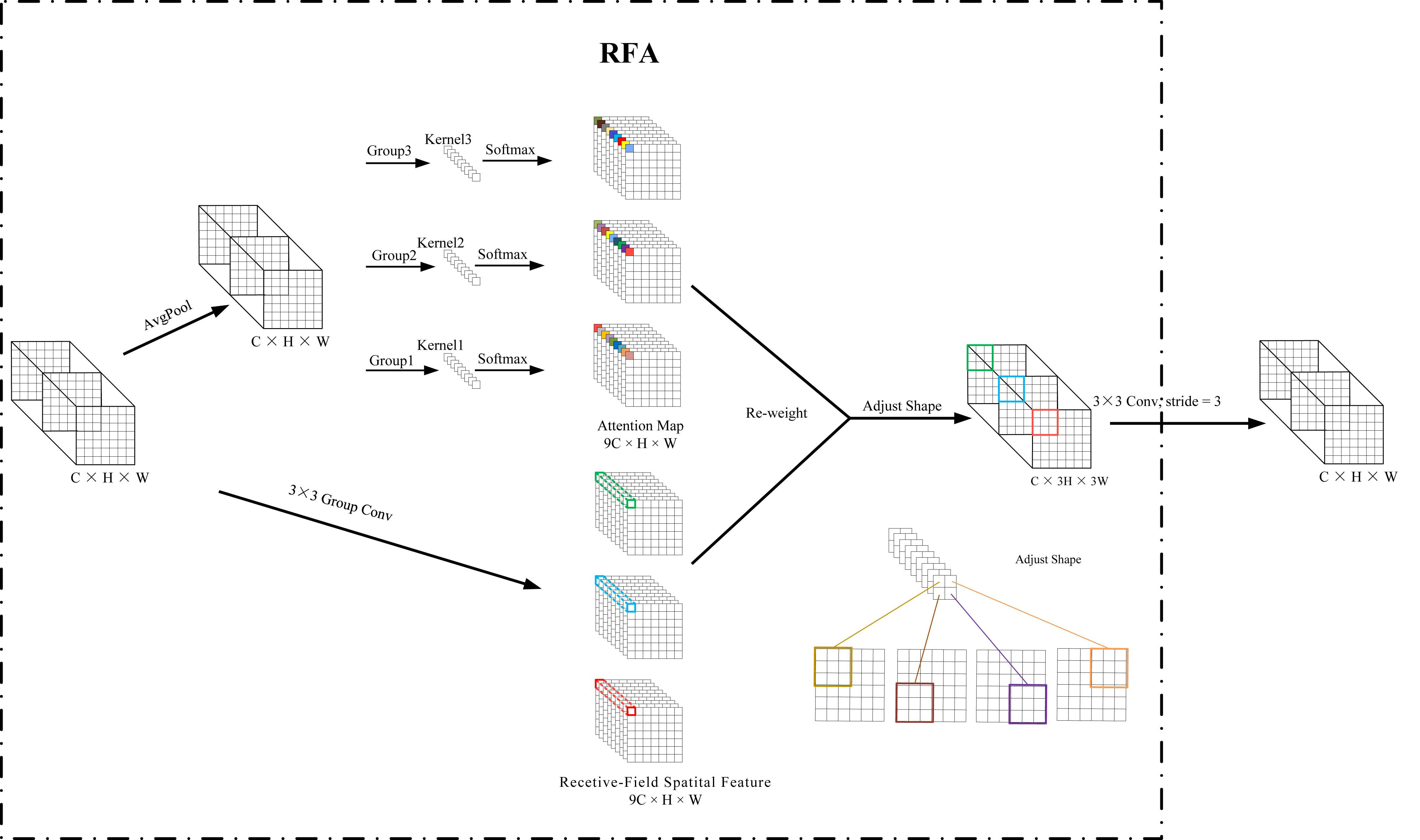

Spatial attention has been widely used to improve the performance of convolutional neural networks, but it has certain limitations. In this paper, we offer a new perspective on why spatial attention is effective: the spatial attention mechanism essentially addresses the problem of parameter sharing in convolutional kernels. However, the attention map generated by spatial attention does not carry enough information for large convolutional kernels. We therefore propose a novel attention mechanism called Receptive-Field Attention (RFA). Existing spatial attention mechanisms, such as the Convolutional Block Attention Module (CBAM) and Coordinate Attention (CA), focus only on spatial features and therefore do not fully resolve the problem of convolutional kernel parameter sharing. In contrast, RFA not only focuses on receptive-field spatial features but also provides effective attention weights for large convolutional kernels. The Receptive-Field Attention convolutional operation (RFAConv), built on RFA, is a new operation intended to replace standard convolution. It adds an almost negligible number of parameters and computational cost while significantly improving network performance. We conducted a series of experiments on the ImageNet-1k, COCO, and VOC datasets to demonstrate the superiority of our approach. Most importantly, we believe it is time for current spatial attention mechanisms to shift their focus from spatial features to receptive-field spatial features, which can further improve network performance. The code and pre-trained models for the relevant tasks are available at https://github.com/Liuchen1997/RFAConv.
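To make the parameter-sharing argument concrete, here is a minimal single-channel 1-D sketch in plain Python. It assumes stride 1 and no padding. It is not the RFAConv implementation from the linked repository. All function names are hypothetical. The spatial attention map `a` is supplied by hand. The receptive-field weights come from an assumed softmax over each sliding window, driven by a made-up score vector `g`. The sketch compares the effective kernel that each window applies under a standard convolution, under spatial attention followed by convolution, and under per-window (receptive-field) attention.

```python
"""Toy 1-D illustration of attention vs. convolution weight sharing.

Hypothetical sketch, not the RFAConv authors' code: single channel, stride 1,
no padding, and toy attention generators chosen only for illustration.
"""
import math
from typing import List


def effective_kernels_plain(w: List[float], n_windows: int) -> List[List[float]]:
    # Standard convolution: every sliding window uses the same shared kernel w.
    return [list(w) for _ in range(n_windows)]


def effective_kernels_spatial(w: List[float], a: List[float]) -> List[List[float]]:
    # Spatial attention multiplies input position p by a[p] before the convolution,
    # so window i effectively uses w[j] * a[i + j]. Kernels now differ per window,
    # but a pixel covered by overlapping windows always gets the same factor a[p].
    k = len(w)
    n_windows = len(a) - k + 1
    return [[w[j] * a[i + j] for j in range(k)] for i in range(n_windows)]


def softmax(v: List[float]) -> List[float]:
    # Numerically stable softmax over a short list.
    m = max(v)
    e = [math.exp(t - m) for t in v]
    s = sum(e)
    return [t / s for t in e]


def effective_kernels_receptive_field(
    w: List[float], x: List[float], g: List[float]
) -> List[List[float]]:
    # Toy receptive-field attention: each window i gets its own k weights, normalised
    # by a softmax over the k positions of that window. The scores g[j] * x[i + j]
    # are an assumed stand-in for a learned weight generator.
    k = len(w)
    n_windows = len(x) - k + 1
    kernels = []
    for i in range(n_windows):
        r = softmax([g[j] * x[i + j] for j in range(k)])
        kernels.append([w[j] * r[j] for j in range(k)])
    return kernels


def apply(kernels: List[List[float]], x: List[float]) -> List[float]:
    # Output i is the dot product of window i's effective kernel with x[i : i + k].
    k = len(kernels[0])
    return [sum(kern[j] * x[i + j] for j in range(k)) for i, kern in enumerate(kernels)]


def factors_for_pixel(kernels: List[List[float]], w: List[float], p: int) -> List[float]:
    # Attention multiplier that each window covering input pixel p applies to it.
    k = len(w)
    return [kernels[i][p - i] / w[p - i] for i in range(len(kernels)) if 0 <= p - i < k]


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0, 4.0, 5.0]
    w = [0.5, 1.0, 0.5]
    a = [0.2, 0.9, 0.4, 0.7, 0.1]  # hypothetical spatial attention map
    g = [1.0, 1.0, 1.0]            # hypothetical per-offset scores
    print(apply(effective_kernels_plain(w, len(x) - len(w) + 1), x))  # [4.0, 6.0, 8.0]
    print(factors_for_pixel(effective_kernels_spatial(w, a), w, 2))
    print(factors_for_pixel(effective_kernels_receptive_field(w, x, g), w, 2))
```

In this toy setting, spatial attention gives pixel `x[2]` the same factor (0.4) in all three windows that cover it, so overlapping windows cannot weight it differently. The receptive-field weights give it roughly 0.665, 0.245, and 0.090 in windows 0, 1, and 2. Larger kernels overlap more, which is consistent with the claim that a single spatial map carries too little information for them.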
