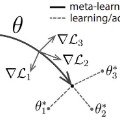

In this paper, we propose an effective and efficient Compositional Federated Learning (ComFedL) algorithm for solving a new compositional federated learning (FL) framework, which arises in many machine learning problems with a hierarchical structure, such as distributionally robust federated learning and model-agnostic meta-learning (MAML). We also analyze the convergence of ComFedL under mild conditions and prove that it achieves a fast convergence rate of $O(\frac{1}{\sqrt{T}})$, where $T$ denotes the number of iterations. To the best of our knowledge, this is the first work to bridge federated learning with stochastic composition optimization. In particular, we first transform distributionally robust FL (i.e., a minimax optimization problem) into a simple composition optimization problem by using KL divergence regularization. We likewise transform the distribution-agnostic MAML problem (also a minimax optimization problem) into a simple composition optimization problem. Finally, we apply our algorithm to two popular machine learning tasks, distributionally robust FL and MAML, to demonstrate its effectiveness.
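To illustrate how KL divergence regularization can turn a minimax problem into a composition problem, the sketch below uses the standard identity $\max_{p \in \Delta_n} \sum_i p_i f_i - \lambda\,\mathrm{KL}(p \,\|\, \tfrac{1}{n}\mathbf{1}) = \lambda \log\big(\tfrac{1}{n}\sum_i \exp(f_i/\lambda)\big)$, in which the outer function $\lambda \log(\cdot)$ is composed with an inner average over clients. It is a minimal numerical check, not the paper's implementation: the uniform reference distribution, the weight $\lambda$ (`lam`), the example loss values, and all function names are assumptions made for illustration, and the exact formulation in the paper may differ.

```python
import math

def kl_regularized_max(losses, lam):
    """Closed form lam * log(mean(exp(f_i / lam))), i.e. outer(inner(losses))."""
    n = len(losses)
    m = max(losses)  # shift by the max for numerical stability
    return m + lam * math.log(sum(math.exp((f - m) / lam) for f in losses) / n)

def optimal_weights(losses, lam):
    """Maximizing client weights: a softmax of losses / lam."""
    m = max(losses)
    w = [math.exp((f - m) / lam) for f in losses]
    total = sum(w)
    return [x / total for x in w]

def regularized_objective(p, losses, lam):
    """Inner maximization objective: sum_i p_i f_i - lam * KL(p || uniform)."""
    n = len(losses)
    linear = sum(pi * fi for pi, fi in zip(p, losses))
    kl = sum(pi * math.log(pi * n) for pi in p if pi > 0)
    return linear - lam * kl

# Hypothetical per-client losses at some fixed model parameters.
losses = [0.2, 1.5, 0.7]
lam = 0.5

p_star = optimal_weights(losses, lam)
closed = kl_regularized_max(losses, lam)
attained = regularized_objective(p_star, losses, lam)
uniform_value = regularized_objective([1 / 3] * 3, losses, lam)
```

Here `attained` matches `closed` up to floating-point error, while any other weighting such as the uniform one gives a value no larger than `closed`. Minimizing `closed` over the model parameters is then a single-level composition problem rather than a minimax one.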
