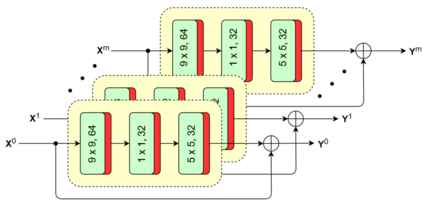

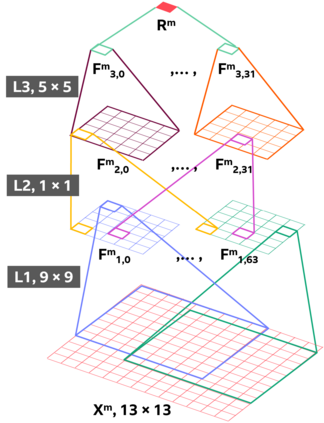

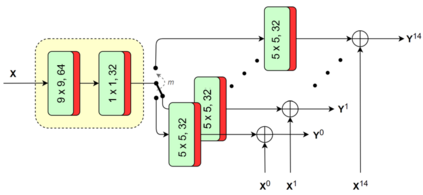

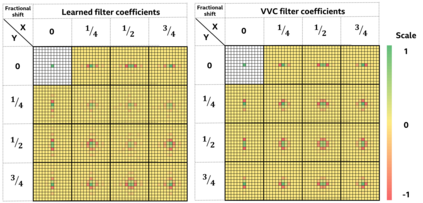

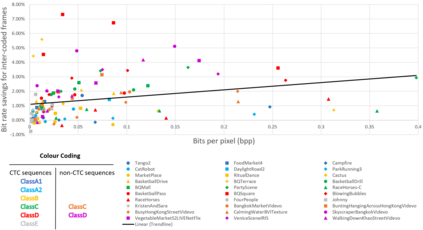

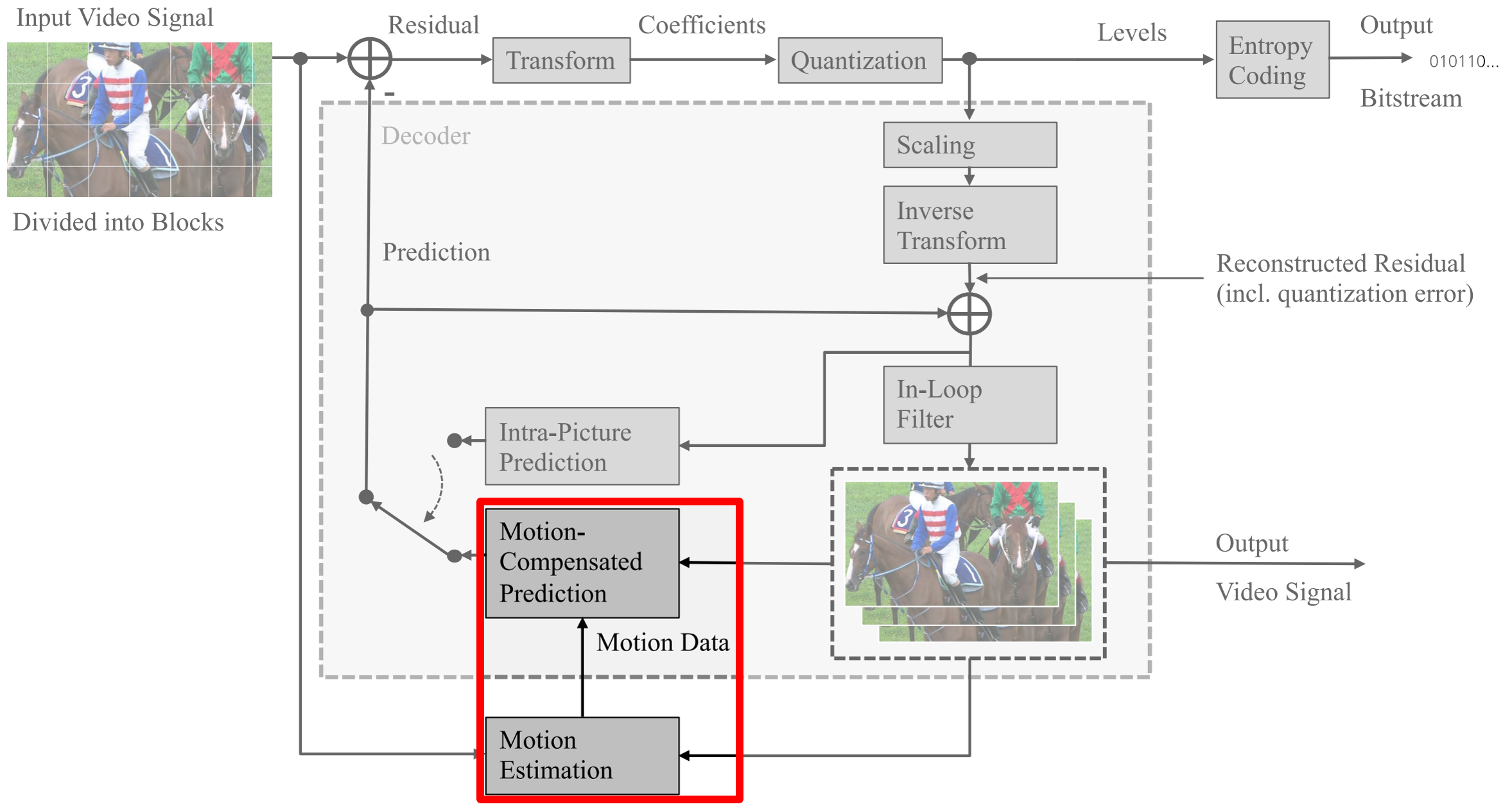

The versatility of recent machine learning approaches makes them well suited to improving next-generation video compression solutions. Unfortunately, these approaches typically bring significant increases in computational complexity and are difficult to interpret as explainable models, which limits their potential for implementation in practical video coding applications. This paper introduces a novel explainable neural network-based inter-prediction scheme that improves the interpolation of reference samples needed for fractional-precision motion compensation. The approach requires training only a single neural network, from which a full quarter-pixel interpolation filter set is derived; this is possible because the network's linear structure makes it easily interpretable. A novel training framework enables each network branch to resemble a specific fractional shift. As a result, this practical solution can be used efficiently alongside conventional video coding schemes. When implemented in the context of the state-of-the-art Versatile Video Coding (VVC) test model, average BD-rate savings of 0.77%, 1.27% and 2.25% are achieved for lower-resolution sequences under the random access, low-delay B and low-delay P configurations, respectively, while the complexity of the learned interpolation schemes is significantly reduced compared with interpolation using full CNNs.
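The key property behind deriving filters from the network is linearity: if every layer is a convolution with no activation function and no bias, stacking layers is equivalent to a single convolution whose kernel is the composition of the layer kernels. The minimal sketch below illustrates that idea in 1-D under stated assumptions. The layer sizes, the weights, the three branch names `"1/4"`, `"1/2"`, `"3/4"`, and all function names are hypothetical and are not taken from the paper; the actual scheme operates on 2-D blocks of reference samples, where the same composition argument applies.

```python
"""Hypothetical sketch: collapsing a linear (activation-free, bias-free) 1-D
network into one interpolation filter per fractional shift.
All weights below are made up for illustration."""
from typing import Dict, List


def correlate_valid(x: List[float], k: List[float]) -> List[float]:
    """'Valid' cross-correlation, i.e. what a CNN convolution layer computes."""
    n_out = len(x) - len(k) + 1
    return [sum(k[i] * x[n + i] for i in range(len(k))) for n in range(n_out)]


def compose(a: List[float], b: List[float]) -> List[float]:
    """Kernel equivalent to correlating with `a` and then with `b`.

    Two successive valid correlations equal one correlation with the
    full convolution of the two kernels (length len(a) + len(b) - 1).
    """
    c = [0.0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


# Hypothetical shared layers: no activations and no biases, so the network is linear.
SHARED: List[List[float]] = [[0.25, 0.5, 0.25], [-0.1, 1.2, -0.1]]

# Hypothetical branch kernels, one per quarter-pel fractional shift.
BRANCHES: Dict[str, List[float]] = {
    "1/4": [0.0, 0.75, 0.25],
    "1/2": [0.0, 0.5, 0.5],
    "3/4": [0.0, 0.25, 0.75],
}


def run_network(x: List[float], shift: str) -> List[float]:
    """Apply the layers one after another, as the network itself would."""
    y = x
    for k in SHARED + [BRANCHES[shift]]:
        y = correlate_valid(y, k)
    return y


def derive_filters() -> Dict[str, List[float]]:
    """Collapse the shared layers plus each branch into a single filter."""
    shared = [1.0]  # identity kernel
    for k in SHARED:
        shared = compose(shared, k)
    return {shift: compose(shared, b) for shift, b in BRANCHES.items()}


if __name__ == "__main__":
    samples = [10.0, 12.0, 15.0, 20.0, 26.0, 30.0, 31.0, 29.0, 25.0]
    for shift, taps in derive_filters().items():
        # The single derived filter reproduces the layer-by-layer output.
        direct = correlate_valid(samples, taps)
        layered = run_network(samples, shift)
        assert all(abs(p - q) < 1e-9 for p, q in zip(direct, layered))
        print(shift, [round(t, 4) for t in taps])
```

Once the filters are derived, the network is no longer needed at inference time: each fractional position costs one fixed-length filter, which is where the complexity saving over running a full CNN comes from in this illustration. Because each hypothetical kernel here sums to 1, every derived filter also sums to 1 (unit DC gain). A real codec integration would additionally need integer taps; VVC's conventional luma interpolation filters, for example, use 8-tap integer coefficients summing to 64.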

翻译:最近的机器学习方法的多功能性使得它们成为改进下一代视频压缩解决方案的理想。 不幸的是,这些方法通常会大大增加计算复杂性,难以被解释为可解释的模式,从而影响其在实际视频编码应用程序中实施的潜力。本文引入了一个新的解释性神经网络基于神经网络的跨孕计划,以改进分精确动作补偿所需的参考样本的内插。该方法要求培训单一神经网络,从中可以产生完整的四分之一平方的内插过滤器,因为网络由于其线性结构很容易解释。一个新的培训框架使每个网络分支都能够类似于特定的分数变化。这一实用解决方案使得与常规视频编码计划一起使用非常高效。在最新VERsatile视频编码(VC)测试模型中实施时,可以平均实现0.77 %、1.27%和2.25%的BD节率节约,因为随机访问、低delay B和低delay P配置下的低分辨率序列下,同时与全程比较了所了解的跨周期计划的复杂性。