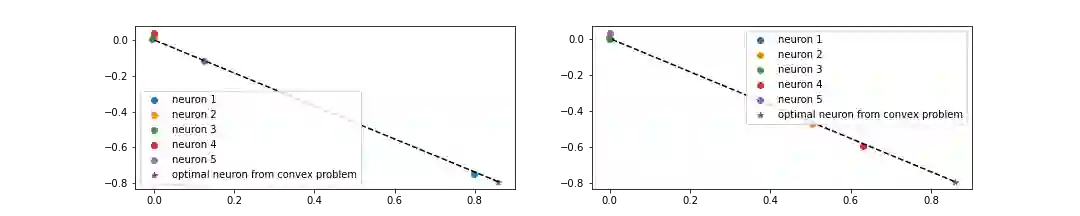

We prove that finding all globally optimal two-layer ReLU neural networks can be performed by solving a convex optimization program with cone constraints. Our analysis is novel, characterizes all optimal solutions, and does not rely on the duality-based analysis that was recently used to lift neural network training into convex spaces. Given the set of solutions of our convex optimization program, we show how to construct exactly the entire set of optimal neural networks. We provide a detailed characterization of this optimal set and its invariant transformations. As additional consequences of our convex perspective, (i) we establish that Clarke stationary points found by stochastic gradient descent correspond to the global optimum of a subsampled convex problem; (ii) we provide a polynomial-time algorithm for checking whether a neural network is a global minimum of the training loss; (iii) we provide an explicit construction of a continuous path between any neural network and the global minimum of its sublevel set; and (iv) we characterize the minimal size of the hidden layer for which the neural network optimization landscape has no spurious valleys. Overall, we provide a rich framework for studying the landscape of the neural network training loss through convexity.
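To make the phrase "cone constraints" concrete, the following is a minimal sketch and not the construction used in this work. It assumes the form common in the literature on convex reformulations of two-layer ReLU networks: each activation pattern $D = \mathrm{diag}(\mathbb{1}[Xu \ge 0])$ of a data matrix $X$ defines a cone $\{v : (2D - I)Xv \ge 0\}$, on which the ReLU acts linearly. The function names `sample_activation_patterns` and `in_cone` are hypothetical, and random sampling only finds a subset of the patterns in general.

```python
import numpy as np

def sample_activation_patterns(X, num_samples=1000, seed=0):
    """Collect distinct ReLU activation patterns 1[X u >= 0] over random Gaussian u.

    Returns an array of shape (P, n) with 0/1 entries, one row per distinct pattern.
    Sampling is a heuristic: it may miss patterns that exact enumeration would find.
    """
    rng = np.random.default_rng(seed)
    U = rng.standard_normal((X.shape[1], num_samples))  # one candidate neuron per column
    patterns = (X @ U >= 0).astype(np.int8)             # shape (n, num_samples)
    return np.unique(patterns.T, axis=0)

def in_cone(X, pattern, v, tol=1e-9):
    """Check the assumed cone constraint (2 D - I) X v >= 0 for D = diag(pattern)."""
    signs = 2 * pattern - 1                             # +1 where active, -1 where inactive
    return bool(np.all(signs * (X @ v) >= -tol))

# Three data points in the plane and a single hidden neuron u.
X = np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]])
u = np.array([1.0, -2.0])
pattern = (X @ u >= 0).astype(np.int8)

# Inside its own cone the ReLU is linear: max(X u, 0) equals D X u.
assert in_cone(X, pattern, u)
assert np.allclose(np.maximum(X @ u, 0.0), pattern * (X @ u))
```

Under this assumed formulation, fixing the pattern turns the nonlinearity into a linear map restricted to a cone, which is why the lifted problem can be convex.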
