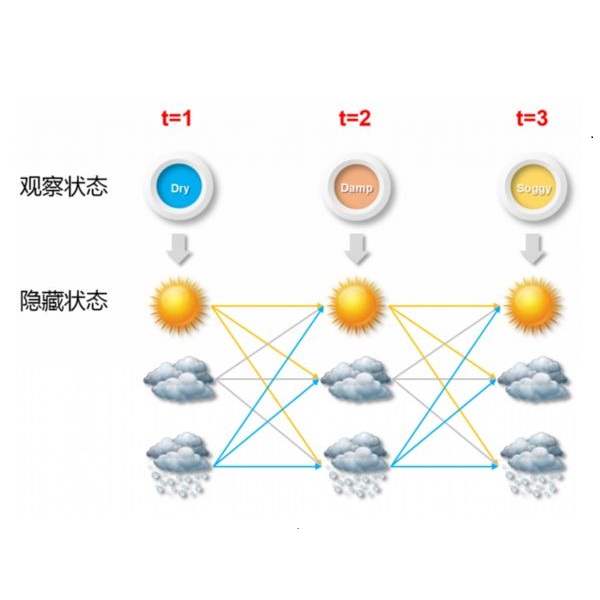

Mixtures of Hidden Markov Models (MHMMs) are frequently used to cluster sequential data. An important property of MHMMs, as of any clustering approach, is that they can be interpretable, which allows novel insights to be gained from the data. However, without a proper way of measuring interpretability, novel contributions are difficult to evaluate, and it becomes practically impossible to devise techniques that directly optimize this property. In this work, an information-theoretic measure (entropy) is proposed for the interpretability of MHMMs. Building on it, a novel approach to improving model interpretability is proposed: an entropy-regularized Expectation Maximization (EM) algorithm. The new approach aims to reduce the entropy of the Markov chains within an MHMM (represented by their state transition matrices), thereby assigning higher weights to common state transitions during clustering. It is argued that this entropy reduction generally improves interpretability, since the most influential and important state transitions of each cluster can be identified more easily. An empirical investigation shows that the interpretability of MHMMs, as measured by entropy, can be improved without sacrificing, and in fact while improving, both clustering performance and computational cost, as measured by the v-measure and the number of EM iterations, respectively.
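The abstract does not spell out exactly how the entropy of an MHMM's Markov chains is aggregated, so the following is only a minimal sketch under stated assumptions: each cluster's chain is scored by the average Shannon entropy of the rows of its transition matrix, and the per-cluster scores are combined by a weighted mean (by default uniform; mixture weights could be passed instead). The function names `row_entropies` and `mhmm_transition_entropy` are hypothetical. The example illustrates the intuition in the text: a transition matrix that concentrates probability on a few common transitions has lower entropy than one that spreads it evenly. In an entropy-regularized EM, a term of this kind, scaled by some weight, would presumably be subtracted from the objective maximized in the M-step, although the exact form used in the paper is not given here.

```python
import numpy as np


def row_entropies(A: np.ndarray) -> np.ndarray:
    """Shannon entropy (in nats) of each row of a row-stochastic matrix A."""
    A = np.asarray(A, dtype=float)
    # By convention 0 * log 0 = 0, so zero entries contribute nothing.
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(A > 0, A * np.log(A), 0.0)
    return -terms.sum(axis=1)


def mhmm_transition_entropy(transition_matrices, cluster_weights=None) -> float:
    """Hypothetical interpretability score for an MHMM (lower = sparser chains).

    Assumption: each cluster is scored by the mean row entropy of its
    transition matrix, and clusters are combined by a weighted mean.
    """
    per_cluster = np.array([row_entropies(A).mean() for A in transition_matrices])
    if cluster_weights is None:
        cluster_weights = np.ones(len(per_cluster))
    w = np.asarray(cluster_weights, dtype=float)
    return float(np.dot(w / w.sum(), per_cluster))


# Usage: an evenly spread chain versus one dominated by common transitions.
uniform = np.full((3, 3), 1 / 3)            # every transition equally likely
peaked = np.array([[0.9, 0.05, 0.05],
                   [0.05, 0.9, 0.05],
                   [0.05, 0.05, 0.9]])      # one dominant transition per state
print(mhmm_transition_entropy([uniform]))   # log(3), about 1.0986
print(mhmm_transition_entropy([peaked]))    # about 0.394
```

Averaging over rows keeps the score comparable across clusters with the same number of hidden states. A weighting by each chain's stationary distribution (giving the entropy rate) would be an equally reasonable alternative.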

翻译:在连续数据组群中,经常使用隐藏的Markov模型(MHMMMs)的混合体(MHMMMs)来对相继数据进行分组。作为任何集群方法,MHMMs的一个重要方面是,它们可以解释,以便从数据中获取新的见解;然而,如果没有适当的解释性衡量方法,评估新贡献是困难的,实际上无法设计出直接优化这种属性的技术。在这项工作中,为MHMMs的可解释性提议了一个信息-理论性测量(元素),并在此基础上,提出了改进模型可解释性的新颖方法,即:一种变正正的预期最大化算法。新的方法旨在减少Markov链(涉及国家过渡矩阵)在MHMMmmm(即对共同状态的过渡性矩阵)中的英特质,即在集群期间对普通状态的过渡赋予更高的权重。据论证,这种昆虫的减少可以提高可解释性,因为可以更容易地发现,因为最有影响力和最重要的国家集群的转换性,即提出了一种变现式的调查调查显示,通过MMMMMMM(测量的可改进性)的可改进性)的可计量和可计量的可改进性。