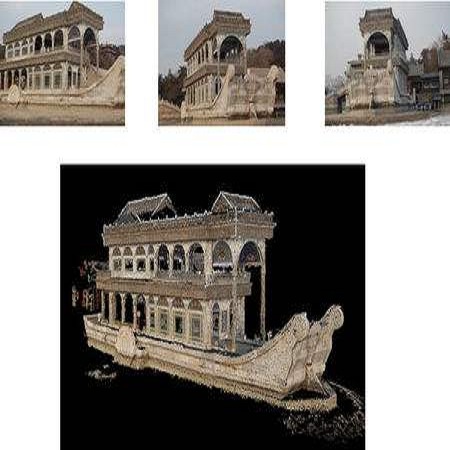

Real-time 3D reconstruction enables fast, dense mapping of the environment, which benefits numerous applications such as navigation or the live evaluation of an emergency. In contrast to most real-time-capable approaches, our approach does not need an explicit depth sensor. Instead, we rely only on a video stream from a camera and its intrinsic calibration. By exploiting the self-motion of an unmanned aerial vehicle (UAV) flying with an oblique view around buildings, we estimate both the camera trajectory and the depth for selected images with enough novel content. To create a 3D model of the scene, we rely on a three-stage processing chain. First, we estimate the rough camera trajectory using a simultaneous localization and mapping (SLAM) algorithm. Second, once a suitable constellation is found, we estimate depth for local bundles of images using a Multi-View Stereo (MVS) approach. Third, we fuse this depth into a global surfel-based model. For our evaluation, we use 55 video sequences with diverse settings, consisting of both synthetic and real scenes. We evaluate not only the generated reconstruction but also the intermediate products, and achieve competitive results both qualitatively and quantitatively. At the same time, our method can keep up with a 30 fps video at a resolution of 768×448 pixels.
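
To make the link between the three stages concrete, the following is a minimal sketch of how a depth map from the MVS stage could be lifted into world-space points using the intrinsic calibration and a camera pose from the SLAM stage. It is not the authors' implementation: it assumes an ideal pinhole camera without lens distortion, a camera-to-world pose given as rotation `R` and translation `t`, and depth measured along the optical axis. All function and parameter names (`backproject`, `camera_to_world`, `depth_map_to_points`, `fx`, `fy`, `cx`, `cy`) are hypothetical. Actual surfel fusion would additionally need normals, radii, and confidence values, which are omitted here.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def backproject(u: float, v: float, depth: float,
                fx: float, fy: float, cx: float, cy: float) -> Vec3:
    """Lift pixel (u, v) with the given depth to a 3D point in the camera frame.

    Assumes a pinhole model: fx, fy are focal lengths in pixels and
    (cx, cy) is the principal point (hypothetical parameter names).
    """
    x = (u - cx) / fx * depth
    y = (v - cy) / fy * depth
    return (x, y, depth)


def camera_to_world(p: Vec3, R: Sequence[Sequence[float]], t: Vec3) -> Vec3:
    """Apply the rigid transform X_world = R * X_camera + t."""
    return tuple(
        sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
    )


def depth_map_to_points(depth_map: Sequence[Sequence[float]],
                        fx: float, fy: float, cx: float, cy: float,
                        R: Sequence[Sequence[float]], t: Vec3) -> List[Vec3]:
    """Convert a depth map (rows of depths) into world-space points.

    Pixels with non-positive depth are treated as invalid and skipped.
    """
    points: List[Vec3] = []
    for v, row in enumerate(depth_map):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # no valid depth estimate for this pixel
            p_cam = backproject(u, v, d, fx, fy, cx, cy)
            points.append(camera_to_world(p_cam, R, t))
    return points


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    # Tiny 1x2 depth map: first pixel invalid, second pixel 2 units away.
    pts = depth_map_to_points([[0.0, 2.0]], 1.0, 1.0, 1.0, 0.0,
                              identity, (1.0, 0.0, 0.0))
    print(pts)
```

In a pipeline like the one described, points produced this way for each selected image would be candidates for merging into the global model; how they are matched against and averaged with existing surfels is a separate design decision not covered by this sketch.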
