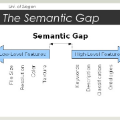

The attention mechanism has been used as an important component across Vision-and-Language (VL) tasks to bridge the semantic gap between visual and textual features. Although attention is widely used in VL tasks, the ability of different attention alignment calculations to bridge the semantic gap between visual and textual clues has not been examined. In this research, we conduct a comprehensive analysis of the role of attention alignment by examining attention score calculation methods and checking how well they represent the significance of visual regions and textual tokens for the global assessment. We also analyse the conditions under which an attention score calculation mechanism is more (or less) interpretable, and how this may affect model performance on three different VL tasks: visual question answering, text-to-image generation, and text-and-image matching (both sentence and image retrieval). Our analysis is the first of its kind and provides useful insights into the importance of each attention alignment score calculation when applied during the training phase of VL tasks, a factor commonly ignored in attention-based cross-modal models and/or pretrained models.
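The abstract does not name the attention alignment score calculations it compares. As a hedged illustration only, the sketch below assumes four commonly used score functions (dot-product, scaled dot-product, bilinear "general", and additive) and shows how each one turns a textual token query and a set of visual region features into attention weights through a softmax. All function names, toy vectors, and weight matrices are hypothetical and are not taken from the paper.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]
Matrix = List[List[float]]
ScoreFn = Callable[[Vector, Vector], float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def matvec(m: Matrix, v: Vector) -> Vector:
    return [dot(row, v) for row in m]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max score for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical selection of alignment score functions (assumed, not from the paper).
def score_dot(q: Vector, k: Vector) -> float:
    # Plain dot product q . k
    return dot(q, k)


def score_scaled_dot(q: Vector, k: Vector) -> float:
    # Dot product scaled by 1/sqrt(d), which flattens the softmax.
    return dot(q, k) / math.sqrt(len(q))


def make_score_general(w: Matrix) -> ScoreFn:
    # Bilinear score q^T W k with a (normally learned) matrix W.
    def score(q: Vector, k: Vector) -> float:
        return dot(q, matvec(w, k))
    return score


def make_score_additive(w_q: Matrix, w_k: Matrix, v: Vector) -> ScoreFn:
    # Additive score v^T tanh(W_q q + W_k k).
    def score(q: Vector, k: Vector) -> float:
        hidden = [math.tanh(a + b) for a, b in zip(matvec(w_q, q), matvec(w_k, k))]
        return dot(v, hidden)
    return score


def attend(query: Vector, regions: List[Vector], score_fn: ScoreFn) -> Tuple[List[float], Vector]:
    # Score every visual region against the textual query, normalise with
    # softmax, and return the weights plus the weighted sum of region features.
    weights = softmax([score_fn(query, r) for r in regions])
    dim = len(regions[0])
    context = [sum(w * r[i] for w, r in zip(weights, regions)) for i in range(dim)]
    return weights, context


if __name__ == "__main__":
    token = [1.0, 0.0]                               # toy textual token query
    regions = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]   # toy visual region features
    identity = [[1.0, 0.0], [0.0, 1.0]]              # untrained toy parameters
    score_fns = {
        "dot": score_dot,
        "scaled_dot": score_scaled_dot,
        "general": make_score_general(identity),
        "additive": make_score_additive(identity, identity, [1.0, 1.0]),
    }
    for name, fn in score_fns.items():
        weights, _ = attend(token, regions, fn)
        print(name, [round(w, 3) for w in weights])
```

With these untrained toy parameters, the dot-product family puts the most weight on the first region, while the additive score prefers the second. This is a small illustration of why the choice of score function can change which visual regions a textual token attends to; in a real model the matrices would be learned during training.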
