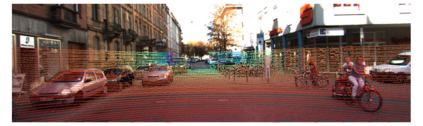

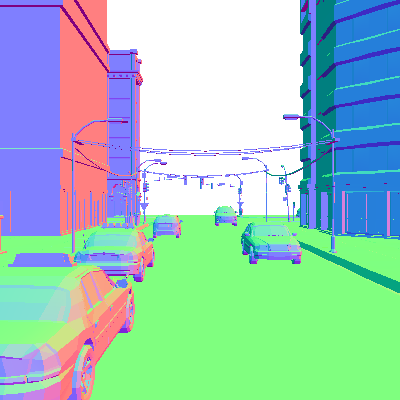

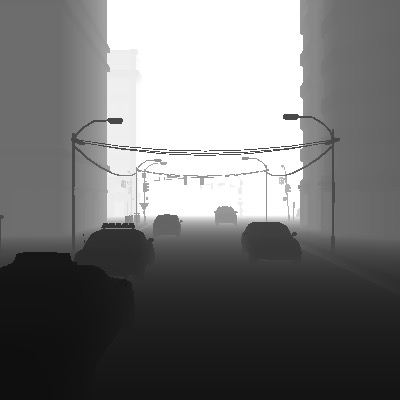

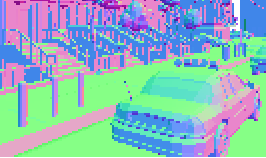

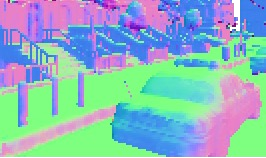

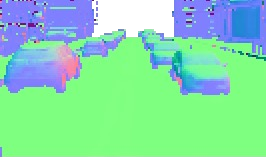

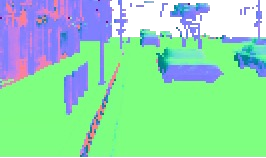

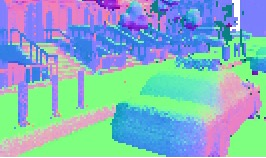

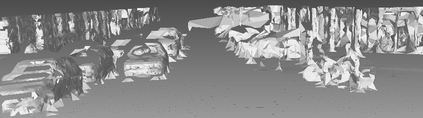

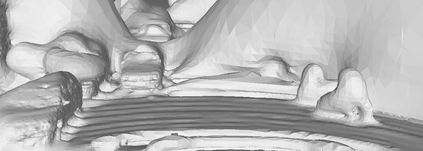

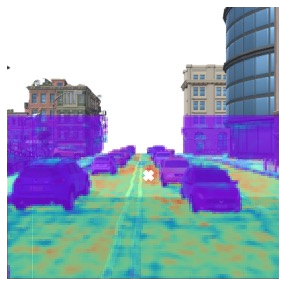

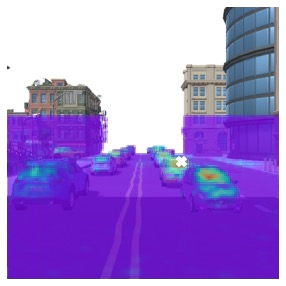

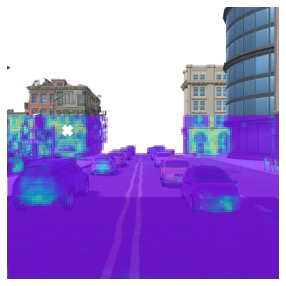

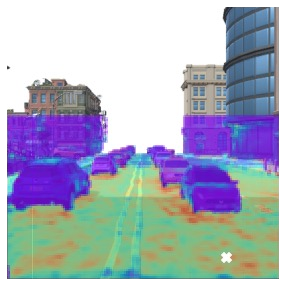

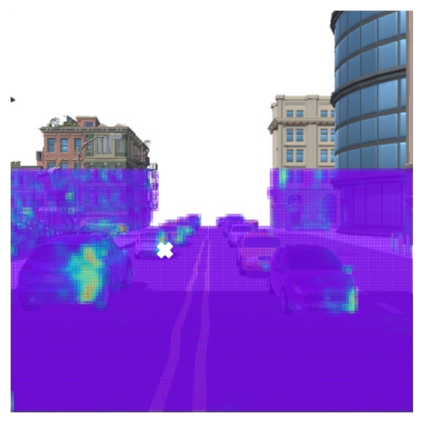

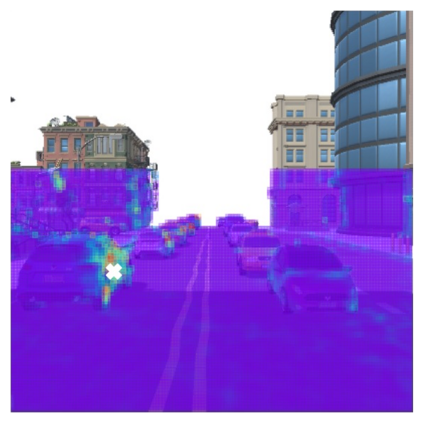

High-quality estimation of surface normals can help reduce ambiguity in many geometry understanding problems, such as collision avoidance and occlusion inference. This paper presents a technique for estimating surface normals from 3D point clouds and 2D colour images. We have developed a transformer neural network that learns to utilise the hybrid information of visual semantic and 3D geometric data, together with effective learning strategies. Experiments show that the proposed method fuses this information more effectively than existing methods. We have also built a simulation environment of outdoor traffic scenes in a 3D rendering engine to obtain annotated data for training the normal estimator. The model trained on synthetic data is tested on real scenes in the KITTI dataset. Subsequent tasks built upon the normal directions estimated on the KITTI dataset show that the proposed estimator has an advantage over existing methods.
