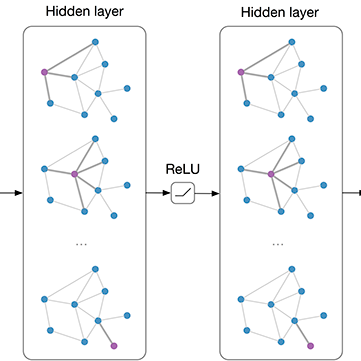

Increasing the depth of Graph Convolutional Networks (GCN), which in principal can permit more expressivity, is shown to incur detriment to the performance especially on node classification. The main cause of this issue lies in \emph{over-smoothing}. As its name implies, over-smoothing drives the output of GCN with the increase in network depth towards a space that contains limited distinguished information among nodes, leading to poor trainability and expressivity. Several works on refining the architecture of deep GCN have been proposed, but the improvement in performance is still marginal and it is still unknown in theory whether or not these refinements are able to relieve over-smoothing. In this paper, we first theoretically analyze the over-smoothing issue for a general family of prevailing GCNs, including generic GCN, GCN with bias, ResGCN, and APPNP. We prove that the over-smoothing of all these models is characterized by an universal process, i.e. all nodes converging to a cuboid of specific structure. Upon this universal theorem, we further propose DropEdge, a novel and flexible technique to alleviate over-smoothing. At its core, DropEdge randomly removes a certain number of edges from the input graph at each training epoch, acting like a data augmenter and also a message passing reducer. Furthermore, we theoretically demonstrate that DropEdge either reduces the convergence speed of over-smoothing for general GCNs or relieves the information loss caused by it. One group of experimental evaluations on simulated dataset has visualized the difference of over-smoothing between different GCNs as well as verifying the validity of our proposed theorems. Moreover, extensive experiments on several real benchmarks support that DropEdge consistently improves the performance on a variety of both shallow and deep GCNs.

翻译:平心而论, 平心而论, 平心而论, 平心而论, 平心而论, 平心而论, 平心而论, 表现仍然微不足道, 在理论中, 这些精细是否能够缓解过度的悬浮。 在本文中, 我们首先从理论上分析当前GCN总体系列的悬浮问题, 包括通用GCN、有偏差的GCN、ResGCN和APNP。 我们证明所有这些模型的超声波都呈现出一种普遍进程, 即: 平心而低调的GCN结构结构, 其性能的改善仍然微不足道, 在理论中, 这些精细微的精细度是否能够缓解过度的偏差。 在本文中,我们首先从理论上分析GCN的超声调问题, 将GCN的超声调问题推向上平面, 进一步降低GEOU的低位, 进一步降低GE值, 不断降低GE的深度的深度, 降低其速度, 降低总电图上的某些数据, 的深度, 不断降低其速度, 不断降低。