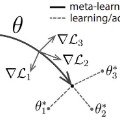

Learning general representations of text is a fundamental problem for many natural language understanding (NLU) tasks. Researchers have previously proposed language model pre-training and multi-task learning to learn robust representations. However, these methods can perform sub-optimally in low-resource scenarios. Inspired by the recent success of optimization-based meta-learning algorithms, we explore the model-agnostic meta-learning (MAML) algorithm and its variants for low-resource NLU tasks. We validate our methods on the GLUE benchmark and show that the proposed models can outperform several strong baselines. We further demonstrate empirically that the learned representations can be adapted to new tasks efficiently and effectively.
