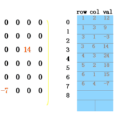

In this paper, we consider enhancing medical visual-language pre-training (VLP) with domain-specific knowledge by exploiting the paired image-text reports from daily radiological practice. In particular, we make the following contributions: First, unlike existing works that directly process the raw reports, we adopt a novel triplet extraction module to extract the medically relevant information, avoiding unnecessary complexity from language grammar and enhancing the supervision signals; Second, we propose a novel triplet encoding module with entity translation by querying a knowledge base, to exploit the rich domain knowledge in the medical field and implicitly build relationships between medical entities in the language embedding space; Third, we propose a Transformer-based fusion model that spatially aligns the entity descriptions with visual signals at the image patch level, enabling medical diagnosis; Fourth, we conduct thorough experiments to validate the effectiveness of our architecture, evaluating on numerous public benchmarks, e.g., ChestX-ray14, RSNA Pneumonia, SIIM-ACR Pneumothorax, COVIDx CXR-2, COVID Rural, and EdemaSeverity. In both zero-shot and fine-tuning settings, our model demonstrates strong performance compared with prior methods on disease classification and grounding.

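To make the idea of triplet encoding with entity translation more concrete, the following is a minimal sketch, not the authors' implementation. It assumes that a triplet holds an entity, a position, and an existence flag (the abstract does not specify the fields), and it uses a hypothetical in-memory dictionary, `TOY_KNOWLEDGE_BASE`, in place of a real medical knowledge base. All names and definitions are illustrative placeholders.

```python
from dataclasses import dataclass

# Hypothetical toy knowledge base: entity name -> short textual description.
# Entries are illustrative placeholders, not taken from the paper.
TOY_KNOWLEDGE_BASE = {
    "pneumonia": "an infection that inflames the air sacs of the lung",
    "pleural effusion": "a build-up of excess fluid around the lung",
}


@dataclass(frozen=True)
class Triplet:
    """One finding from a report; the field layout is an assumption."""
    entity: str
    position: str
    exists: bool


def translate_entity(entity: str, knowledge_base: dict) -> str:
    """Replace an entity name with its knowledge-base description.

    Falls back to the bare name when the entity is not in the knowledge base.
    """
    return knowledge_base.get(entity.lower(), entity)


def triplet_to_text(triplet: Triplet, knowledge_base: dict) -> dict:
    """Build the text inputs that a language encoder could then embed."""
    return {
        "entity_text": translate_entity(triplet.entity, knowledge_base),
        "position_text": triplet.position,
        "label": int(triplet.exists),
    }


example = Triplet(entity="Pneumonia", position="left lower lung", exists=True)
encoded = triplet_to_text(example, TOY_KNOWLEDGE_BASE)
```

In this sketch, the description returned by `translate_entity` stands in for the text that would be passed to a language encoder, so that entities with related descriptions could end up close together in the embedding space. The fallback to the bare entity name is a design choice of the sketch, not something stated in the abstract.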