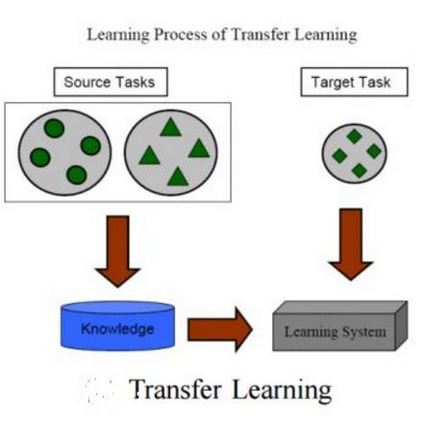

Powered prosthetic legs must anticipate the user's intent when switching between locomotion modes (e.g., level walking, stair ascent/descent, ramp ascent/descent). Numerous data-driven classification techniques have shown promising results for predicting user intent, but these intent prediction models still perform poorly on novel subjects. In other domains (e.g., image classification), transfer learning has improved classification accuracy by reusing features learned from a large dataset (i.e., pre-trained models) and transferring the learned model to a new task for which only a smaller dataset is available. In this paper, we develop a deep convolutional neural network and evaluate it with intra-subject (subject-dependent) and inter-subject (subject-independent) validation on a human locomotion dataset. We then apply transfer learning to the subject-independent model using a small portion (10%) of the data from the left-out subject, and compare the performance of the three models. Our results indicate that the transfer learning (TL) model outperforms the subject-independent (IND) model and is comparable to the subject-dependent (DEP) model (DEP error: 0.74 $\pm$ 0.002%, IND error: 11.59 $\pm$ 0.076%, TL error: 3.57 $\pm$ 0.02% with 10% data). Moreover, as expected, transfer learning accuracy increases as more data from the left-out subject becomes available. We also evaluate the intent prediction system under various sensor configurations that may be available in a prosthetic leg application. Our results suggest that a thigh IMU on the prosthesis is sufficient to predict locomotion intent in practice.
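
The three evaluation settings differ mainly in how the data are split, so a small sketch of the split may help. The Python below is an illustration under stated assumptions, not the authors' code: the function name `loso_transfer_splits`, the random sampling of the fine-tuning portion, and the toy subject labels are all hypothetical. For each left-out subject it returns the indices for pre-training the subject-independent model (all other subjects), the small portion (10% by default) used for transfer learning, and the remaining left-out data used for testing.

```python
import random


def loso_transfer_splits(subject_ids, finetune_fraction=0.10, seed=0):
    """Yield (held_out, pretrain_idx, finetune_idx, test_idx) for each subject.

    Assumption: the fine-tuning portion is sampled at random from the
    left-out subject; the paper may select it differently.
    """
    rng = random.Random(seed)
    for held_out in sorted(set(subject_ids)):
        # Subject-independent pre-training data: every other subject.
        pretrain_idx = [i for i, s in enumerate(subject_ids) if s != held_out]
        # Data from the left-out subject, shuffled before splitting.
        held_idx = [i for i, s in enumerate(subject_ids) if s == held_out]
        rng.shuffle(held_idx)
        n_finetune = max(1, round(finetune_fraction * len(held_idx)))
        finetune_idx = sorted(held_idx[:n_finetune])  # used for transfer learning
        test_idx = sorted(held_idx[n_finetune:])      # never seen during training
        yield held_out, pretrain_idx, finetune_idx, test_idx


# Toy data: 3 hypothetical subjects with 20 samples each.
subjects = [s for s in (0, 1, 2) for _ in range(20)]
splits = list(loso_transfer_splits(subjects))
```

Under this sketch, the IND model would be trained on `pretrain_idx` and evaluated on `test_idx`, while the TL model would additionally be fine-tuned on `finetune_idx` before evaluation on the same `test_idx`. For windowed time-series data, a contiguous rather than random fine-tuning portion may be preferable, to avoid overlap between fine-tuning and test windows.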
