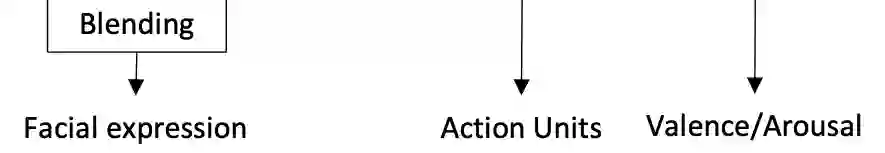

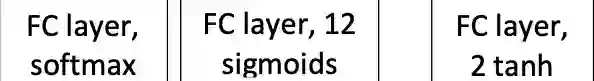

In this paper, we present the results of the HSE-NN team in the 4th competition on Affective Behavior Analysis in-the-wild (ABAW). A novel multi-task EfficientNet model is trained to simultaneously recognize facial expressions and predict valence and arousal on static photos. The resulting model, MT-EmotiEffNet, extracts visual features that are fed into simple feed-forward neural networks in the multi-task learning challenge. On the validation set, we obtain a performance measure of 1.3, which is significantly higher than both the baseline (0.3) and existing models trained only on the s-Aff-Wild2 database. In the learning from synthetic data challenge, the quality of the original synthetic training set is improved using super-resolution techniques such as Real-ESRGAN. MT-EmotiEffNet is then fine-tuned on the new training set. The final prediction is a simple blending ensemble of the pre-trained and fine-tuned MT-EmotiEffNets. Our average validation F1 score is 18% greater than that of the baseline convolutional neural network.
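The blending step for the synthetic data challenge can be illustrated with a minimal sketch. It assumes that each model outputs a vector of class probabilities per image and that blending is a weighted average of those vectors; the abstract does not specify the blending weights or the implementation, so the function names `blend_probabilities` and `predict_class`, the `weight` parameter, and the example numbers below are all hypothetical.

```python
from typing import List, Sequence


def blend_probabilities(
    p_pretrained: Sequence[float],
    p_finetuned: Sequence[float],
    weight: float = 0.5,
) -> List[float]:
    """Weighted average of two models' class-probability vectors.

    `weight` is the share given to the pre-trained model (an assumed
    hyperparameter; the abstract does not report the value used).
    """
    if len(p_pretrained) != len(p_finetuned):
        raise ValueError("both models must score the same set of classes")
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must lie in [0, 1]")
    return [
        weight * a + (1.0 - weight) * b
        for a, b in zip(p_pretrained, p_finetuned)
    ]


def predict_class(probabilities: Sequence[float]) -> int:
    """Index of the highest blended score."""
    return max(range(len(probabilities)), key=lambda i: probabilities[i])


# Illustrative scores for three classes (made-up numbers, not paper results).
pretrained_scores = [0.6, 0.3, 0.1]
finetuned_scores = [0.2, 0.7, 0.1]

blended = blend_probabilities(pretrained_scores, finetuned_scores, weight=0.5)
label = predict_class(blended)
```

With equal weights, the blended prediction follows whichever model is more confident overall; in practice the weight would presumably be tuned on the validation set.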
