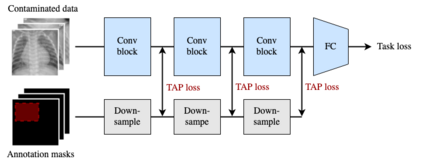

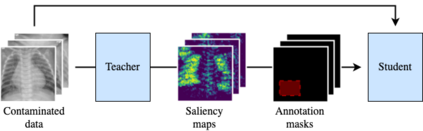

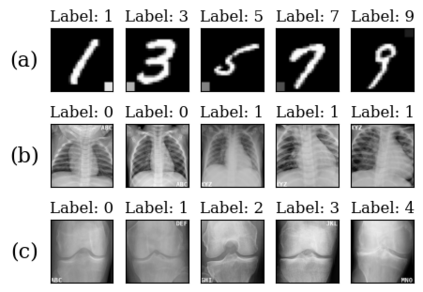

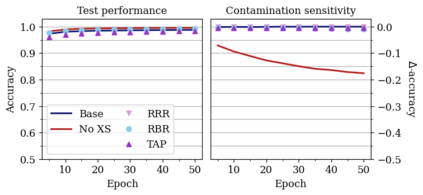

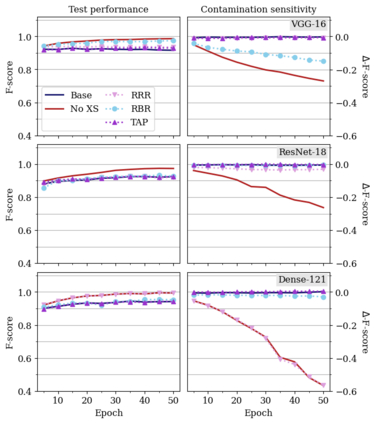

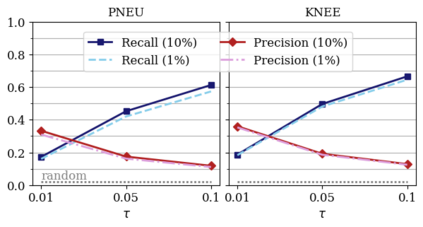

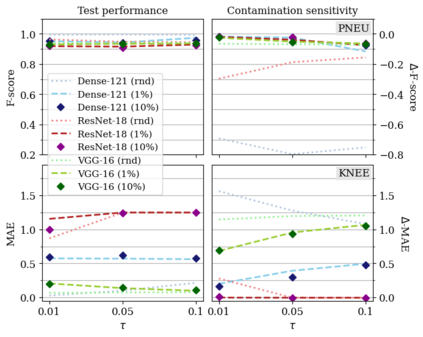

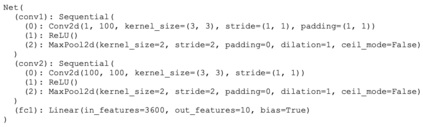

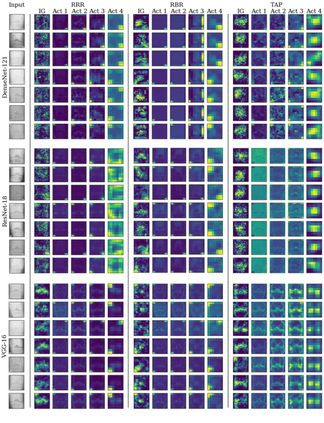

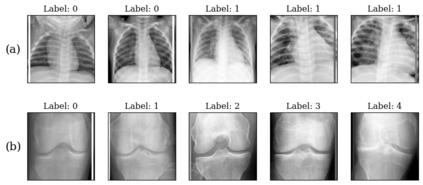

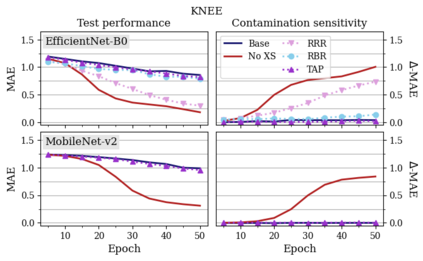

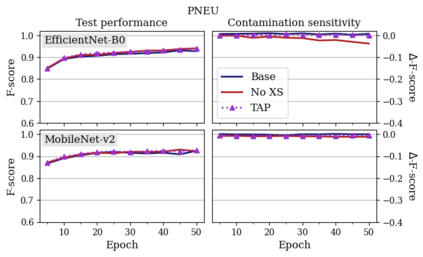

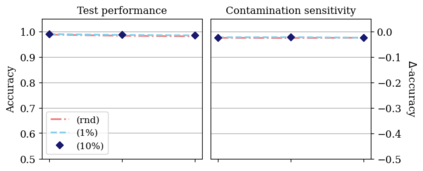

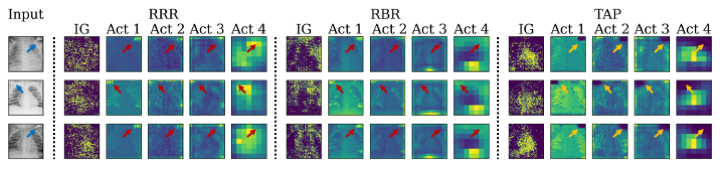

Neural networks (NNs) can learn to rely on spurious signals in the training data, leading to poor generalisation. Recent methods tackle this problem by training NNs with additional ground-truth annotations of such signals. These methods may, however, let spurious signals re-emerge in deep convolutional NNs (CNNs). We propose Targeted Activation Penalty (TAP), a new method tackling the same problem by penalising activations to control the re-emergence of spurious signals in deep CNNs, while also lowering training times and memory usage. In addition, ground-truth annotations can be expensive to obtain. We show that TAP still works well with annotations generated by pre-trained models as effective substitutes of ground-truth annotations. We demonstrate the power of TAP against two state-of-the-art baselines on the MNIST benchmark and on two clinical image datasets, using four different CNN architectures.

翻译:暂无翻译