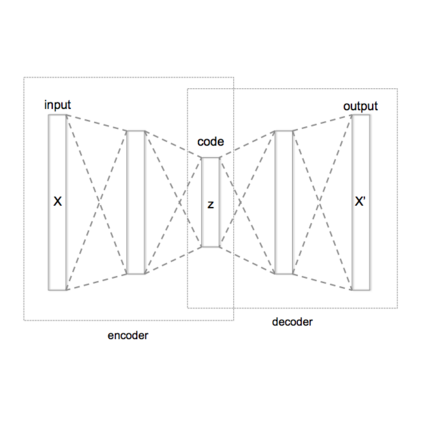

Generative models have emerged as a promising technique for producing high-quality images that are difficult to distinguish from real ones. Generative adversarial networks (GANs) and variational autoencoders (VAEs) are two of the most prominent and widely studied generative models. GANs have demonstrated excellent performance in generating sharp, realistic images, and VAEs have shown a strong ability to generate diverse images. However, GANs are prone to mode collapse: they can ignore large portions of the possible output space and so fail to represent the full diversity of the target distribution. VAEs, in turn, tend to produce blurry images. To capitalize on the strengths of both models while mitigating their weaknesses, we employ a Bayesian non-parametric (BNP) approach to merge GANs and VAEs. Our procedure incorporates both the Wasserstein distance and the maximum mean discrepancy (MMD) in the loss function to enable effective learning of the latent space and the generation of diverse, high-quality samples. By fusing the discriminative power of GANs with the reconstruction capabilities of VAEs, our model achieves superior performance in various generative tasks, such as anomaly detection and data augmentation. Furthermore, we enhance the model's capability by employing an extra generator in the code space, which allows us to explore areas of the code space that the VAE might have overlooked. The BNP perspective lets us model the data distribution in an infinite-dimensional space, which gives the model greater flexibility and reduces the risk of overfitting. Within this framework, we improve on both GANs and VAEs to obtain a more robust generative model suitable for a range of applications.
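
To make the MMD term mentioned above concrete, the following is a minimal sketch of a sample-based estimate of the squared MMD between two sets of points. It is not the loss used in this work: the Gaussian kernel, the fixed `bandwidth`, the biased (V-statistic) form, and the function names `gaussian_kernel` and `mmd_squared` are all assumptions chosen for illustration, and the BNP weighting of samples is not modelled.

```python
import numpy as np


def gaussian_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel matrix between rows of x and rows of y.

    The kernel choice and the bandwidth are illustrative assumptions.
    """
    # Pairwise squared Euclidean distances via broadcasting.
    sq_dists = (
        np.sum(x ** 2, axis=1)[:, None]
        + np.sum(y ** 2, axis=1)[None, :]
        - 2.0 * x @ y.T
    )
    # Clip tiny negative values caused by floating-point round-off.
    sq_dists = np.maximum(sq_dists, 0.0)
    return np.exp(-sq_dists / (2.0 * bandwidth ** 2))


def mmd_squared(x, y, bandwidth=1.0):
    """Biased (V-statistic) estimate of the squared MMD between samples x and y."""
    k_xx = gaussian_kernel(x, x, bandwidth)
    k_yy = gaussian_kernel(y, y, bandwidth)
    k_xy = gaussian_kernel(x, y, bandwidth)
    return k_xx.mean() + k_yy.mean() - 2.0 * k_xy.mean()


# Toy data standing in for "real" and "generated" samples (hypothetical).
rng = np.random.default_rng(0)
real = rng.normal(0.0, 1.0, size=(200, 2))
same = rng.normal(0.0, 1.0, size=(200, 2))      # same distribution as `real`
shifted = rng.normal(3.0, 1.0, size=(200, 2))   # clearly different distribution

mmd_same = mmd_squared(real, same)
mmd_shifted = mmd_squared(real, shifted)
print(f"MMD^2 (same distribution):    {mmd_same:.4f}")
print(f"MMD^2 (shifted distribution): {mmd_shifted:.4f}")
```

In this toy setting the estimate stays close to zero when both samples come from the same distribution and grows when one of them is shifted, which is the property that makes MMD usable as a training signal for matching generated samples to data.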
