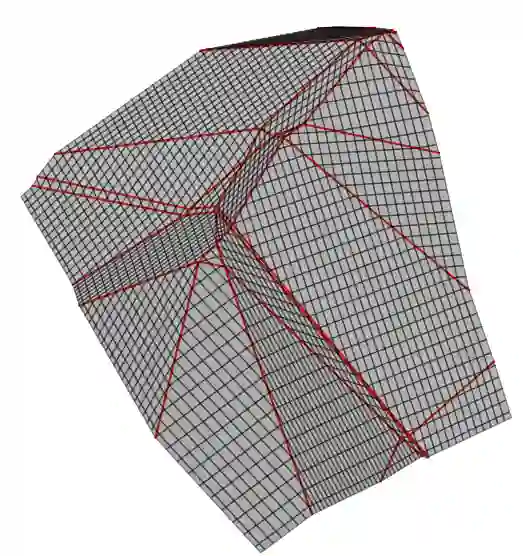

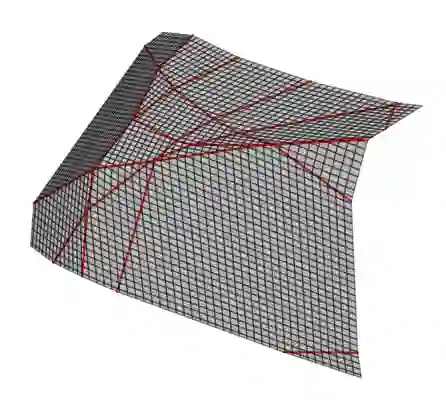

A big mystery in deep learning continues to be the ability of methods to generalize when the number of model parameters is larger than the number of training examples. In this work, we take a step towards a better understanding of the phenomena underlying Deep Autoencoders (AEs), a mainstream deep learning solution for learning compressed, interpretable, and structured data representations. In particular, we interpret how AEs approximate the data manifold by exploiting their continuous piecewise affine structure. Our reformulation of AEs provides new insights into their mapping and reconstruction guarantees, as well as an interpretation of commonly used regularization techniques. We leverage these findings to derive two new regularizations that enable AEs to capture the inherent symmetry in the data. Our regularizations build on recent advances in learning groups of transformations, enabling AEs to better approximate the data manifold without explicitly defining the group underlying the manifold. Under the assumption that the symmetry of the data can be explained by a Lie group, we prove that the regularizations ensure the generalization of the corresponding AEs. A range of experimental evaluations demonstrates that our methods outperform other state-of-the-art regularization techniques.
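To make the "continuous piecewise affine" claim concrete, the following is a minimal sketch, not the paper's formulation or code. It assumes a hypothetical toy ReLU autoencoder (layer sizes `4 -> 8 -> 2 -> 8 -> 4`, random weights) and hypothetical helper names `forward` and `local_affine_map`. On the region of input space that shares one ReLU activation pattern, the whole network collapses to a single affine map `A @ x + c`, which the sketch recovers explicitly.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy autoencoder (an assumption for illustration only):
# 4 -> 8 -> 2 -> 8 -> 4, ReLU on every layer except the last.
sizes = [4, 8, 2, 8, 4]
weights = [rng.standard_normal((m, n)) for n, m in zip(sizes[:-1], sizes[1:])]
biases = [rng.standard_normal(m) for m in sizes[1:]]


def forward(x):
    """Plain forward pass through the toy ReLU autoencoder."""
    h = x
    for i, (W, b) in enumerate(zip(weights, biases)):
        h = W @ h + b
        if i < len(weights) - 1:
            h = np.maximum(h, 0.0)
    return h


def local_affine_map(x):
    """Return (A, c) with forward(x) == A @ x + c on the region containing x."""
    A = np.eye(len(x))
    c = np.zeros(len(x))
    h = x
    for i, (W, b) in enumerate(zip(weights, biases)):
        # Compose the running affine map with this layer's affine part.
        A = W @ A
        c = W @ c + b
        h = W @ h + b
        if i < len(weights) - 1:
            # ReLU acts as a 0/1 diagonal mask fixed by the activation pattern.
            mask = (h > 0).astype(h.dtype)
            A = mask[:, None] * A
            c = mask * c
            h = mask * h
    return A, c


x = rng.standard_normal(4)
A, c = local_affine_map(x)
reconstruction = forward(x)
affine_reconstruction = A @ x + c
```

Here `reconstruction` and `affine_reconstruction` agree up to floating-point error. The pair `(A, c)` changes only when the input crosses into a region with a different activation pattern, which is the sense in which such a network is piecewise affine.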
