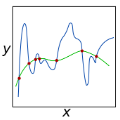

In recent years, a plethora of spectral graph neural network (GNN) methods have used polynomial bases with learnable coefficients to achieve top-tier performance on many node-level tasks. Although various kinds of polynomial bases have been explored, each such method adopts a fixed polynomial basis, which might not be the optimal choice for the given graph. In addition, we identify the so-called over-passing issue of these methods and show that it stems in part from their less principled regularization strategy and unnormalized bases. In this paper, we make the first attempt to address these two issues. Leveraging Jacobi polynomials, we design a novel spectral GNN, LON-GNN, with Learnable OrthoNormal bases, and prove that, with such bases, regularizing the coefficients becomes equivalent to regularizing the norm of the learned filter function. We conduct extensive experiments on diverse graph datasets to evaluate the fitting and generalization capability of LON-GNN, and the results indicate its superiority.
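The link between coefficient regularization and the filter norm can be illustrated with a short derivation. This is a sketch under assumed notation, not the paper's exact statement: the filter $h$, the coefficients $\alpha_k$, the weight $w$, and the Jacobi parameters $a, b > -1$ are names chosen here for illustration. Suppose the filter is expanded on $\lambda \in [-1, 1]$ in a basis $\{p_k\}$ that is orthonormal with respect to the Jacobi weight:

$$
h(\lambda) = \sum_{k=0}^{K} \alpha_k \, p_k(\lambda), \qquad
\langle f, g \rangle_w = \int_{-1}^{1} f(\lambda)\, g(\lambda)\, w(\lambda)\, d\lambda, \qquad
w(\lambda) = (1-\lambda)^{a} (1+\lambda)^{b}.
$$

If $\langle p_j, p_k \rangle_w = \delta_{jk}$, the cross terms vanish and the squared norm of the filter reduces to the squared $\ell_2$ norm of the coefficients:

$$
\|h\|_w^2 = \sum_{j=0}^{K} \sum_{k=0}^{K} \alpha_j \alpha_k \, \langle p_j, p_k \rangle_w = \sum_{k=0}^{K} \alpha_k^2 .
$$

With a basis $\{P_k\}$ that is orthogonal but not normalized, the same computation gives

$$
\|h\|_w^2 = \sum_{k=0}^{K} \alpha_k^2 \, \|P_k\|_w^2 ,
$$

so a plain $\ell_2$ penalty on the coefficients no longer matches the filter norm and instead weights each degree unevenly. For instance, in the Legendre case ($a = b = 0$) the standard polynomials satisfy $\|P_k\|_w^2 = 2/(2k+1)$, which shrinks as the degree $k$ grows.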
