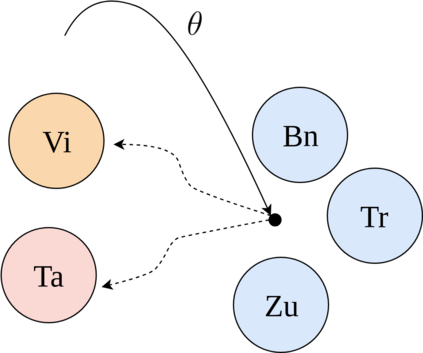

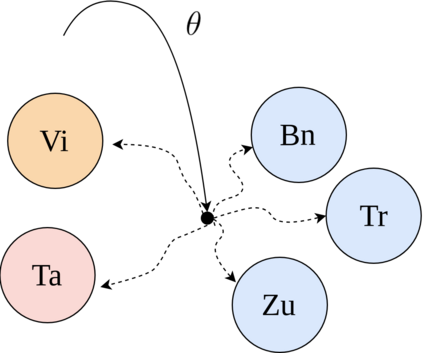

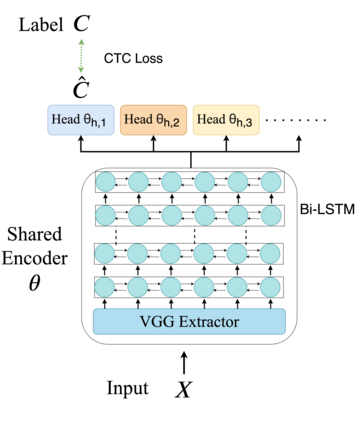

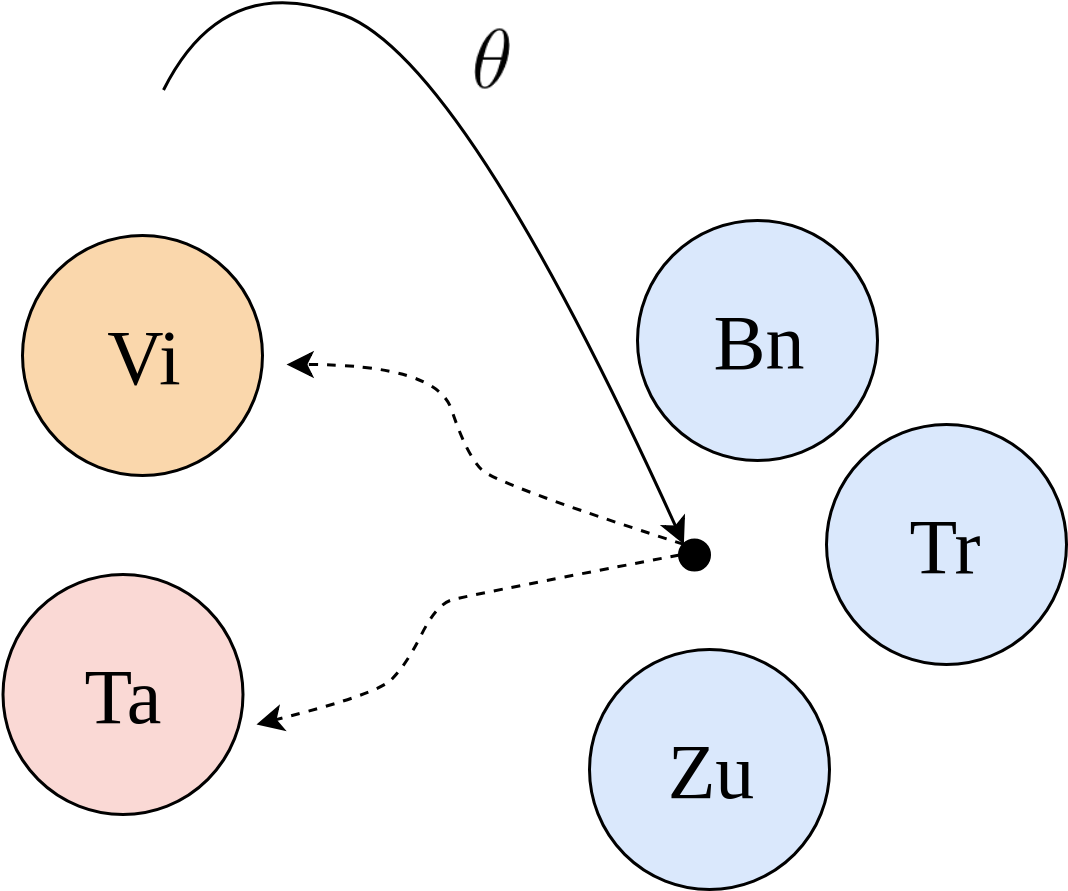

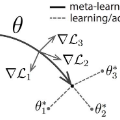

In this paper, we propose applying a meta-learning approach to low-resource automatic speech recognition (ASR). We formulate ASR for different languages as different tasks and meta-learn the initialization parameters from many pretraining languages, so that the model adapts quickly to an unseen target language, using the recently proposed model-agnostic meta-learning (MAML) algorithm. We evaluate the proposed approach using six languages as pretraining tasks and four languages as target tasks. Preliminary results show that the proposed method, MetaASR, significantly outperforms the state-of-the-art multitask pretraining approach on all target languages and across different combinations of pretraining languages. In addition, because MAML is model-agnostic, this work opens a new research direction: applying meta-learning to more speech-related applications.
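The idea of meta-learning an initialization that adapts quickly to a new task can be illustrated with a minimal sketch. This is not the MetaASR implementation: it assumes a toy setting in which each "task" is a scalar regression `y = slope * x`, standing in for one language, and the model is a single weight `w`. The function names (`loss_grad_hess`, `sample_task`, `maml_train`, `adapt`) and all hyperparameters are hypothetical choices made for illustration. Because the toy loss is quadratic, the exact MAML meta-gradient can be written in closed form as the query gradient at the adapted weight times `(1 - inner_lr * hessian)`.

```python
import random


def loss_grad_hess(w, xs, ys):
    """Mean squared error of y_hat = w * x, plus its first and second derivative in w."""
    n = len(xs)
    loss = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n
    grad = sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / n
    hess = sum(2 * x * x for x in xs) / n
    return loss, grad, hess


def sample_task(rng, slope, n=10):
    """Draw n noiseless points from the toy task y = slope * x (assumed setup)."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    ys = [slope * x for x in xs]
    return xs, ys


def maml_train(slopes, inner_lr=0.1, meta_lr=0.05, steps=500, seed=0):
    """Meta-learn an initial weight w over the pretraining tasks in `slopes`."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        meta_grad = 0.0
        for slope in slopes:
            sx, sy = sample_task(rng, slope)  # support set: used for the inner step
            qx, qy = sample_task(rng, slope)  # query set: used for the meta-objective
            _, g_support, h_support = loss_grad_hess(w, sx, sy)
            w_adapted = w - inner_lr * g_support  # one inner gradient step
            _, g_query, _ = loss_grad_hess(w_adapted, qx, qy)
            # Chain rule through the inner step: d(w_adapted)/dw = 1 - inner_lr * hessian
            meta_grad += g_query * (1.0 - inner_lr * h_support)
        w -= meta_lr * meta_grad / len(slopes)  # outer (meta) update
    return w


def adapt(w, xs, ys, inner_lr=0.1, steps=1):
    """Fine-tune the meta-learned initialization on data from a new task."""
    for _ in range(steps):
        _, g, _ = loss_grad_hess(w, xs, ys)
        w -= inner_lr * g
    return w
```

In this sketch, `maml_train` plays the role of pretraining on several source tasks, and `adapt` plays the role of fine-tuning on a small amount of target-task data. In a real ASR model the Hessian term would come from automatic differentiation through the inner update rather than from a closed form.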
