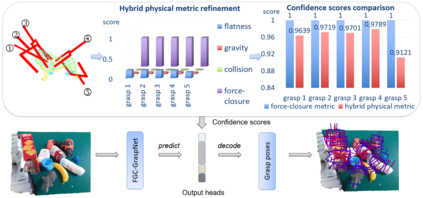

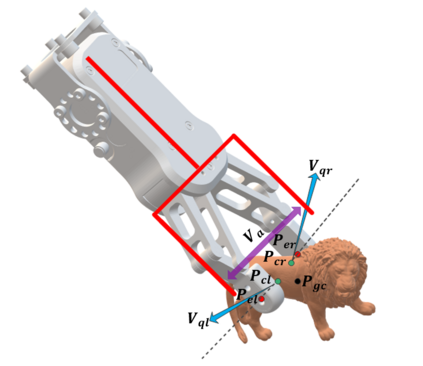

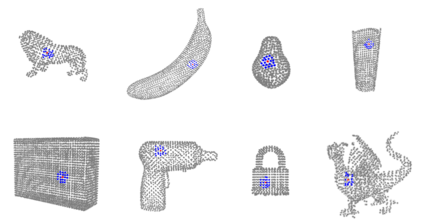

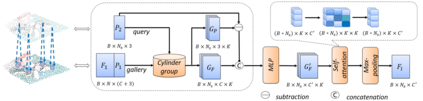

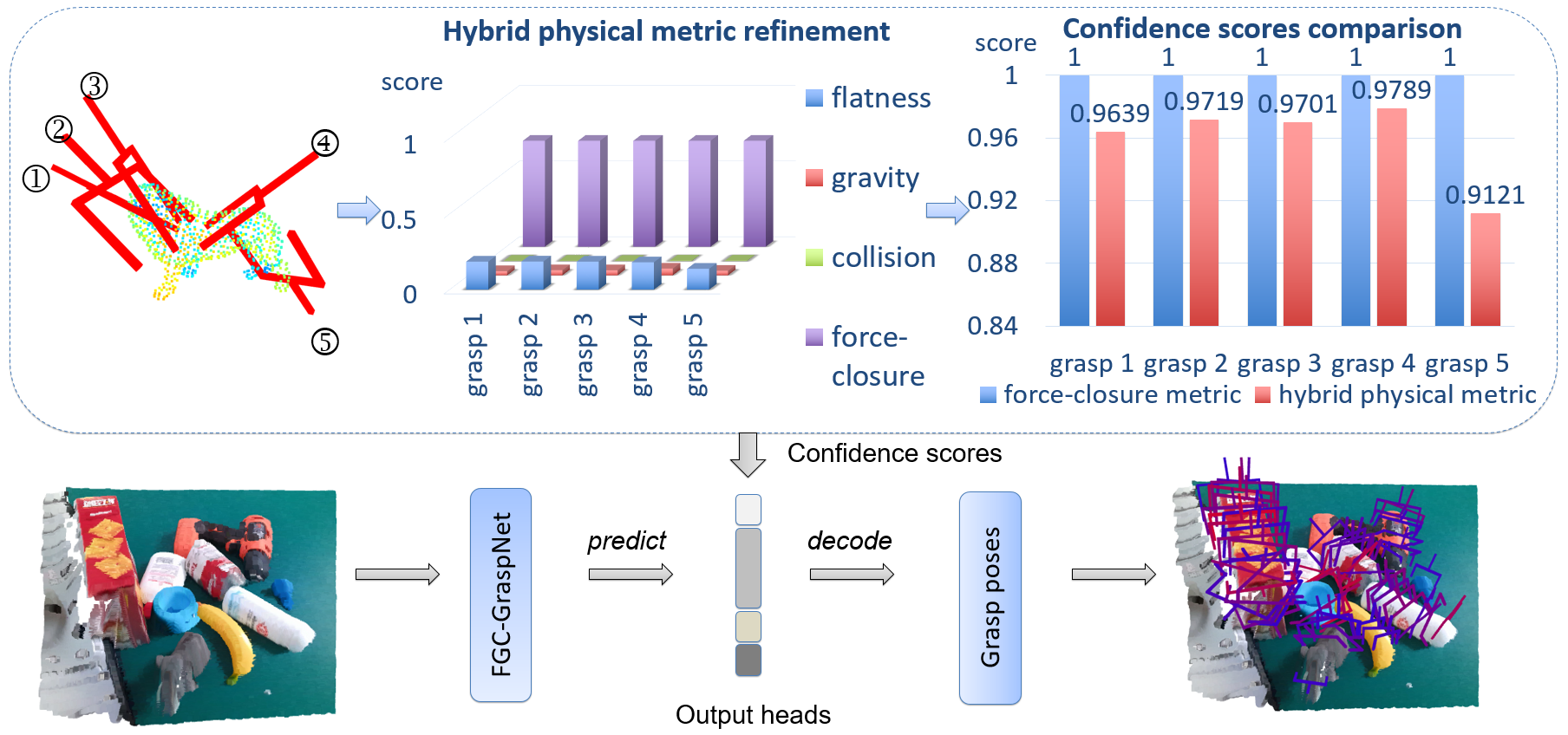

6-DoF grasp pose detection for multiple grasps and multiple objects is a challenging task in the field of intelligent robotics. To imitate the human ability to reason about grasping objects, data-driven methods are widely studied. With the introduction of large-scale datasets, we discover that a single physical metric usually generates only several discrete levels of grasp confidence scores, which cannot finely distinguish millions of grasp poses and leads to inaccurate prediction results. In this paper, we propose a hybrid physical metric to address this insufficiency in evaluation. First, we define a novel metric based on the force-closure metric, supplemented by measurements of object flatness, gravity, and collision. Second, we leverage this hybrid physical metric to generate fine-grained confidence scores. Third, to learn the new confidence scores effectively, we design a multi-resolution network called Flatness Gravity Collision GraspNet (FGC-GraspNet). FGC-GraspNet adopts a multi-resolution feature learning architecture for multiple tasks and introduces a new joint loss function that improves the average precision of grasp detection. Network evaluation and sufficient real-robot experiments demonstrate the effectiveness of our hybrid physical metric and FGC-GraspNet. Our method achieves a 90.5\% success rate in real-world cluttered scenes. Our code is available at https://github.com/luyh20/FGC-GraspNet.
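To make the motivation concrete, the following is a minimal sketch of why supplementing a discretized score with continuous terms yields finer-grained confidence values. It is not the formula used by FGC-GraspNet: the function `hybrid_score`, the weights, the ten force-closure levels, and the assumption that every term is normalized to [0, 1] with higher meaning better are all hypothetical choices made for illustration only.

```python
import random


def hybrid_score(force_closure, flatness, gravity, collision,
                 weights=(0.5, 0.2, 0.2, 0.1)):
    """Hypothetical weighted sum of four per-grasp terms.

    Each term is assumed to lie in [0, 1], with higher meaning better
    (for collision, read it as a clearance-style score). The weights are
    illustrative and are not taken from the paper.
    """
    w_fc, w_flat, w_grav, w_col = weights
    return (w_fc * force_closure + w_flat * flatness
            + w_grav * gravity + w_col * collision)


random.seed(0)

# Assumption: the single metric only takes a handful of discrete levels.
levels = [round(0.1 * k, 1) for k in range(1, 11)]

# Synthetic grasps: (force_closure, flatness, gravity, collision).
grasps = [
    (random.choice(levels), random.random(), random.random(), random.random())
    for _ in range(1000)
]

single_scores = [g[0] for g in grasps]
hybrid_scores = [hybrid_score(*g) for g in grasps]

print(len(set(single_scores)), "distinct single-metric scores")
print(len(set(hybrid_scores)), "distinct hybrid scores")
```

With only the discretized term, many grasps share the same score and cannot be ranked against each other; the continuous terms break those ties, which is the effect the hybrid metric is meant to achieve.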
