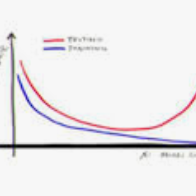

Regularization is commonly used to alleviate overfitting in machine learning. For convolutional neural networks (CNNs), regularization methods such as DropBlock and Shake-Shake have been shown to improve generalization performance. However, these methods cannot adapt themselves during training: the regularization strength follows a predefined schedule, and manual adjustment is required to suit different network architectures. In this paper, we propose a dynamic regularization method for CNNs. Specifically, we model the regularization strength as a function of the training loss. As the training loss changes, our method dynamically adjusts the regularization strength during training, thereby balancing underfitting and overfitting. With dynamic regularization, a large-scale model is automatically regularized with strong perturbation, while a small-scale model receives weak perturbation. Experimental results show that the proposed method can improve generalization on off-the-shelf network architectures and outperforms state-of-the-art regularization methods.
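The abstract does not state how the training loss is mapped to a regularization strength. The sketch below illustrates the general idea under a hypothetical linear rule that is not the paper's formula: the strength grows as the smoothed training loss falls below the first observed loss, so a model that fits the training data quickly (typically a larger one) ends up with stronger perturbation. The names `LossDrivenStrength`, `max_strength`, and `inverted_dropout` are illustrative assumptions, and plain inverted dropout stands in for the DropBlock or Shake-Shake perturbation.

```python
import random


class LossDrivenStrength:
    """Hypothetical scheduler mapping training loss to a regularization strength.

    Assumed rule (illustrative only): strength = max_strength * clip(1 - ema / ref, 0, 1),
    where ref is the first observed loss and ema is a moving average of the loss.
    """

    def __init__(self, max_strength: float = 0.3, momentum: float = 0.9):
        if not 0.0 <= max_strength < 1.0:
            raise ValueError("max_strength must be in [0, 1)")
        self.max_strength = max_strength
        self.momentum = momentum
        self.ref_loss = None  # first observed loss, treated as the "underfitting" reference
        self.ema_loss = None  # exponential moving average of the training loss

    def update(self, loss: float) -> float:
        """Feed one training-loss value and return the resulting strength."""
        if self.ema_loss is None:
            self.ref_loss = loss
            self.ema_loss = loss
        else:
            self.ema_loss = self.momentum * self.ema_loss + (1.0 - self.momentum) * loss
        # Fraction of the initial loss already eliminated, clipped to [0, 1].
        progress = 1.0 - self.ema_loss / self.ref_loss if self.ref_loss > 0 else 1.0
        progress = min(max(progress, 0.0), 1.0)
        return self.max_strength * progress


def inverted_dropout(xs, p, rng):
    """Zero each activation with probability p and rescale survivors by 1 / (1 - p)."""
    if p == 0.0:
        return list(xs)
    keep = 1.0 - p
    return [x / keep if rng.random() < keep else 0.0 for x in xs]


if __name__ == "__main__":
    rng = random.Random(0)
    sched = LossDrivenStrength(max_strength=0.3)
    for step in range(50):
        # Made-up loss curve decaying from 2.3 toward 0.1, for demonstration only.
        loss = 0.1 + 2.2 * 0.9 ** step
        p = sched.update(loss)
        if step % 10 == 0:
            acts = inverted_dropout([1.0] * 6, p, rng)
            print(f"step {step:2d}  loss {loss:.3f}  drop_p {p:.3f}  acts {acts}")
```

Smoothing the loss with a moving average keeps the strength from jumping with every noisy mini-batch, and clipping keeps it at zero whenever the loss rises above its starting value, which corresponds to the underfitting side of the balance described above.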
