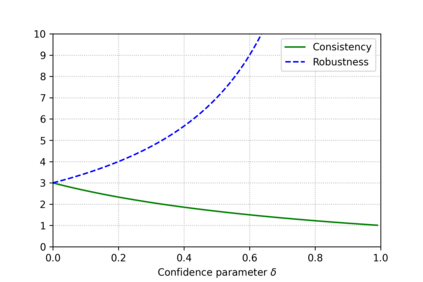

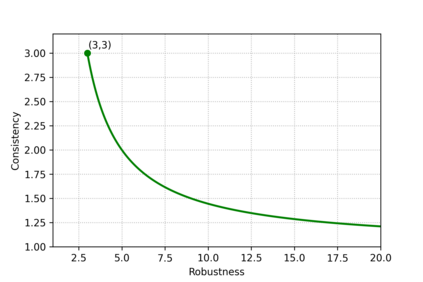

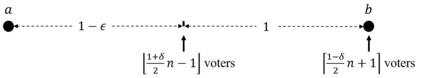

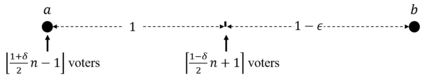

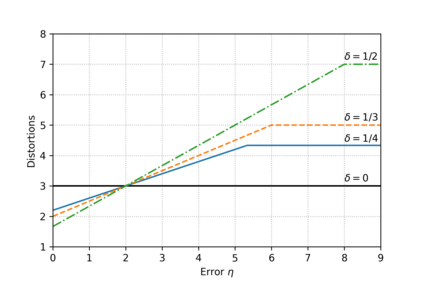

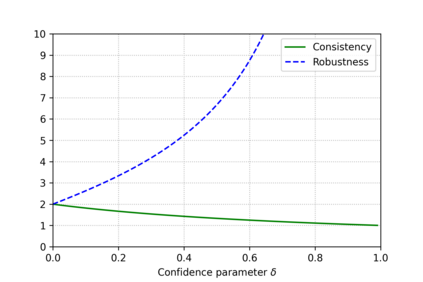

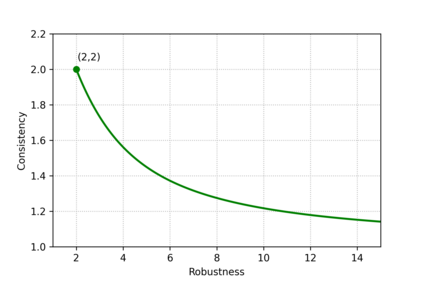

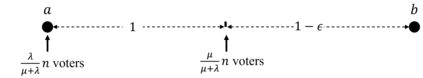

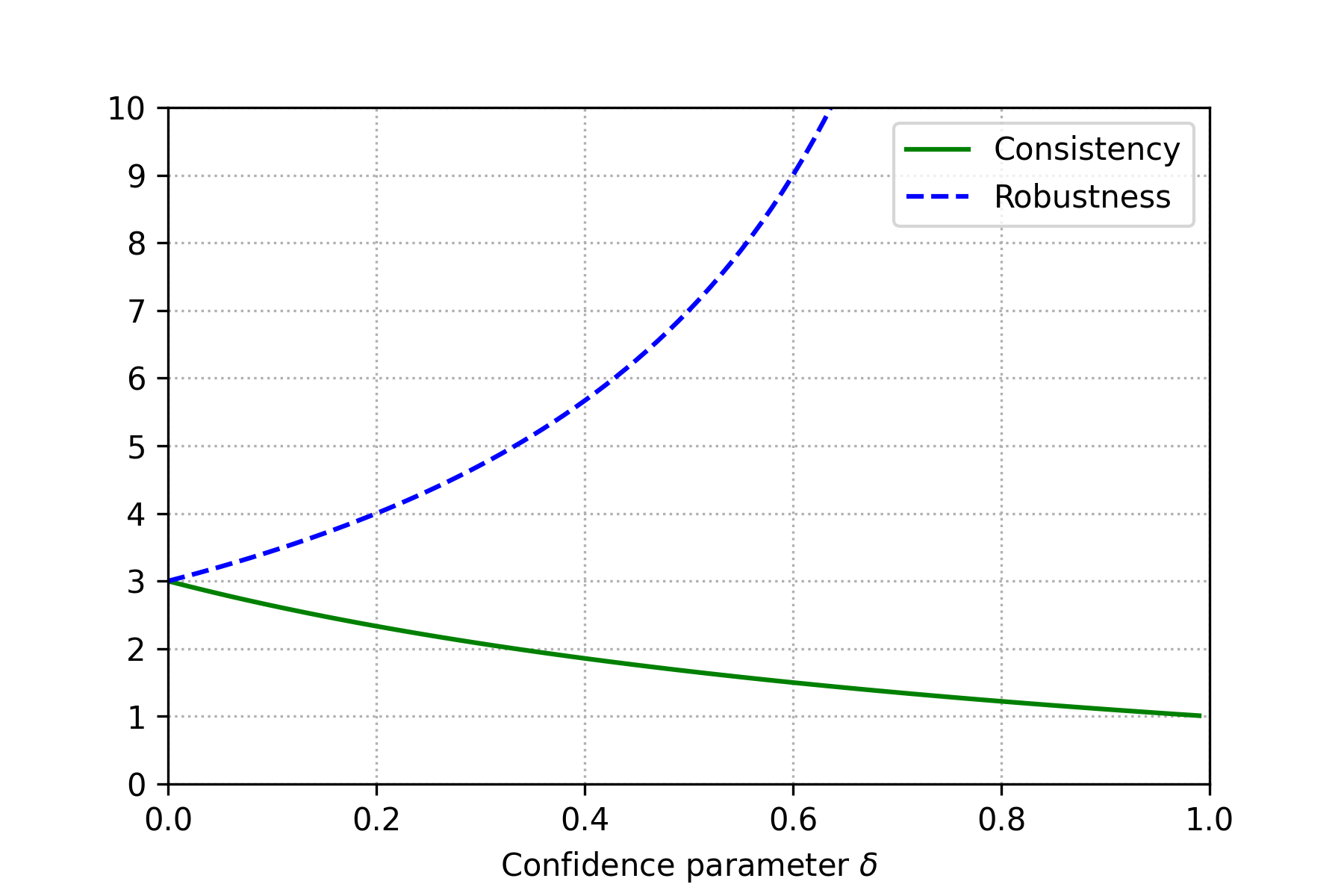

In the metric distortion problem there is a set of candidates $C$ and voters $V$ in the same metric space. The goal is to select a candidate minimizing the social cost: the sum of distances of the selected candidate from all the voters, and the challenge arises from the algorithm receiving only ordinaL input: each voter's ranking of candidate, while the objective function is cardinal, determined by the underlying metric. The distortion of an algorithm is its worst-case approximation factor of the optimal social cost. A key concept here is the (p,q)-veto core, with $p\in \Delta(V)$ and $q\in \Delta(C)$ being normalized weight vectors representing voters' veto power and candidates' support, respectively. The (p,q)-veto core corresponds to a set of winners from a specific class of deterministic algorithms. Notably, the optimal distortion of $3$ is obtained from this class, by selecting veto core candidates using uniform $p$ and $q$ proportional to candidates' plurality scores. Bounding the distortion of other algorithms from this class is an open problem. Our contribution is twofold. First, we establish upper bounds on the distortion of candidates from the (p,q)-veto core for arbitrary weight vectors $p$ and $q$. Second, we revisit the metric distortion problem through the \emph{learning-augmented} framework, which equips the algorithm with a (machine-learned) prediction regarding the optimal candidate. The quality of this prediction is unknown, and the goal is to optimize the algorithm's performance under accurate predictions (consistency), while simultaneously providing worst-case guarantees under arbitrarily inaccurate predictions (robustness). We propose an algorithm that chooses candidates from the (p,q)-veto core, using a prediction-guided q vector and, leveraging our distortion bounds, we prove that this algorithm achieves the optimal robustness-consistency trade-off.

翻译:暂无翻译