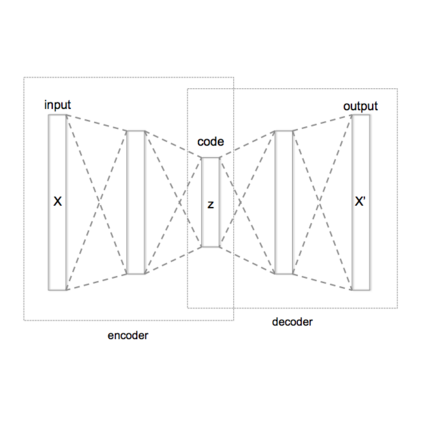

We address the problem of generating diverse 3D human motions from textual descriptions. This challenging task requires joint modeling of both modalities: understanding and extracting useful human-centric information from the text, and then generating plausible and realistic sequences of human poses. In contrast to most previous work, which focuses on generating a single, deterministic motion from a textual description, we design a variational approach that can produce multiple diverse human motions. We propose TEMOS, a text-conditioned generative model leveraging variational autoencoder (VAE) training with human motion data, in combination with a text encoder that produces distribution parameters compatible with the VAE latent space. We show that the TEMOS framework can produce both skeleton-based animations, as in prior work, and more expressive SMPL body motions. We evaluate our approach on the KIT Motion-Language benchmark and, despite being relatively straightforward, demonstrate significant improvements over the state of the art. Code and models are available on our webpage.
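
As a rough illustration of the variational idea described above, the following sketch shows how one set of text-conditioned distribution parameters can yield several distinct latent codes, and how closely two encoders' distributions agree. It is not the TEMOS implementation: the diagonal-Gaussian form, the KL divergence as a compatibility measure, the function names `sample_latent` and `kl_diag_gaussians`, and all numeric values are assumptions made for this example.

```python
import math
import random


def sample_latent(mu, logvar, rng):
    """Reparameterized draw z = mu + sigma * eps from a diagonal Gaussian."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, logvar)]


def kl_diag_gaussians(mu_p, logvar_p, mu_q, logvar_q):
    """KL(p || q) between two diagonal Gaussians, summed over dimensions."""
    total = 0.0
    for mp, lp, mq, lq in zip(mu_p, logvar_p, mu_q, logvar_q):
        total += 0.5 * (lq - lp
                        + (math.exp(lp) + (mp - mq) ** 2) / math.exp(lq)
                        - 1.0)
    return total


# Hypothetical distribution parameters (assumed values). In a trained model
# these would be produced by a text encoder and a motion encoder.
text_mu, text_logvar = [0.2, -0.1, 0.5], [-1.0, -0.5, -2.0]
motion_mu, motion_logvar = [0.25, -0.05, 0.4], [-1.1, -0.6, -1.8]

rng = random.Random(0)

# Several draws from the same text distribution give different latent codes;
# decoding each one would give a different motion for the same description.
latents = [sample_latent(text_mu, text_logvar, rng) for _ in range(3)]

# A small KL value means the text distribution lies close to the motion one.
kl = kl_diag_gaussians(text_mu, text_logvar, motion_mu, motion_logvar)
```

Each entry of `latents` stands in for one candidate motion. A deterministic model would instead map the description to a single code and therefore a single motion.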
