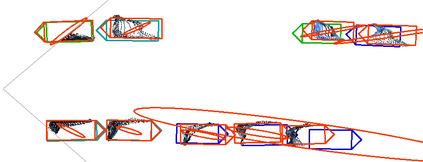

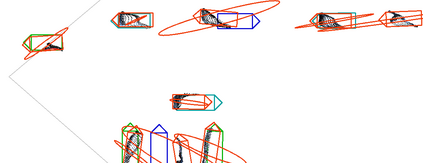

Object localization in 3D space is a challenging aspect of monocular 3D object detection. Recent advances in 6DoF pose estimation have shown that predicting dense 2D-3D correspondence maps between the image and the object's 3D model, and then estimating the object pose via the Perspective-n-Point (PnP) algorithm, can achieve remarkable localization accuracy. Yet these methods rely on training with ground-truth object geometry, which is difficult to acquire in real outdoor scenes. To address this issue, we propose MonoRUn, a novel detection framework that learns dense correspondences and geometry in a self-supervised manner, requiring only simple 3D bounding box annotations. To regress the pixel-related 3D object coordinates, we employ a regional reconstruction network with uncertainty awareness. For self-supervised training, the predicted 3D coordinates are projected back to the image plane, and a Robust KL loss is proposed to minimize the uncertainty-weighted reprojection error. During the testing phase, we exploit the network uncertainty by propagating it through all downstream modules. More specifically, an uncertainty-driven PnP algorithm is leveraged to estimate the object pose and its covariance. Extensive experiments demonstrate that our proposed approach outperforms current state-of-the-art methods on the KITTI benchmark.
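The self-supervised signal described above (project predicted 3D object coordinates back to the image and penalize the uncertainty-weighted reprojection error) can be illustrated with a minimal sketch. It assumes a plain pinhole camera with intrinsics `K` (zero skew), an object pose given as rotation `R` and translation `t`, and a per-pixel predicted standard deviation `sigma` for each image axis. The loss shown is an ordinary Gaussian negative log-likelihood, not the paper's Robust KL loss, whose exact form is not given here; the function names `project` and `weighted_reprojection_nll` are hypothetical.

```python
import math


def project(K, R, t, X):
    """Project a 3D point X (object frame) to pixel coordinates (u, v).

    Assumes a pinhole camera with zero skew: the point is first moved into the
    camera frame as Xc = R @ X + t, then divided by its depth.
    """
    Xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    if Xc[2] <= 0:
        raise ValueError("point lies behind the camera")
    u = K[0][0] * Xc[0] / Xc[2] + K[0][2]
    v = K[1][1] * Xc[1] / Xc[2] + K[1][2]
    return u, v


def weighted_reprojection_nll(K, R, t, obj_coords, pixels, sigmas):
    """Mean Gaussian NLL of reprojection residuals (constant terms dropped).

    For each point and each image axis the cost is
        0.5 * (residual / sigma)**2 + log(sigma),
    so a large predicted sigma down-weights a residual but pays a log penalty,
    which stops the network from declaring every point uncertain.
    """
    total = 0.0
    for X, (u_obs, v_obs), (su, sv) in zip(obj_coords, pixels, sigmas):
        u, v = project(K, R, t, X)
        total += 0.5 * ((u - u_obs) / su) ** 2 + math.log(su)
        total += 0.5 * ((v - v_obs) / sv) ** 2 + math.log(sv)
    return total / len(obj_coords)


if __name__ == "__main__":
    # Hypothetical camera and pose, for illustration only.
    K = [[700.0, 0.0, 600.0], [0.0, 700.0, 180.0], [0.0, 0.0, 1.0]]
    R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    t = [0.0, 0.0, 10.0]
    coords = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
    observed = [(602.0, 180.0), (670.0, 180.0)]  # first point is off by 2 px in u
    print(weighted_reprojection_nll(K, R, t, coords, observed, [(1.0, 1.0), (1.0, 1.0)]))
    print(weighted_reprojection_nll(K, R, t, coords, observed, [(2.0, 2.0), (1.0, 1.0)]))
```

With the pose held fixed, gradients of this loss would flow into both the predicted coordinates and the predicted sigmas. Conversely, an uncertainty-weighted PnP in the same spirit would hold the coordinates and sigmas fixed and minimize the squared-residual term over `R` and `t`; that optimization step is not shown here.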
