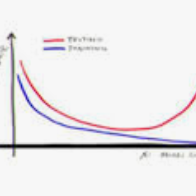

In this paper, we study the generalization performance of overparameterized 3-layer neural tangent kernel (NTK) models. We show that, for a specific set of ground-truth functions (which we refer to as the "learnable set"), the test error of the overfitted 3-layer NTK is upper bounded by an expression that decreases with the number of neurons in each of the two hidden layers. Unlike the 2-layer NTK, which has only one hidden layer, the 3-layer NTK involves interactions between its two hidden layers. Our upper bound reveals that the test error decreases faster with the number of neurons in the second hidden layer (the one closer to the output) than with the number of neurons in the first hidden layer (the one closer to the input). We also show that the learnable set of the 3-layer NTK without bias is no smaller than that of 2-layer NTK models with various choices of bias in the neurons. However, in terms of actual generalization performance, our results suggest that the 3-layer NTK is much less sensitive to the choice of bias than the 2-layer NTK, especially when the input dimension is large.
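To make "overfitted 3-layer NTK model" concrete, the sketch below builds the NTK features of a finite-width 3-layer network (the gradient of the output with respect to the hidden-layer weights at random initialization) and fits the minimum-norm solution that interpolates the training data exactly. Every modeling choice here is an assumption made for illustration, not the paper's exact setup: ReLU activations, no bias, 1/sqrt(width) scaling, fixed random ±1 output weights, gradients taken only with respect to the two hidden weight matrices `W1` (width `p1`) and `W2` (width `p2`), inputs on the unit sphere, and the initial network output dropped from the predictor. The helper names (`ntk_features`, `overfitted_ntk_test_mse`, `target`) and the ground-truth function are hypothetical, and `target` is not claimed to lie in the paper's learnable set.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def ntk_features(X, W1, W2, v):
    """Gradient of f(x) = v . relu(W2 relu(W1 x) / sqrt(p1)) / sqrt(p2)
    with respect to (W1, W2), evaluated at the given (random) weights.
    Assumed architecture: ReLU, no bias, fixed output weights v."""
    n = X.shape[0]
    p2, p1 = W2.shape
    pre1 = X @ W1.T                                   # (n, p1) first-layer pre-activations
    h1 = relu(pre1) / np.sqrt(p1)                     # (n, p1) first hidden layer
    z2 = h1 @ W2.T                                    # (n, p2) second-layer pre-activations
    g = (z2 > 0) * v / np.sqrt(p2)                    # (n, p2) df/dz2
    grad_W2 = g[:, :, None] * h1[:, None, :]          # (n, p2, p1) df/dW2
    back = (g @ W2) * (pre1 > 0) / np.sqrt(p1)        # (n, p1) df/dpre1
    grad_W1 = back[:, :, None] * X[:, None, :]        # (n, p1, d) df/dW1
    return np.concatenate([grad_W1.reshape(n, -1), grad_W2.reshape(n, -1)], axis=1)

def sample_sphere(n, d, rng):
    # Inputs drawn uniformly from the unit sphere in R^d (an assumption).
    X = rng.standard_normal((n, d))
    return X / np.linalg.norm(X, axis=1, keepdims=True)

def target(X):
    # Hypothetical ground-truth function, chosen only for illustration.
    return X[:, 0] * X[:, 1] + 0.5 * X[:, 2]

def overfitted_ntk_test_mse(p1, p2, d=5, n_train=50, n_test=500, seed=0):
    rng = np.random.default_rng(seed)
    W1 = rng.standard_normal((p1, d))
    W2 = rng.standard_normal((p2, p1))
    v = rng.choice([-1.0, 1.0], size=p2)
    X_tr, X_te = sample_sphere(n_train, d, rng), sample_sphere(n_test, d, rng)
    Phi_tr = ntk_features(X_tr, W1, W2, v)
    # Phi_tr has many more columns than rows, so lstsq returns the
    # minimum-norm solution, which interpolates the training labels.
    theta, *_ = np.linalg.lstsq(Phi_tr, target(X_tr), rcond=None)
    train_mse = np.mean((Phi_tr @ theta - target(X_tr)) ** 2)
    test_mse = np.mean((ntk_features(X_te, W1, W2, v) @ theta - target(X_te)) ** 2)
    return train_mse, test_mse
```

Calling `overfitted_ntk_test_mse` over a grid of `(p1, p2)` pairs gives a rough way to probe how the test error of the interpolating model varies with each hidden-layer width. This toy setup is not claimed to reproduce the paper's bound or its constants; it only shows what the overfitted (zero training error, minimum-norm) NTK solution looks like in code.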
