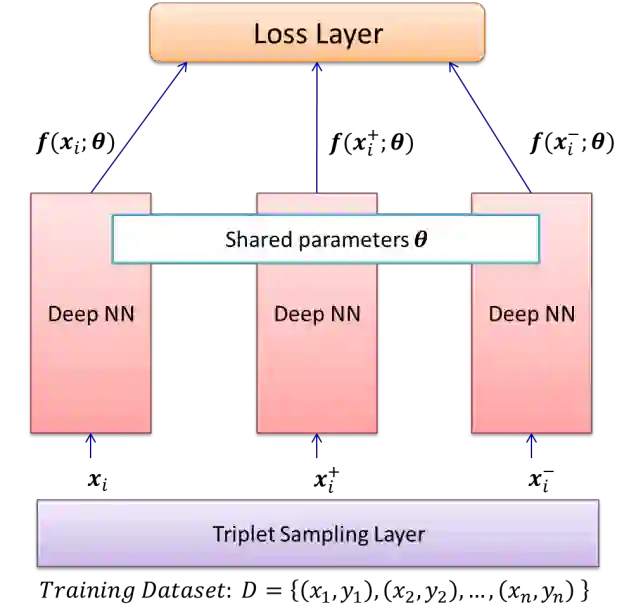

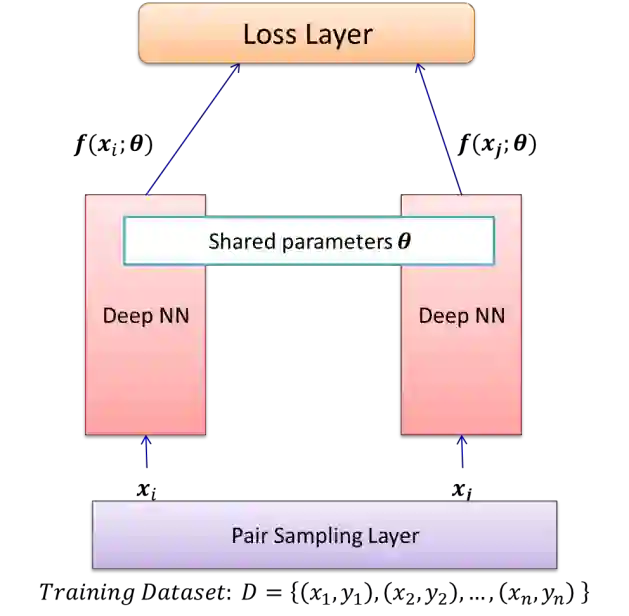

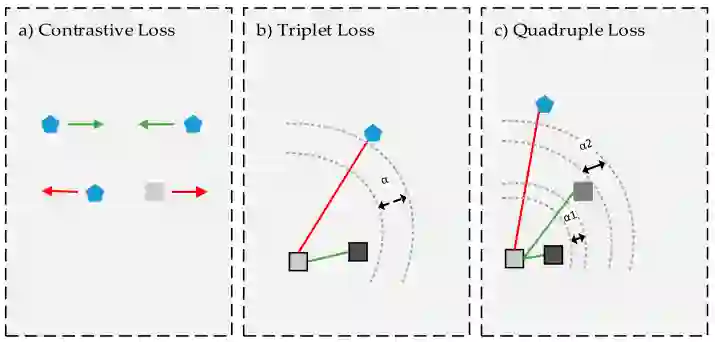

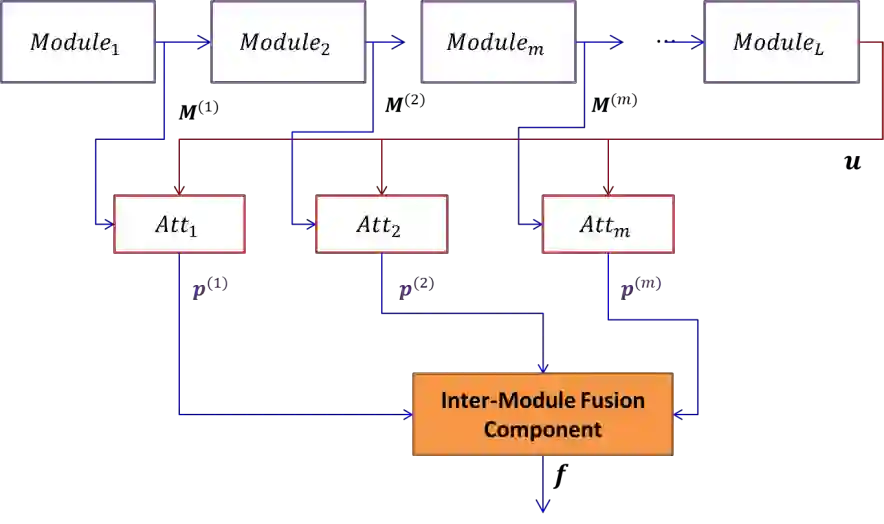

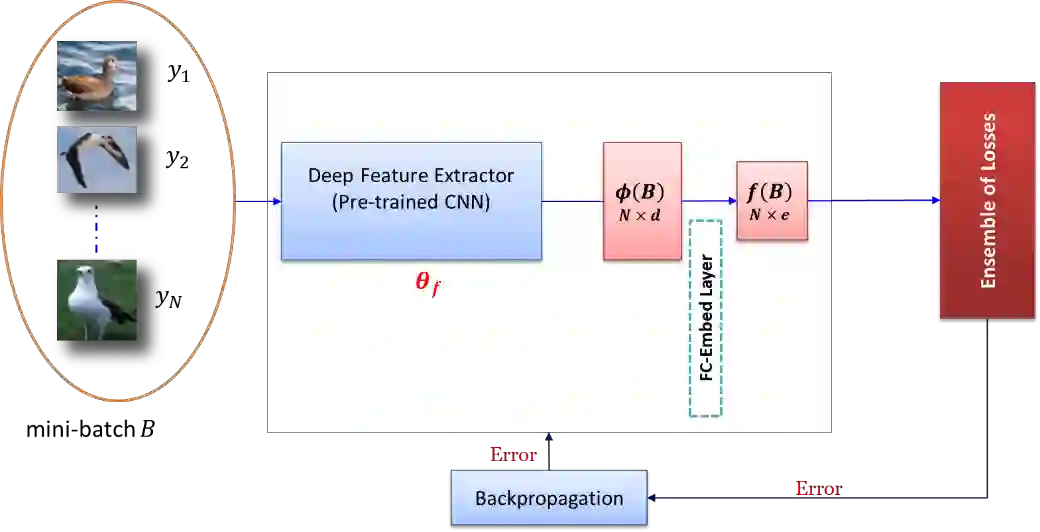

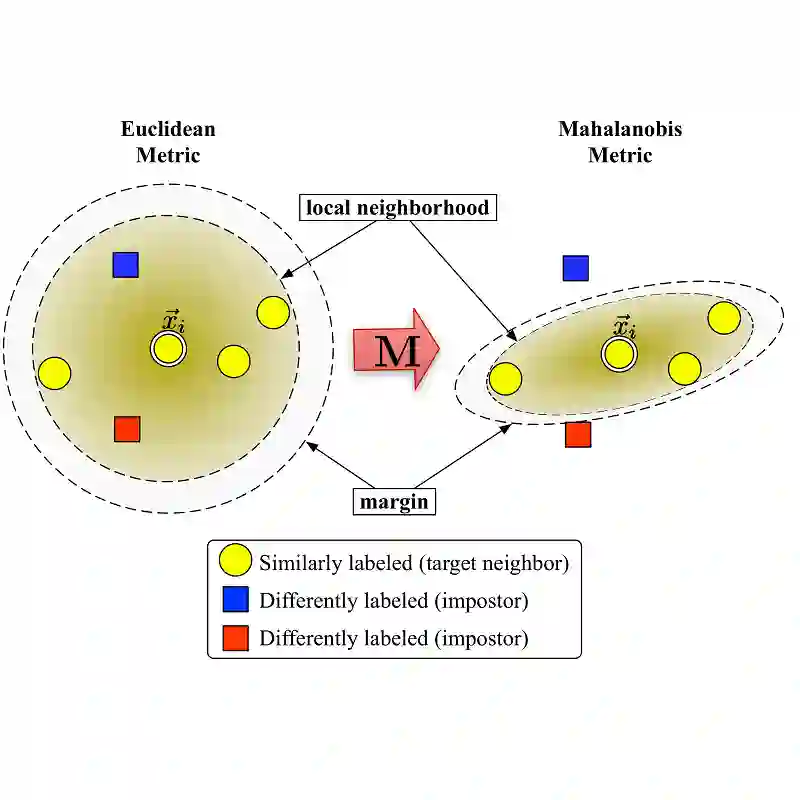

Deep Metric Learning (DML) learns a non-linear semantic embedding from input data that brings similar pairs together while keeping dissimilar data apart. To this end, many different methods have been proposed over the last decade, with promising results in various applications. The success of a DML algorithm depends greatly on its loss function. However, no loss function is perfect; each addresses only some aspects of an optimal similarity embedding. Moreover, the generalizability of DML to categories unseen until the test stage is an important matter that existing loss functions do not consider. To address these challenges, we propose novel approaches for combining different losses built on top of a shared deep feature extractor. The proposed ensemble of losses forces the deep model to extract features that are consistent with all of the losses. Since the selected losses are diverse and each emphasizes different aspects of an optimal semantic embedding, our combining methods yield a considerable improvement over any individual loss and generalize well to unseen categories. There is no restriction on the choice of loss functions, and our methods can work with any set of existing ones. In addition, they optimize each loss function together with its weight in an end-to-end paradigm, with no need to tune any hyper-parameter. We evaluate our methods on several popular datasets from the machine vision domain in conventional Zero-Shot Learning (ZSL) settings. The results are very encouraging and show that our methods outperform all baseline losses by a large margin on all datasets.
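The idea of several metric-learning losses sharing one embedding, each with its own trainable weight, can be illustrated with a small sketch. The abstract does not specify how the losses are combined, so everything below is an assumption made for illustration: the choice of contrastive and triplet losses, the margins, the softmax over weight logits, and all function names (`contrastive_loss`, `triplet_loss`, `combined_loss`) are hypothetical and are not taken from the paper.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(anchor, other, same_class, margin=1.0):
    """Pull same-class pairs together; push other pairs at least `margin` apart."""
    d = euclidean(anchor, other)
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Require the positive to be closer to the anchor than the negative by `margin`."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


def softmax(logits):
    """Numerically stable softmax; turns free logits into positive weights summing to 1."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def combined_loss(loss_values, weight_logits):
    """Weighted sum of individual losses (assumed scheme, not the paper's).

    In a real model, `weight_logits` would be trainable parameters updated by
    the same optimizer as the shared feature extractor.
    """
    weights = softmax(weight_logits)
    return sum(w * l for w, l in zip(weights, loss_values))


# Toy embeddings standing in for the output of a shared feature extractor.
anchor = [0.0, 0.0]
positive = [0.3, 0.4]
negative = [0.3, 0.0]

losses = [
    contrastive_loss(anchor, positive, same_class=True)
    + contrastive_loss(anchor, negative, same_class=False),
    triplet_loss(anchor, positive, negative),
]
total = combined_loss(losses, weight_logits=[0.0, 0.0])  # equal weights
print(losses, total)
```

With equal logits the combination reduces to the plain average of the losses. Note that a softmax-weighted sum trained naively tends to shift weight toward whichever loss is smallest, so a practical scheme needs some mechanism to prevent that collapse; the sketch makes no claim about how the paper handles it.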
