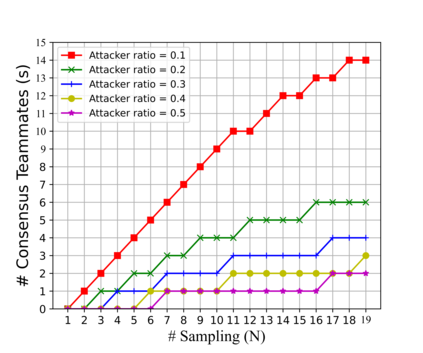

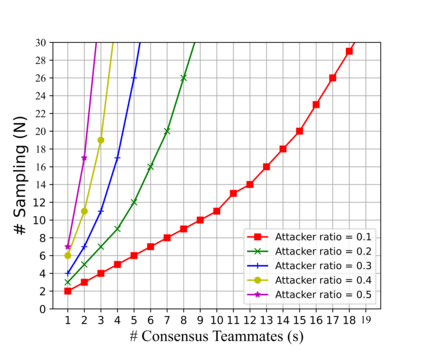

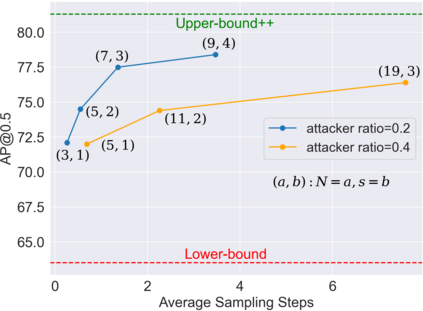

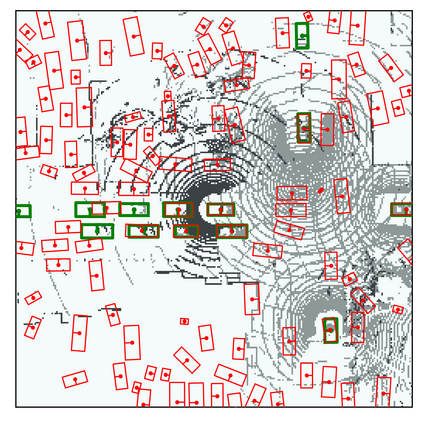

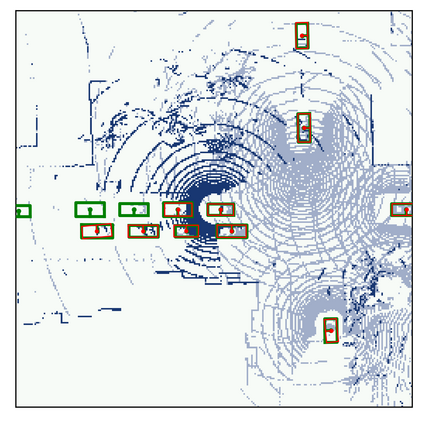

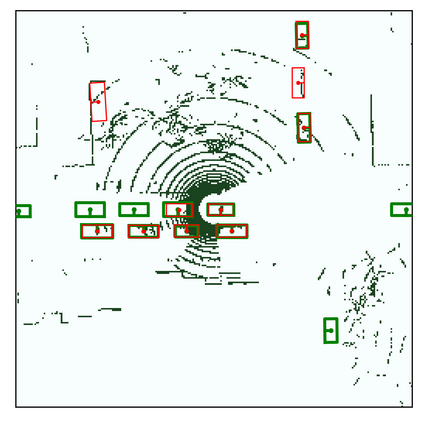

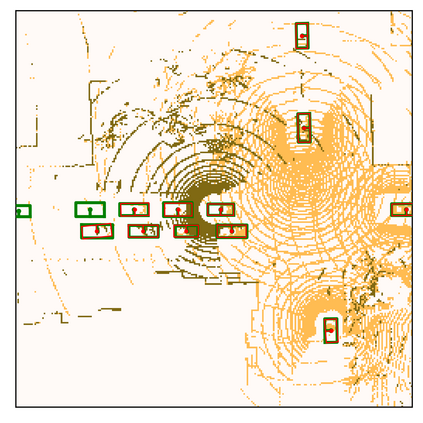

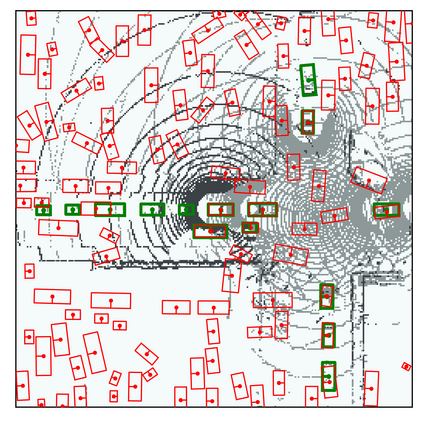

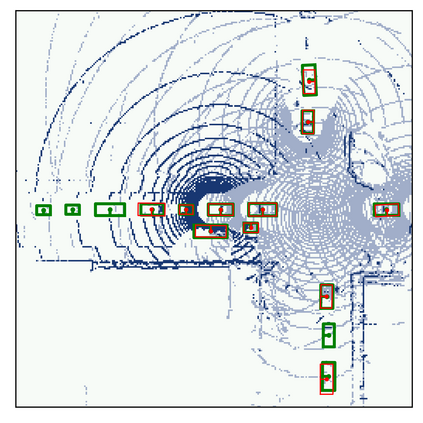

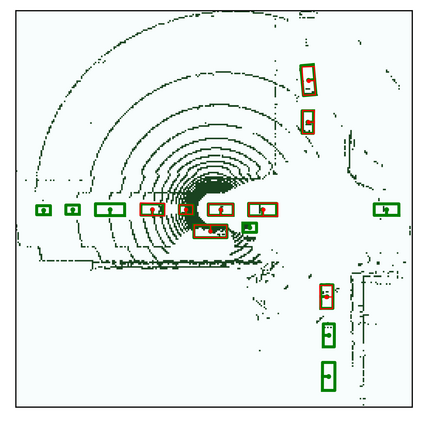

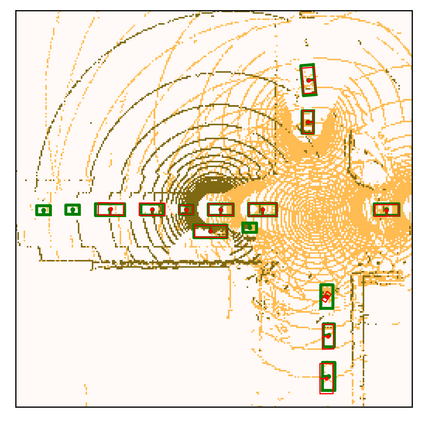

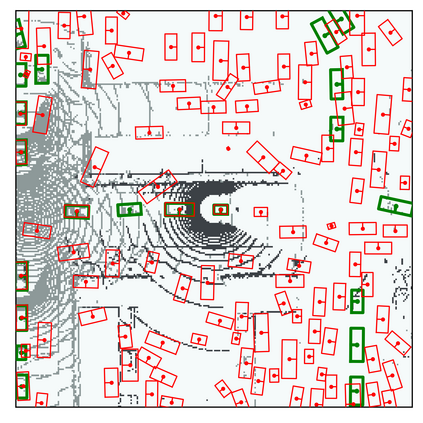

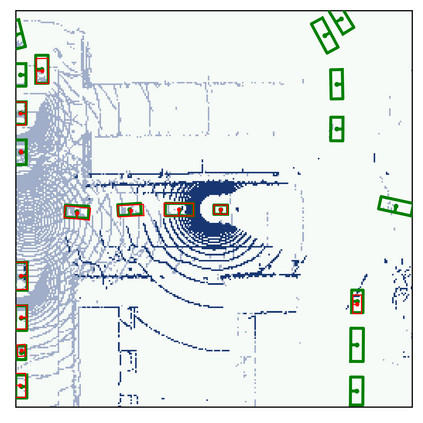

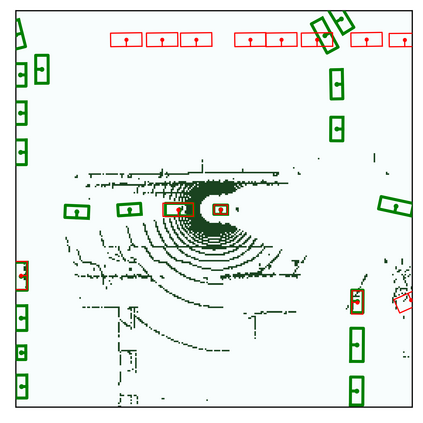

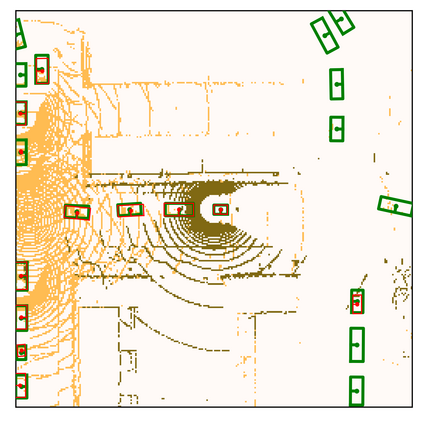

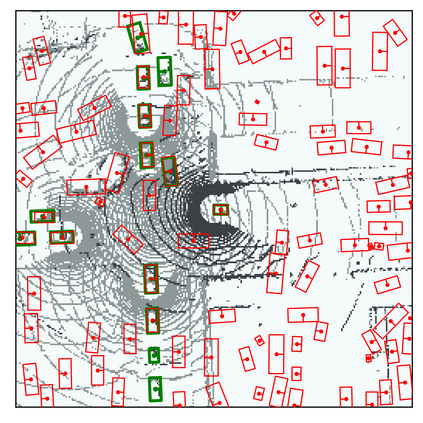

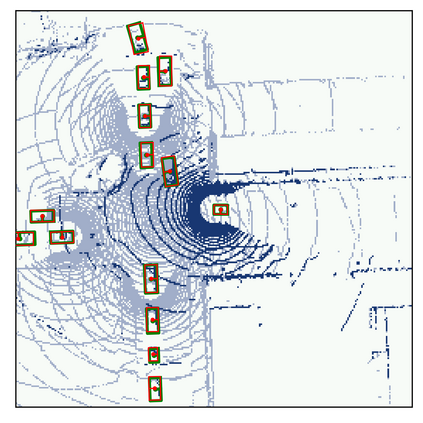

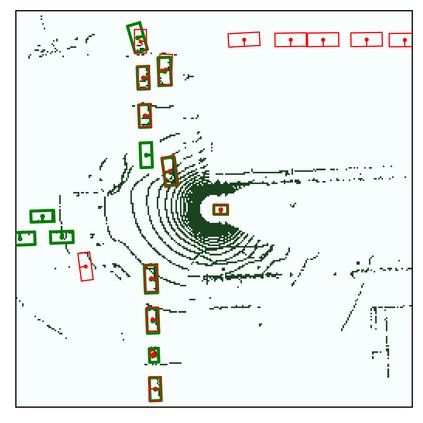

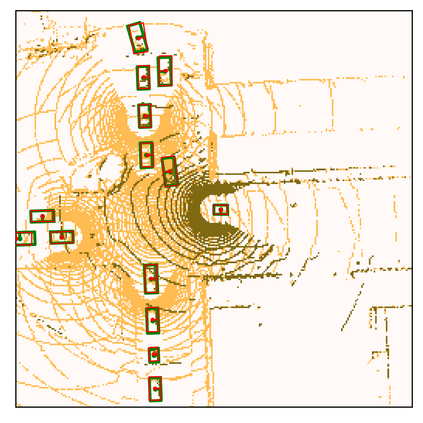

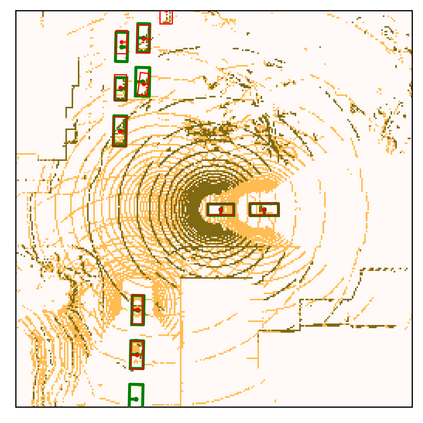

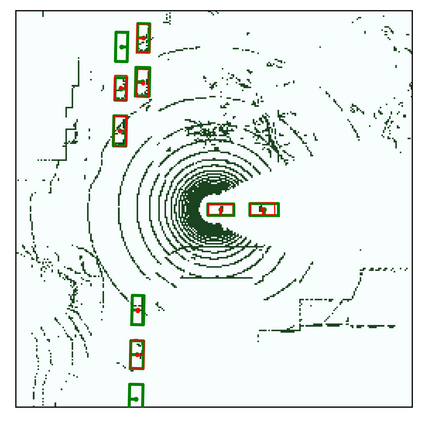

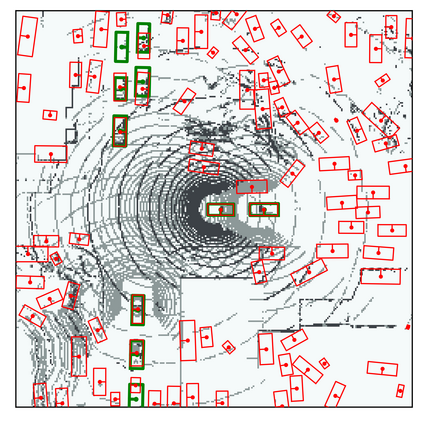

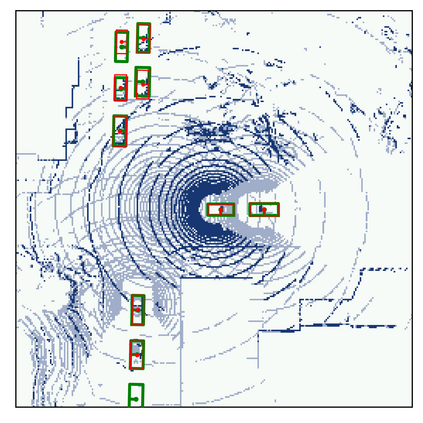

Multiple robots can perceive a scene (e.g., detect objects) collaboratively better than individuals, but they are easily compromised by adversarial attacks when using deep learning. Adversarial defense could address this, but its training requires knowledge of the attacking mechanism, which is often unknown. Instead, we propose ROBOSAC, a novel sampling-based defense strategy that generalizes to unseen attackers. Our key idea is that collaborative perception should lead to consensus rather than dissensus with the results of individual perception. This leads to our hypothesize-and-verify framework: perception results with and without collaboration from a random subset of teammates are compared until a consensus is reached. In such a framework, more teammates in the sampled subset often entail better perception performance but require a longer sampling time to reject potential attackers. Thus, we derive how many sampling trials are needed to ensure an attacker-free subset of the desired size, or equivalently, the maximum size of such a subset that can be successfully sampled within a given number of trials. We validate our method on the task of collaborative 3D object detection in autonomous driving scenarios.

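The abstract above comes from the paper "Among Us: Adversarially Robust Collaborative Perception by Consensus" (Chinese title: 《谁是卧底:一种通过一致达成来抵御对抗攻击的协同感知》). To restate it: when deep learning is used for collaborative scene perception (for example, object detection), several robots perceive better together than any one of them alone, but they become vulnerable to adversarial attacks. Adversarial defense can counter such attacks, but it requires knowing the attack mechanism, which is usually unknown. The authors therefore propose ROBOSAC, a new sampling-based defense strategy that generalizes to unseen attackers. The key idea is that collaborative perception should produce results that agree with individual perception rather than contradict it. This gives a "hypothesize-and-verify" framework: perception results obtained with and without collaboration from a random subset of teammates are compared until they reach consensus. In this framework, more teammates usually mean better perception performance but also a longer sampling time to reject possible attackers. The authors therefore derive how many sampling trials are needed to guarantee an attacker-free subset, or equivalently the largest such subset that can be sampled successfully within a given number of trials. The method is validated on 3D object detection in autonomous driving scenarios.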
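The trade-off between subset size and number of sampling trials can be made concrete with a short sketch. The abstract does not give the formula, so what follows is a RANSAC-style estimate under stated assumptions, not the paper's exact derivation. It assumes subsets are drawn uniformly without replacement and that the number of attackers is known or upper-bounded. All function names, the `agrees` callback, and the default `success_prob` of 0.99 are hypothetical. If a random subset of size $s$ is attacker-free with probability $q$, then $N$ independent trials produce at least one clean subset with probability $1-(1-q)^N$, so $N \ge \ln(1-p)/\ln(1-q)$ trials are enough for a target probability $p$.

```python
import math
import random
from typing import Callable, List, Optional, Sequence


def clean_sample_probability(n_teammates: int, n_attackers: int, subset_size: int) -> float:
    """Probability that a uniformly drawn subset (without replacement) has no attacker."""
    benign = n_teammates - n_attackers
    if subset_size > benign:
        return 0.0  # not enough benign teammates to fill the subset
    return math.comb(benign, subset_size) / math.comb(n_teammates, subset_size)


def trials_needed(n_teammates: int, n_attackers: int, subset_size: int,
                  success_prob: float = 0.99) -> Optional[int]:
    """Trials needed so that at least one sampled subset is attacker-free
    with probability >= success_prob. Returns None if that is impossible."""
    q = clean_sample_probability(n_teammates, n_attackers, subset_size)
    if q == 0.0:
        return None
    if q == 1.0:
        return 1
    return math.ceil(math.log(1.0 - success_prob) / math.log(1.0 - q))


def max_subset_size(n_teammates: int, n_attackers: int, trial_budget: int,
                    success_prob: float = 0.99) -> int:
    """Largest subset size whose required trial count fits within trial_budget."""
    best = 0
    for size in range(1, n_teammates - n_attackers + 1):
        needed = trials_needed(n_teammates, n_attackers, size, success_prob)
        if needed is not None and needed <= trial_budget:
            best = size
    return best


def sample_consensus_subset(teammates: Sequence[str], subset_size: int,
                            agrees: Callable[[List[str]], bool],
                            max_trials: int,
                            rng: random.Random) -> Optional[List[str]]:
    """Hypothesize-and-verify loop: draw random subsets until one passes the check.

    `agrees` is a hypothetical callback standing in for the comparison of
    perception results with and without collaboration from the subset.
    """
    for _ in range(max_trials):
        subset = rng.sample(list(teammates), subset_size)  # hypothesize
        if agrees(subset):                                 # verify
            return subset
    return None  # no consensus within the budget; caller falls back to ego-only


if __name__ == "__main__":
    # Toy setting: 5 teammates, 1 of which is an attacker.
    for size in range(1, 5):
        print(size, trials_needed(5, 1, size))
    print("largest subset within 10 trials:", max_subset_size(5, 1, trial_budget=10))
```

In this toy setting (five teammates, one attacker, 99% target), the required trial count grows from 3 for a single teammate to 21 for a subset of four. This matches the abstract's observation that larger subsets take longer to sample cleanly.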