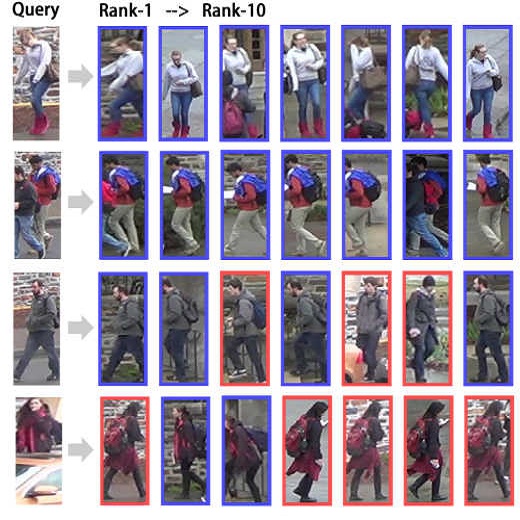

Attention mechanisms are widely used in deep learning because they improve neural network performance without requiring additional information. However, in unsupervised person re-identification, attention modules such as multi-headed self-attention suffer from attention spreading because no ground-truth labels are available. To solve this problem, we design a pixel-level attention module that provides constraints for multi-headed self-attention. Meanwhile, because the identification targets in person re-identification data are all pedestrians, we design a domain-level attention module to provide more comprehensive pedestrian features. We combine head-level, pixel-level, and domain-level attention into a multi-level attention block and validate its performance on four large person re-identification datasets (Market-1501, DukeMTMC-reID, MSMT17, and PersonX).
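
The abstract does not say how the pixel-level module constrains self-attention, so the following is only a toy illustration of the general idea, not the authors' method. It assumes that "attention spreading" means a nearly uniform attention distribution, and it uses a hypothetical per-pixel gate (the `pixel_gate` values and the `gate_attention` helper are invented for this sketch) to reweight one attention row and renormalize it. Entropy serves as a rough measure of how spread out the attention is.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; higher means more spread-out attention."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def gate_attention(attn, pixel_gate):
    """Hypothetical constraint: scale each weight by a per-pixel gate, then renormalize."""
    weighted = [a * g for a, g in zip(attn, pixel_gate)]
    total = sum(weighted)
    return [w / total for w in weighted]

# Nearly flat scores: without supervision, attention spreads over all 6 positions.
scores = [0.2, 0.1, 0.3, 0.2, 0.1, 0.2]
attn = softmax(scores)

# Assumed gate values: high on positions 2 and 3 (taken to be the pedestrian), low elsewhere.
pixel_gate = [0.1, 0.1, 0.9, 0.9, 0.1, 0.1]
constrained = gate_attention(attn, pixel_gate)

print("entropy before gating:", round(entropy(attn), 3))
print("entropy after gating: ", round(entropy(constrained), 3))
```

In this example the gated distribution concentrates on the two high-gate positions, so its entropy is lower than that of the ungated row. In a real model the gate would be learned rather than fixed by hand.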
