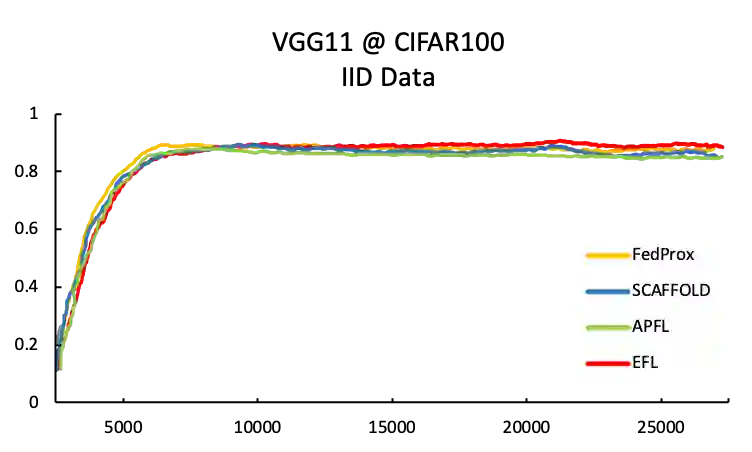

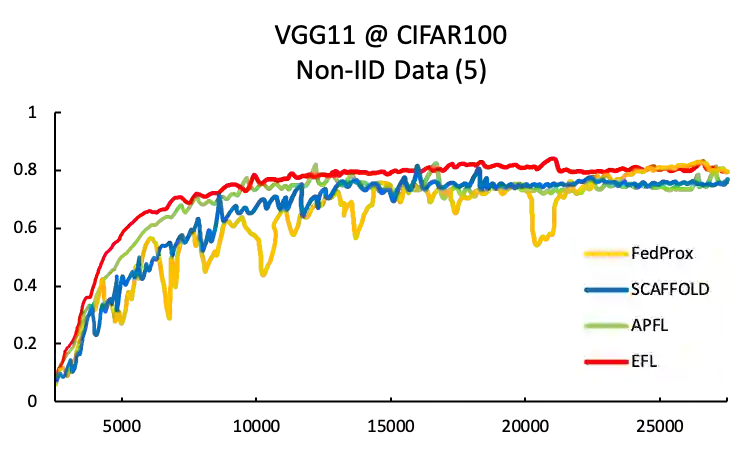

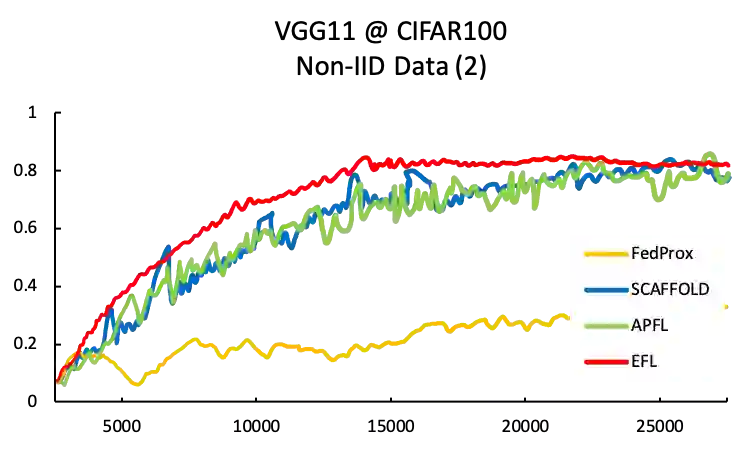

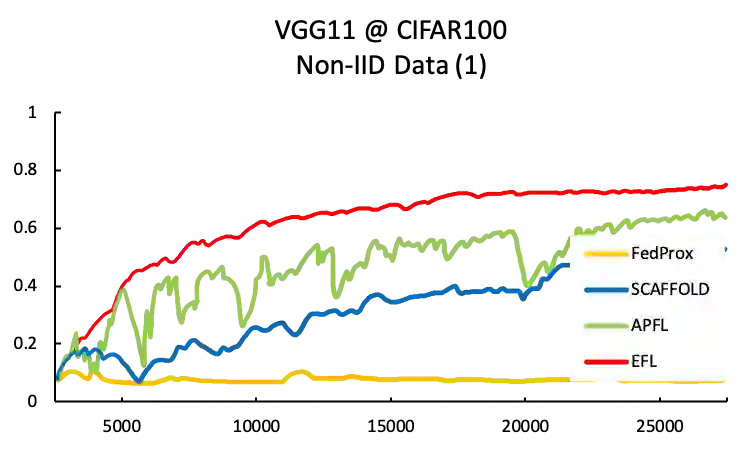

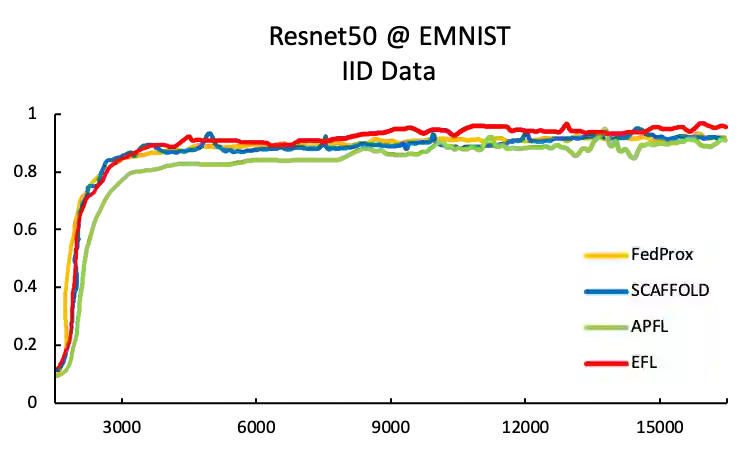

Federated learning trains machine learning models across devices or data silos, such as edge processors or data warehouses, while keeping the data local. Training in heterogeneous and potentially massive networks introduces bias into the system, which originates from non-IID data and the low participation rates seen in practice. In this paper, we propose Elastic Federated Learning (EFL), an unbiased algorithm that tackles heterogeneity in the system by making the most informative parameters less volatile during training and by utilizing incomplete local updates. EFL is also communication-efficient: it compresses both upstream and downstream communications. Theoretically, the algorithm has a convergence guarantee when training on non-IID data at a low participation rate. Empirical experiments corroborate the competitive performance of the EFL framework in terms of robustness and efficiency.
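
To make the idea of keeping informative parameters "less volatile" more concrete, the following is a minimal sketch and not the EFL algorithm itself, whose exact update rule is not given in the abstract. It assumes a quadratic penalty that pulls each local parameter toward the global model in proportion to a per-parameter importance score, plus sample-weighted averaging over the clients that took part in a round. The function names `elastic_local_step` and `aggregate`, and the parameters `importance`, `lr`, and `lam`, are hypothetical and chosen only for illustration.

```python
from typing import List, Sequence


def elastic_local_step(
    w: Sequence[float],
    grad: Sequence[float],
    w_global: Sequence[float],
    importance: Sequence[float],
    lr: float = 0.1,
    lam: float = 1.0,
) -> List[float]:
    """One gradient step on: loss + (lam / 2) * sum_i importance_i * (w_i - w_global_i)^2.

    Assumption: a larger importance_i makes parameter i harder to move away
    from the global model, which is one way to read "less volatile".
    """
    return [
        wi - lr * (gi + lam * imp * (wi - wgi))
        for wi, gi, wgi, imp in zip(w, grad, w_global, importance)
    ]


def aggregate(
    client_weights: Sequence[Sequence[float]],
    num_samples: Sequence[int],
) -> List[float]:
    """Sample-weighted average over the clients that participated in this round."""
    total = sum(num_samples)
    dim = len(client_weights[0])
    return [
        sum(n * cw[j] for cw, n in zip(client_weights, num_samples)) / total
        for j in range(dim)
    ]
```

With a zero task gradient, a parameter whose importance is 1 is pulled back toward the global value, while a parameter whose importance is 0 is left untouched, so the penalty only constrains the coordinates marked as informative.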
