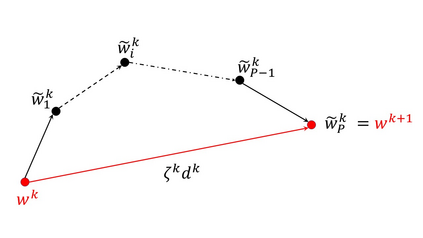

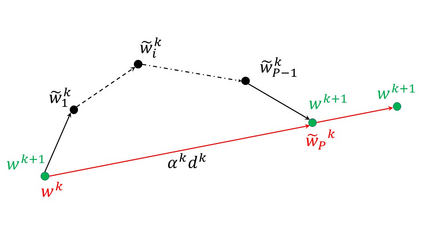

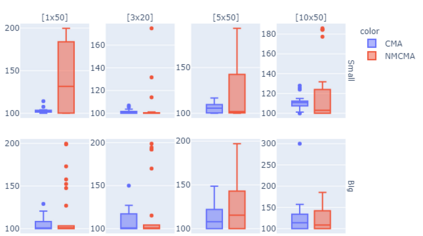

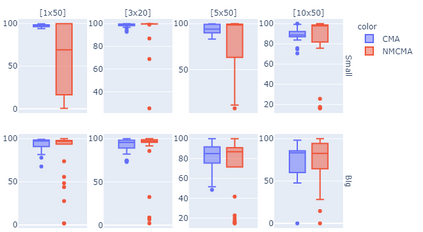

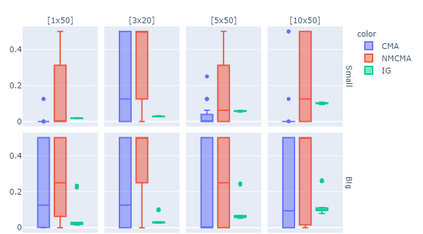

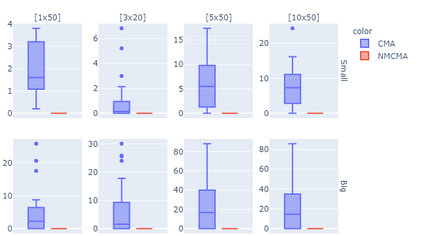

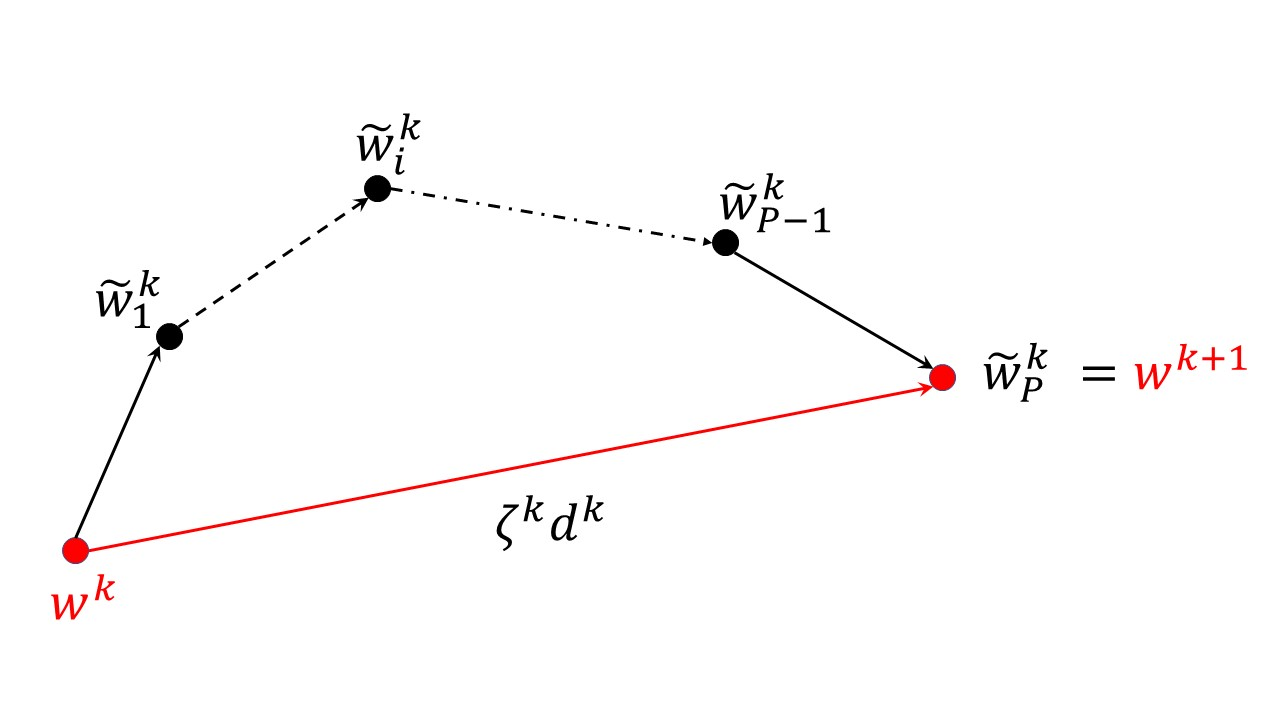

We consider minimizing the average of a very large number of smooth and possibly non-convex functions. This optimization problem has received much attention in recent years because of its many applications in different fields, the most challenging being the training of Machine Learning models. Widely used approaches for solving this problem are mini-batch gradient methods which, at each iteration, update the decision vector by moving along the gradient of a mini-batch of the component functions. We consider the Incremental Gradient (IG) and the Random Reshuffling (RR) methods, which proceed in cycles, picking batches either in a fixed order (IG) or in an order that is reshuffled after each epoch (RR). Convergence properties of these schemes have been proved under different assumptions, which are usually quite strong. We aim to define ease-controlled modifications of the IG/RR schemes that require only a light additional computational effort and can be proved to converge under very weak and standard assumptions. In particular, we define two algorithmic schemes, one monotone and one non-monotone, in which the IG/RR iteration is controlled by a watchdog rule and a derivative-free line search that activates only sporadically to guarantee convergence. The two schemes also allow control over the update of the stepsize used in the main IG/RR iteration, avoiding the use of preset rules. We prove convergence under the sole assumption of Lipschitz continuity of the gradients of the component functions, and we perform an extensive computational analysis using Deep Neural Architectures and a benchmark of datasets. We compare our implementation with both full-batch gradient methods and standard online implementations of the IG/RR methods, showing that the computational effort is comparable with that of the corresponding online methods and that the control on the learning rate may allow a faster decrease.
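To make the idea of a controlled IG/RR iteration concrete, the following is a minimal Python sketch on a toy least-squares problem. It is not the authors' algorithm: the function names, the parameters `gamma` and `step`, and the toy data are all assumptions made for illustration, and the derivative-free line search of the abstract is replaced here by a much simpler rule (reject the epoch and halve the stepsize). It only illustrates the general pattern of running a cheap IG/RR epoch and checking it afterwards with a sufficient-decrease test on the full objective.

```python
import random


def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def full_objective(w, data):
    """Average of the component losses f_i(w) = 0.5 * (a_i . w - b_i)^2."""
    return sum(0.5 * (dot(a, w) - b) ** 2 for a, b in data) / len(data)


def run_epoch(w, data, order, step):
    """One IG/RR cycle: visit every component exactly once, in the given order."""
    w = list(w)
    for i in order:
        a, b = data[i]
        residual = dot(a, w) - b  # gradient of f_i is residual * a_i
        w = [wj - step * residual * aj for wj, aj in zip(w, a)]
    return w


def controlled_epochs(data, w0, step=0.2, epochs=50, gamma=1e-4,
                      shuffle=True, seed=0):
    """IG (shuffle=False) or RR (shuffle=True) with a simplified safeguard.

    Assumption: instead of a derivative-free line search, a trial epoch that
    fails the sufficient-decrease test is discarded and the stepsize is halved.
    """
    rng = random.Random(seed)
    w, f_w = list(w0), full_objective(w0, data)
    order = list(range(len(data)))
    for _ in range(epochs):
        if shuffle:
            rng.shuffle(order)  # RR: new permutation for every epoch
        trial = run_epoch(w, data, order, step)
        f_trial = full_objective(trial, data)
        moved = sum((t - wj) ** 2 for t, wj in zip(trial, w))
        # Watchdog-style test on the full objective (hypothetical form).
        if f_trial <= f_w - gamma * step * moved:
            w, f_w = trial, f_trial  # accept the cheap IG/RR epoch
        else:
            step *= 0.5              # reject it and shrink the stepsize
    return w, f_w, step


# Toy consistent system whose exact solution is w* = [1, -2].
DATA = [([1.0, 0.0], 1.0), ([0.0, 1.0], -2.0),
        ([1.0, 1.0], -1.0), ([1.0, -1.0], 3.0)]

if __name__ == "__main__":
    for shuffle in (False, True):
        w, f_w, step = controlled_epochs(DATA, [0.0, 0.0], shuffle=shuffle)
        print("RR" if shuffle else "IG", w, f_w, step)
```

In this sketch the full objective is evaluated once per epoch, which is the extra cost paid for the safeguard. Because an epoch is accepted only when the test passes, the accepted objective values never increase, mirroring the monotone variant described in the abstract.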
