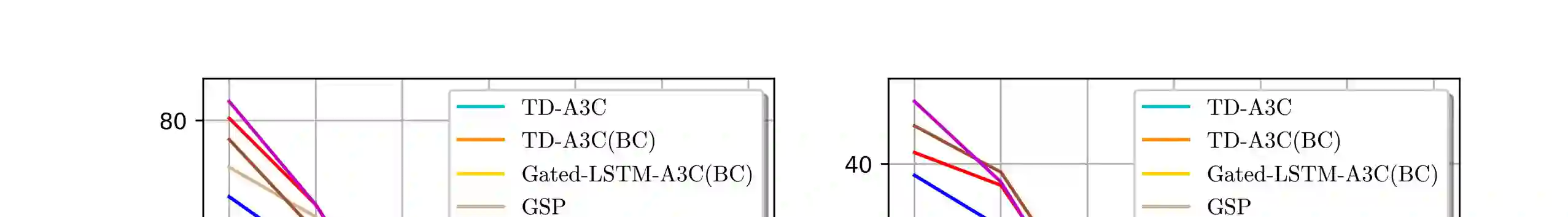

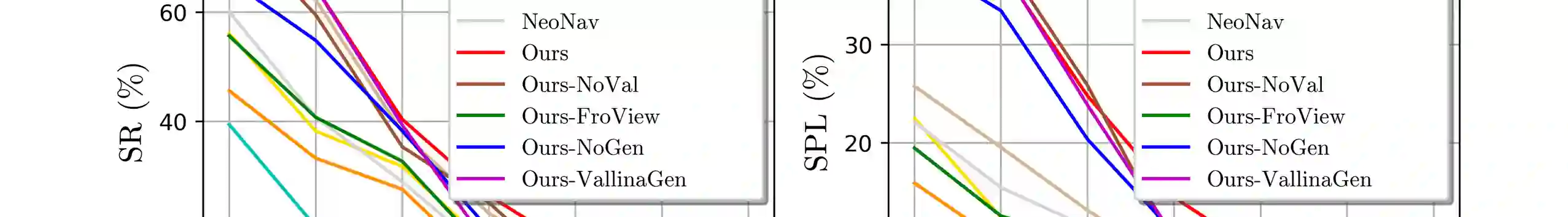

To enhance the cross-target and cross-scene generalization of target-driven visual navigation based on deep reinforcement learning (RL), we introduce an information-theoretic regularization term into the RL objective. The regularization maximizes the mutual information between an agent's navigation actions and its visual observation transforms, thus promoting more informed navigation decisions. To this end, the agent models the action-observation dynamics by learning a variational generative model. Based on this model, the agent generates (imagines) the next observation from its current observation and the navigation target. In doing so, the agent learns the causal relationship between navigation actions and the resulting changes in its observations, which allows it to predict the next navigation action by comparing the current observation with the imagined next one. Cross-target and cross-scene evaluations on the AI2-THOR framework show that our method attains at least a $10\%$ improvement in average success rate over some state-of-the-art models. We further evaluate our model in two real-world settings: navigation in unseen indoor scenes from the discrete Active Vision Dataset (AVD), and navigation in continuous real-world environments with a TurtleBot. We demonstrate that our navigation model successfully completes navigation tasks in these scenarios. Videos and models can be found in the supplementary material.
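
As a rough illustration of the regularized objective described above, one plausible way to write it is sketched below. The notation is assumed here and is not taken from the paper: $o_t$ is the current observation, $o_{t+1}$ the next observation, $a_t$ the navigation action, $\pi_\theta$ the policy, $r_t$ the reward, $\gamma$ a discount factor, $\beta$ a hypothetical regularization weight, and $q_\phi$ a hypothetical variational action predictor. The paper's exact formulation, including how the navigation target enters, may differ.

$$
J(\theta) = \mathbb{E}_{\pi_\theta}\Big[\sum_t \gamma^t r_t\Big] + \beta \, I(a_t; o_{t+1} \mid o_t)
$$

$$
I(a_t; o_{t+1} \mid o_t) = H(a_t \mid o_t) - H(a_t \mid o_t, o_{t+1}) \;\ge\; H(a_t \mid o_t) + \mathbb{E}\big[\log q_\phi(a_t \mid o_t, o_{t+1})\big]
$$

The inequality is the standard variational lower bound on mutual information: it holds for any $q_\phi$ because the KL divergence between the true posterior $p(a_t \mid o_t, o_{t+1})$ and $q_\phi$ is non-negative. Under this reading, $q_\phi$ plays the role of the component that predicts an action by comparing the current observation with the (imagined) next one.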
