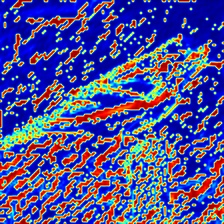

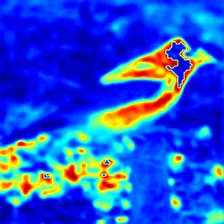

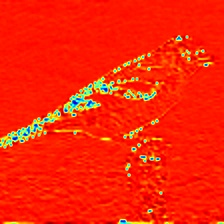

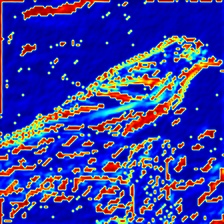

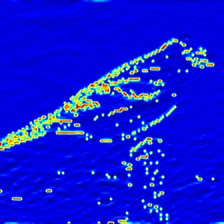

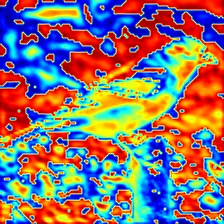

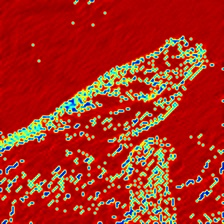

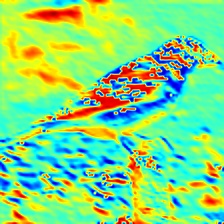

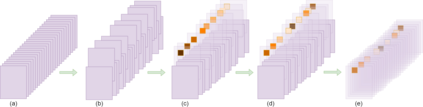

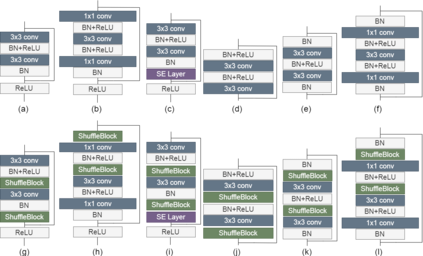

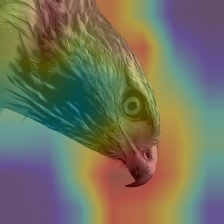

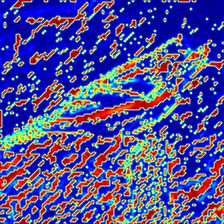

Deep neural networks have enormous representational power, which leads them to overfit on most datasets. Regularizing them is therefore important to reduce overfitting and improve generalization. Recently, the channel shuffle operation was introduced to mix channels in group convolutions in resource-efficient networks in order to reduce memory and computation. This paper studies channel shuffle as a regularization technique in deep convolutional networks. We show that while randomly shuffling channels during training drastically reduces performance, randomly shuffling small patches between channels significantly improves it. The patches to be shuffled are picked from the same spatial locations in the feature maps, so that a patch, when transferred from one channel to another, acts as structured noise for the latter channel. We call this method "ShuffleBlock". The proposed ShuffleBlock module is easy to implement and improves the performance of several baseline networks on image classification on the CIFAR and ImageNet datasets. It also achieves performance comparable to, and in many cases better than, many other regularization methods. We provide several ablation studies on selecting the hyperparameters of the ShuffleBlock module and propose a new scheduling method that further enhances its performance.
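The patch-shuffling idea described above can be illustrated with a minimal sketch. It is not the paper's implementation: the function name `shuffle_patch_across_channels`, the `patch_size` parameter, the choice of a single square patch per call, and the use of a full random channel permutation are all assumptions made for illustration. Feature maps are plain nested lists so the example needs only the standard library; a real module would operate on tensors and would be applied only during training.

```python
import copy
import random


def shuffle_patch_across_channels(feature_map, patch_size, rng=None):
    """Return a copy of feature_map with one patch permuted across channels.

    feature_map: list of C channels, each an H x W list of lists.
    patch_size: side length of the square patch (hypothetical parameter).
    rng: optional random.Random instance, for reproducibility.
    """
    if rng is None:
        rng = random.Random()
    num_channels = len(feature_map)
    height, width = len(feature_map[0]), len(feature_map[0][0])
    if not 0 < patch_size <= min(height, width):
        raise ValueError("patch_size must fit inside the feature map")

    # Pick one spatial location; the same location is used in every channel.
    top = rng.randrange(height - patch_size + 1)
    left = rng.randrange(width - patch_size + 1)

    # Random channel permutation: channel c receives the patch of source[c].
    source = list(range(num_channels))
    rng.shuffle(source)

    out = copy.deepcopy(feature_map)  # leave the input untouched
    for c in range(num_channels):
        for i in range(top, top + patch_size):
            row = feature_map[source[c]][i]
            out[c][i][left:left + patch_size] = row[left:left + patch_size]
    return out


# Toy input: 3 channels of size 4 x 4, channel c filled with the value c.
fmap = [[[c] * 4 for _ in range(4)] for c in range(3)]
shuffled = shuffle_patch_across_channels(fmap, patch_size=2, rng=random.Random(0))
```

Because every channel exchanges values only at the chosen location, each channel keeps its own activations everywhere else, and the transplanted patch acts as localized, structured noise rather than replacing the whole channel.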
