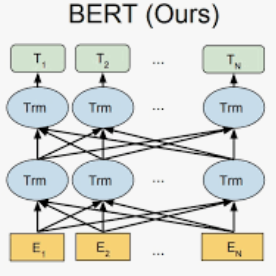

This paper presents raceBERT, a transformer-based model for predicting race and ethnicity from character sequences in names, together with an accompanying Python package. Trained on a Florida voter registration dataset, the model predicts the likelihood that a name belongs to each of five U.S. census race categories (White, Black, Hispanic, Asian & Pacific Islander, American Indian & Alaskan Native). I build on Sood and Laohaprapanon (2018) by replacing their LSTM model with transformer-based models (a pre-trained BERT model and a RoBERTa model trained from scratch) and compare the results. To the best of my knowledge, raceBERT achieves state-of-the-art results in race prediction from names, with an average F1-score of 0.86, a 4.1% improvement over the previous state of the art, and improvements of 15-17% for non-white names.
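As a rough illustration of what "predicting the likelihood" over five categories can look like, the sketch below turns one raw score per category into a probability distribution with a softmax. This is an assumption for illustration only: the abstract does not say how raceBERT normalizes its outputs, the function names `softmax` and `category_likelihoods` are hypothetical and not part of the raceBERT package, and the example scores are made up rather than produced by any model.

```python
import math

# The five U.S. census categories named in the abstract.
CATEGORIES = [
    "White",
    "Black",
    "Hispanic",
    "Asian & Pacific Islander",
    "American Indian & Alaskan Native",
]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def category_likelihoods(scores):
    """Pair each category with its probability (hypothetical helper)."""
    if len(scores) != len(CATEGORIES):
        raise ValueError("expected one score per category")
    return dict(zip(CATEGORIES, softmax(scores)))


# Made-up scores for illustration; a real classifier would supply these.
example_scores = [2.0, 0.5, 0.1, -1.0, -2.0]
likelihoods = category_likelihoods(example_scores)
top_category = max(likelihoods, key=likelihoods.get)
```

The result is one likelihood per category rather than a single hard label, which matches the abstract's framing of the output as a likelihood over categories.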
