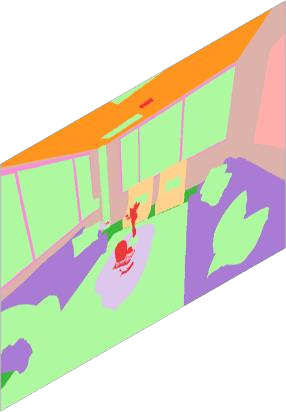

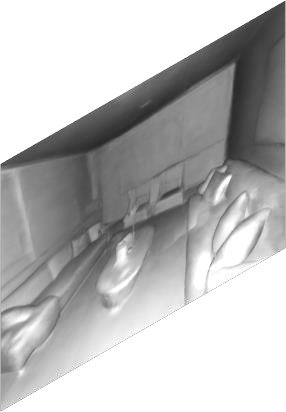

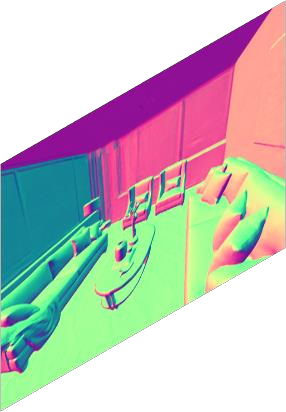

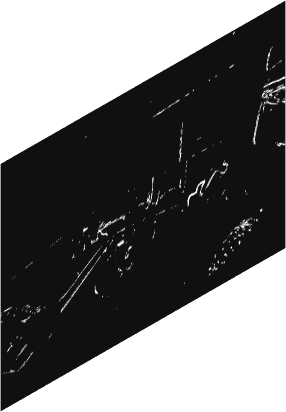

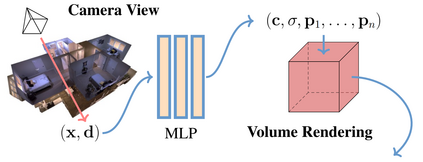

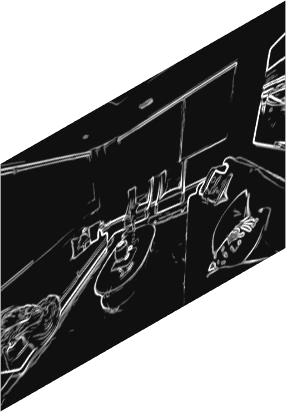

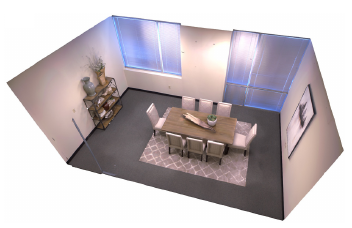

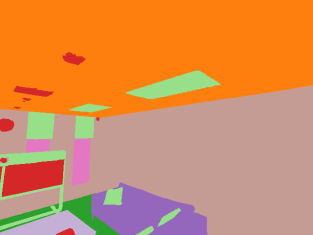

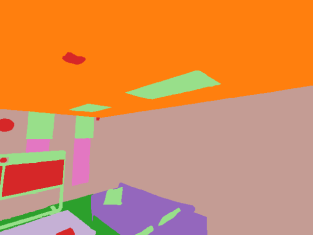

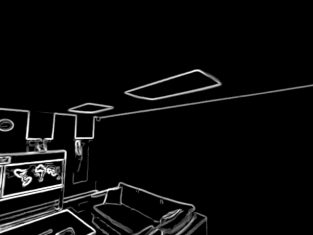

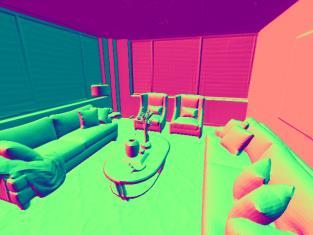

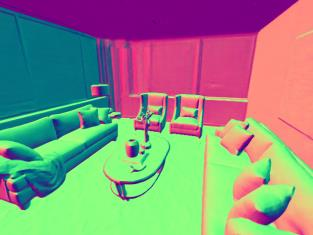

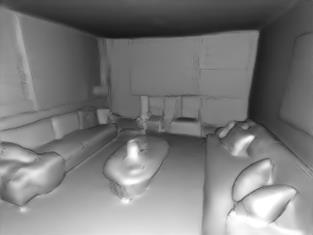

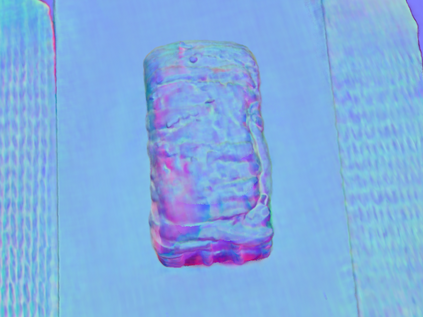

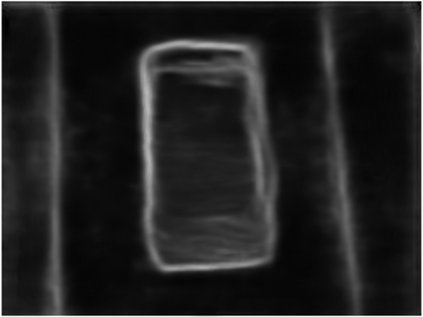

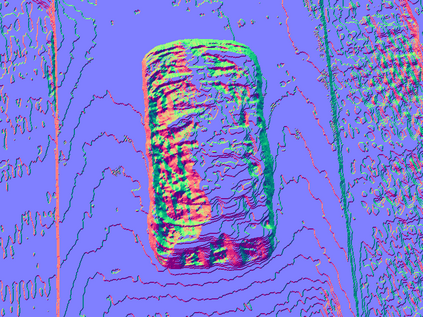

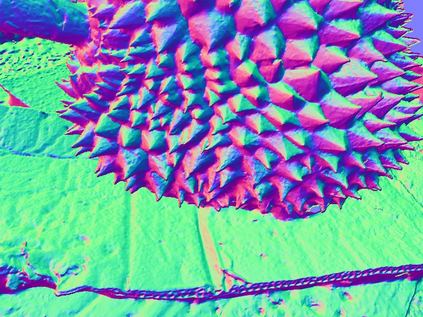

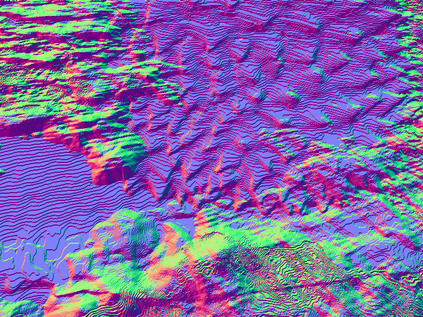

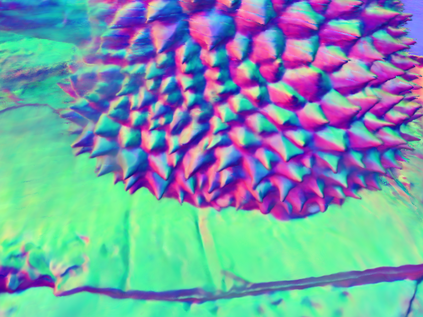

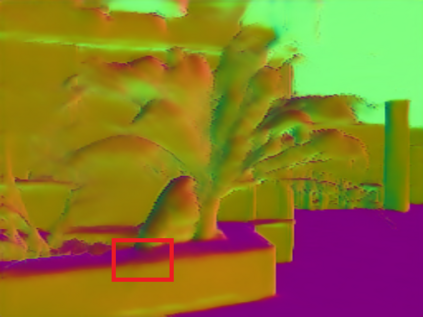

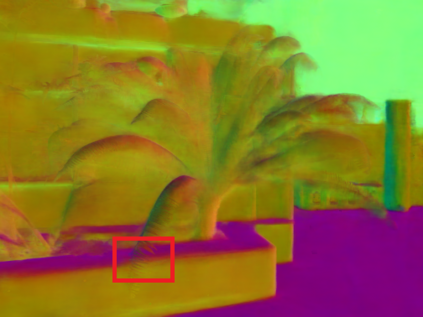

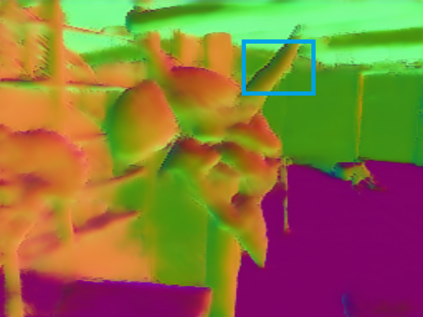

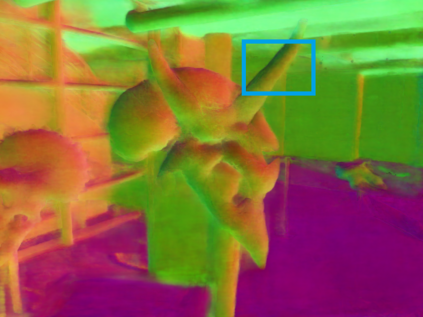

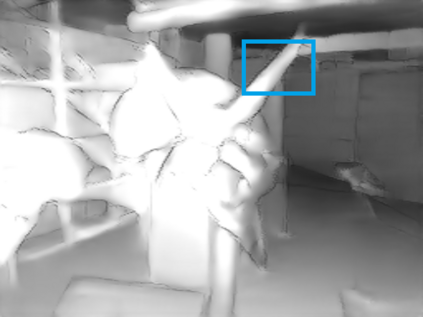

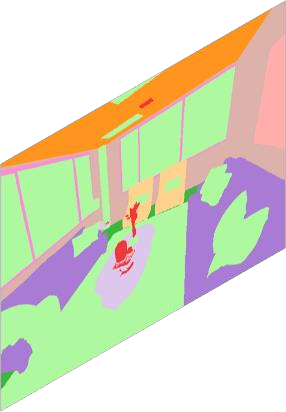

Comprehensive 3D scene understanding, both geometric and semantic, is important for real-world applications such as robot perception. Most existing work has focused on developing data-driven discriminative models for scene understanding. This paper offers a new approach to scene understanding from a synthesis-model perspective, leveraging recent progress in implicit 3D representations and neural rendering. Building on the success of Neural Radiance Fields (NeRFs), we introduce Scene-Property Synthesis with NeRF (SS-NeRF), which renders not only photo-realistic RGB images from novel viewpoints but also a variety of accurate scene properties (e.g., appearance, geometry, and semantics). This allows a range of scene understanding tasks to be addressed within a unified framework, including semantic segmentation, surface normal estimation, reshading, keypoint detection, and edge detection. SS-NeRF can serve as a powerful tool for bridging generative and discriminative learning, and can thus support the investigation of many interesting problems, such as studying task relationships within a synthesis paradigm, transferring knowledge to novel tasks, facilitating downstream discriminative tasks through data augmentation, and serving as an auto-labeller for data creation.
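The abstract states that SS-NeRF renders scene properties alongside RGB but does not say how. A common approach in NeRF-style models (Semantic-NeRF, for example, renders semantic logits this way) is to predict a property vector at every sample point along a ray and composite it with the same volume-rendering weights used for color. The sketch below assumes exactly that; the function names `render_weights` and `composite`, and all sample values, are hypothetical, and SS-NeRF's actual architecture may differ.

```python
import math
from typing import List, Sequence


def render_weights(sigmas: Sequence[float], deltas: Sequence[float]) -> List[float]:
    """Standard NeRF quadrature weights w_i = T_i * (1 - exp(-sigma_i * delta_i)),
    where T_i = exp(-sum_{j<i} sigma_j * delta_j) is the accumulated transmittance."""
    weights = []
    transmittance = 1.0
    for sigma, delta in zip(sigmas, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weights.append(transmittance * alpha)
        transmittance *= 1.0 - alpha  # fraction of light that survives past this segment
    return weights


def composite(weights: Sequence[float], values: Sequence[Sequence[float]]) -> List[float]:
    """Weighted sum of per-sample property vectors (RGB, logits, normals, ...) along one ray."""
    out = [0.0] * len(values[0])
    for w, v in zip(weights, values):
        for k, x in enumerate(v):
            out[k] += w * x
    return out


# Hypothetical per-sample predictions for a single ray with three samples.
sigmas = [0.0, 5.0, 50.0]
deltas = [0.1, 0.1, 0.1]
rgb = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
semantic_logits = [[2.0, -1.0], [0.5, 0.5], [-1.0, 3.0]]  # two hypothetical classes
normals = [[0.0, 0.0, 1.0], [0.0, 1.0, 0.0], [1.0, 0.0, 0.0]]

w = render_weights(sigmas, deltas)
pixel = {
    "rgb": composite(w, rgb),
    "semantics": composite(w, semantic_logits),  # e.g. followed by softmax/argmax
    "normal": composite(w, normals),  # usually re-normalized to unit length afterwards
}
```

Sharing one set of weights across all properties ties every rendered output to the same density field, so the semantics, normals, and color produced for a pixel all describe the same underlying 3D surface.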

翻译:3D综合场面理解,从几何学和语义学角度来说,对于机器人感知等现实世界应用来说都很重要。大部分现有工作都侧重于开发数据驱动的差别化模型以了解场面。本文从综合模型角度提供了一种新的场面理解方法,利用最近隐含的 3D 代表面和神经造影的进展。在神经辐射场(NeRFs)的巨大成功的基础上,我们引入了与NERF(SS-NERF)的S-Property合成(SS-NERF),它不仅能够从新视角中产生摄影现实的 RGB图像,而且能够产生各种准确的场面属性(如外观、几何形状和语义学 ) 。 通过这样做,我们在一个统一的框架内促进各种场面理解任务,包括语义分解、表面正常估计、反光谱、重现、关键点探测和边缘探测。我们的SS-NERF框架可以成为一个强有力的工具,用于连接归正学习和歧视性学习,从而有利于调查一系列广泛的有趣问题,例如研究在合成范式内研究任务中的任务关系,将数据转换成一个新的数据,将数据转换成为新的数据,将数据转换为数据创建任务。