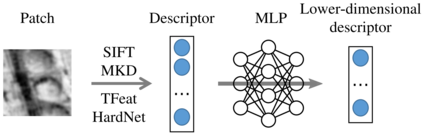

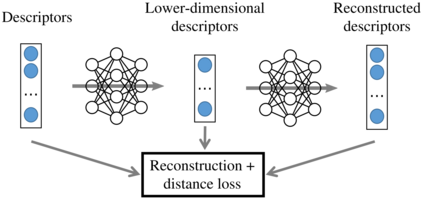

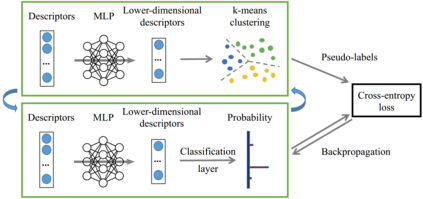

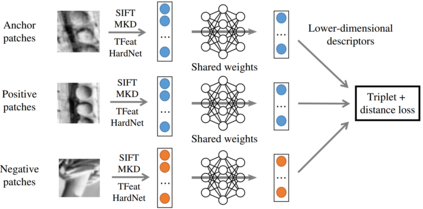

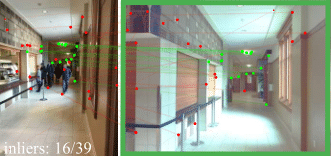

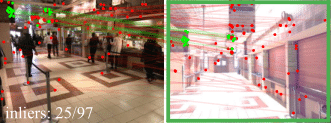

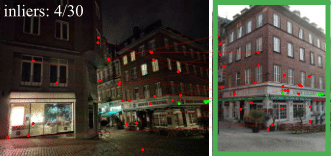

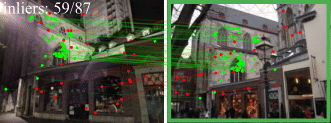

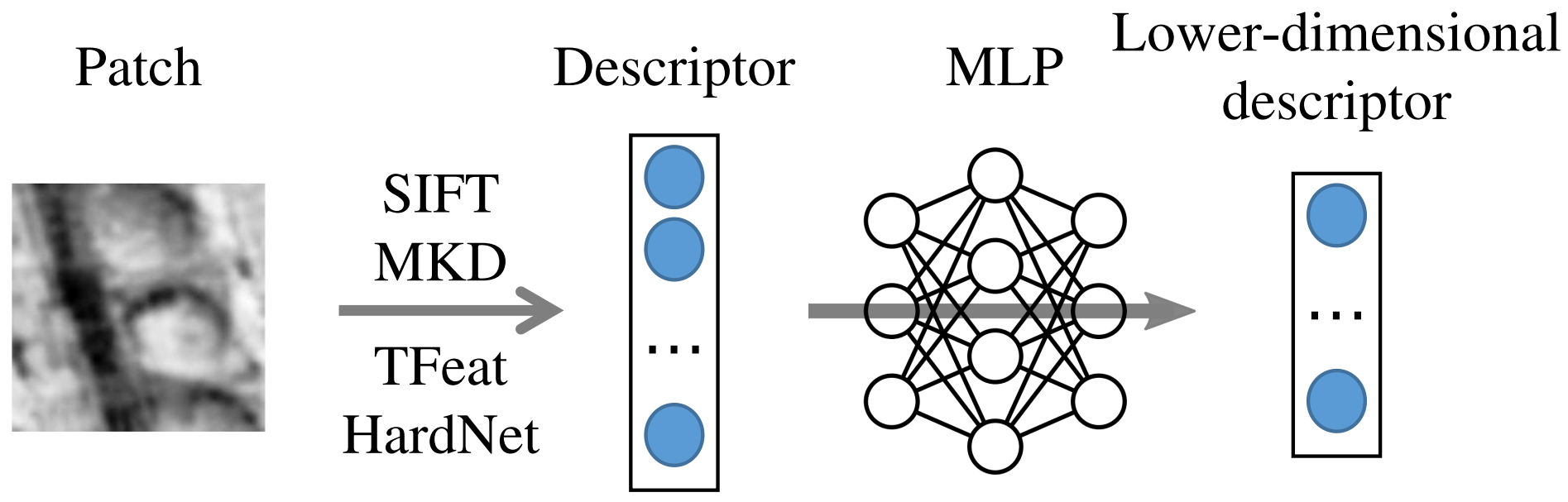

A distinctive representation of image patches in the form of features is a key component of many computer vision and robotics tasks, such as image matching, image retrieval, and visual localization. State-of-the-art descriptors, from hand-crafted descriptors such as SIFT to learned ones such as HardNet, are usually high-dimensional, with 128 dimensions or more. The higher the dimensionality, the greater the memory consumption and computation time of approaches that use such descriptors. In this paper, we investigate multi-layer perceptrons (MLPs) to extract low-dimensional but high-quality descriptors. We thoroughly analyze our method in unsupervised, self-supervised, and supervised settings, and evaluate the dimensionality reduction results on four representative descriptors. We consider different applications, including visual localization, patch verification, image matching, and image retrieval. The experiments show that our lightweight MLPs achieve better dimensionality reduction than PCA. The lower-dimensional descriptors generated by our approach outperform the original higher-dimensional descriptors in downstream tasks, especially for the hand-crafted ones. The code will be available at https://github.com/PRBonn/descriptor-dr.
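To make the comparison between PCA and an MLP concrete, the following minimal sketch reduces 128-dimensional descriptors to 32 dimensions in both ways. It is an illustration under stated assumptions, not the authors' implementation: the descriptors are random stand-ins, the layer sizes (128, 64, 32), the ReLU activation, the output normalization, and all function names are hypothetical, and the MLP weights are untrained, so the sketch shows only the shapes and the storage saving, not descriptor quality.

```python
import numpy as np


def pca_fit(descriptors, out_dim):
    """Estimate the mean and the top `out_dim` principal directions."""
    mean = descriptors.mean(axis=0)
    # Rows of vt are principal directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(descriptors - mean, full_matrices=False)
    return mean, vt[:out_dim]


def pca_project(descriptors, mean, components):
    """Project centered descriptors onto the principal directions."""
    return (descriptors - mean) @ components.T


def mlp_project(descriptors, weights, biases):
    """MLP forward pass: ReLU on hidden layers, L2-normalized output."""
    x = descriptors
    for i, (w, b) in enumerate(zip(weights, biases)):
        x = x @ w + b
        if i < len(weights) - 1:
            x = np.maximum(x, 0.0)  # ReLU on hidden layers only
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norms, 1e-12)


rng = np.random.default_rng(0)
# Random stand-in for 1000 descriptors of 128 dimensions (assumption).
descs = rng.normal(size=(1000, 128)).astype(np.float32)

# PCA baseline: 128 -> 32 dimensions.
mean, comps = pca_fit(descs, 32)
reduced_pca = pca_project(descs, mean, comps)

# Hypothetical MLP 128 -> 64 -> 32 with untrained random weights.
layer_sizes = [128, 64, 32]
weights = [
    rng.normal(scale=0.1, size=(n_in, n_out)).astype(np.float32)
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
]
biases = [np.zeros(n_out, dtype=np.float32) for n_out in layer_sizes[1:]]
reduced_mlp = mlp_project(descs, weights, biases)

print("PCA output shape:", reduced_pca.shape)
print("MLP output shape:", reduced_mlp.shape)
print("bytes before / after:", descs.nbytes, reduced_mlp.nbytes)
```

In a real setting the MLP weights would be learned with one of the unsupervised, self-supervised, or supervised objectives mentioned above. Going from 128 to 32 dimensions at the same numeric precision cuts descriptor storage by a factor of four.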
