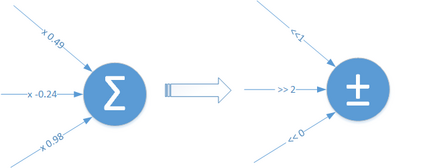

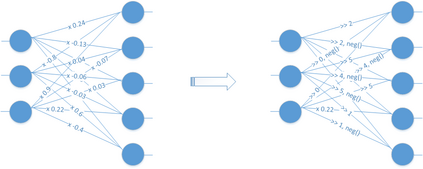

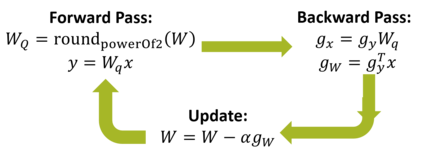

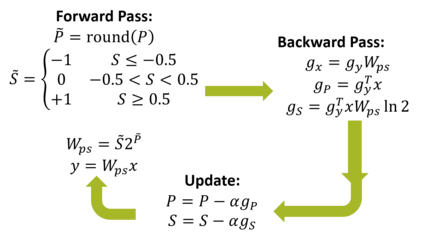

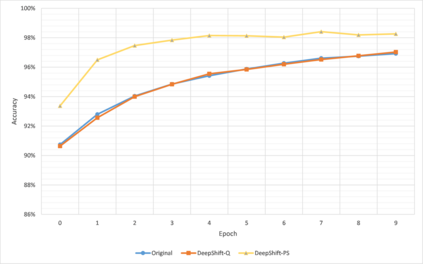

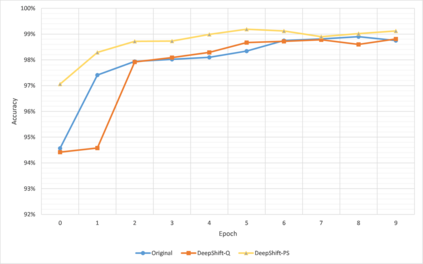

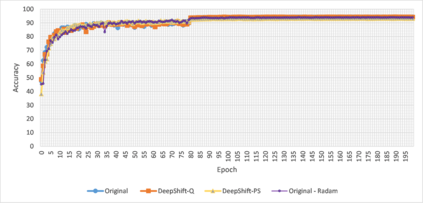

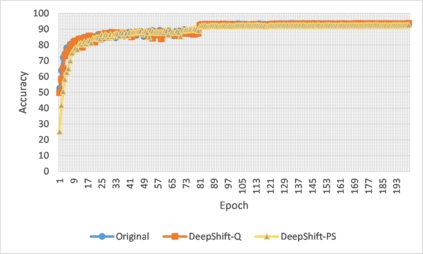

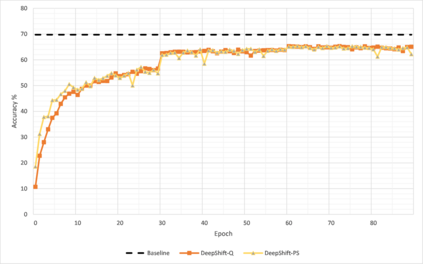

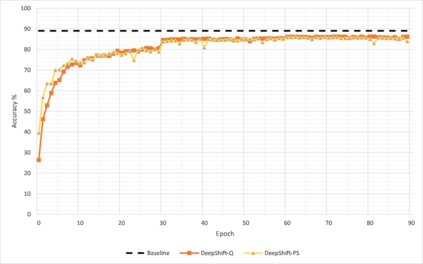

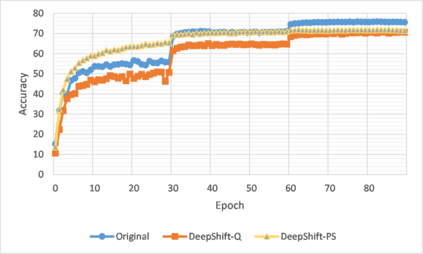

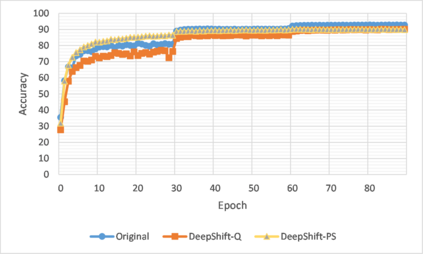

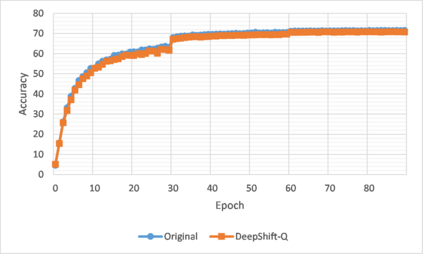

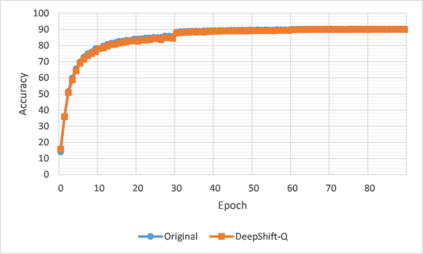

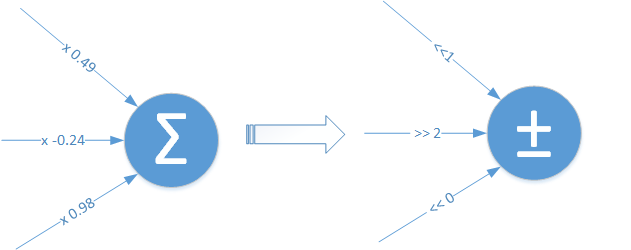

The high computation, memory, and power costs of inference with convolutional neural networks (CNNs) are major bottlenecks when deploying models to edge computing platforms, e.g., mobile and IoT devices. Moreover, training CNNs is time- and energy-intensive even on high-grade servers. Convolution layers and fully connected layers, because of their intense use of multiplications, are the dominant contributors to this computation budget. We propose to alleviate this problem by introducing two new operations, convolutional shifts and fully connected shifts, which replace multiplications with bitwise shifts and sign flipping during both training and inference. During inference, both approaches require only 5 bits (or fewer) to represent the weights. We refer to the family of neural network architectures that use convolutional shifts and fully connected shifts as DeepShift models. We propose two methods to train DeepShift models: DeepShift-Q, which trains regular weights constrained to powers of 2, and DeepShift-PS, which trains the values of the shifts and sign flips directly. DeepShift models achieve accuracy very close to, and in some cases higher than, that of their baselines. Converting pre-trained 32-bit floating-point baseline models of ResNet18, ResNet50, VGG16, and GoogleNet to DeepShift and training them for 15 to 30 epochs resulted in Top-1/Top-5 accuracies higher than those of the original models. Last but not least, we implemented GPU kernels for the convolutional shift and fully connected shift operations and measured a 25% reduction in inference latency for ResNet18 compared with unoptimized multiplication-based GPU kernels. The code can be found at https://github.com/mostafaelhoushi/DeepShift.
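To make the core idea concrete, the following is a minimal sketch of replacing a multiplication with a bitwise shift and a sign flip. It is an illustration only, not the authors' implementation: the helper names (`quantize_to_power_of_two`, `shift_multiply`, `shift_dot`), the integer (fixed-point) activations, and the shift range of -15 to 0 (16 shift values plus a sign, which is one way to arrive at a 5-bit weight) are all assumptions that the abstract does not spell out.

```python
import math


def quantize_to_power_of_two(w, min_shift=-15, max_shift=0):
    """Round a real weight to sign * 2**shift (hypothetical helper).

    The shift range is an assumption: 16 possible shifts fit in 4 bits,
    and one more bit holds the sign. A sign of 0 encodes a zero weight.
    """
    if w == 0:
        return 0, min_shift
    sign = 1 if w > 0 else -1
    # Nearest power of two in the log domain, clamped to the allowed range.
    shift = round(math.log2(abs(w)))
    shift = max(min_shift, min(max_shift, shift))
    return sign, shift


def shift_multiply(x, sign, shift):
    """Compute x * sign * 2**shift for an integer x without multiplying."""
    if sign == 0:
        return 0
    # Left shift for positive exponents, right shift for negative ones.
    # Python's right shift on negative ints rounds toward negative infinity.
    y = x << shift if shift >= 0 else x >> -shift
    return -y if sign < 0 else y


def shift_dot(xs, weights):
    """Dot product of integer activations with power-of-two weights."""
    total = 0
    for x, w in zip(xs, weights):
        sign, shift = quantize_to_power_of_two(w)
        total += shift_multiply(x, sign, shift)
    return total


if __name__ == "__main__":
    # 0.3 is rounded to 2**-2 and -0.5 is exactly -(2**-1).
    print(quantize_to_power_of_two(0.3))
    print(shift_dot([64, 64], [0.3, -0.5]))
```

In this sketch the weight 0.3 becomes +2^-2, so multiplying an activation of 64 by it reduces to a right shift by two bits. Rounding in the log domain is only one possible choice for mapping a regular weight to a power of 2; the abstract's DeepShift-PS variant instead learns the shift and sign values directly, which this example does not attempt to show.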
