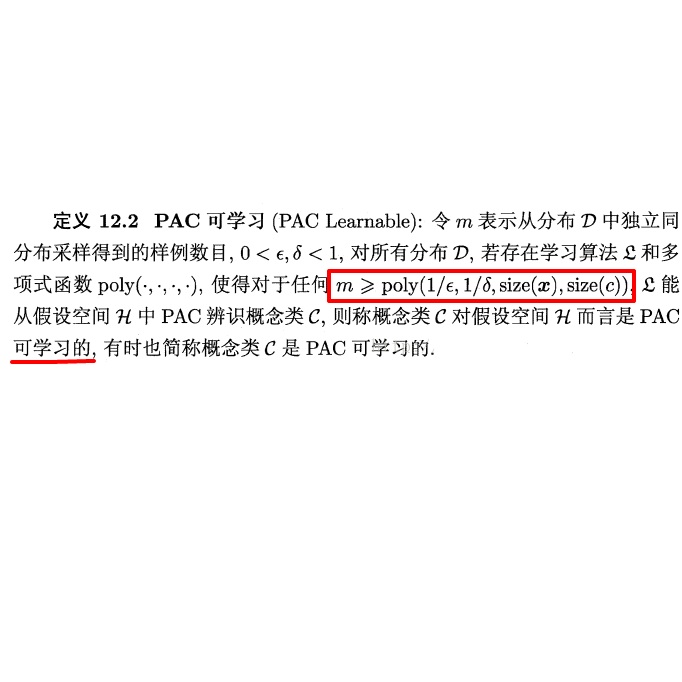

To study the resilience of distributed learning, the "Byzantine" literature considers a strong threat model in which workers can report arbitrary gradients to the parameter server. While this model has helped obtain several fundamental results, it has sometimes been considered unrealistic when the workers are mostly trustworthy machines. In this paper, we show a surprising equivalence between this model and data poisoning, a threat considered much more realistic. More specifically, we prove that every gradient attack can be reduced to data poisoning in any personalized federated learning system with PAC guarantees (which we show are both desirable and realistic). This equivalence makes it possible to obtain new impossibility results on resilience to data poisoning as corollaries of existing impossibility theorems on Byzantine machine learning. Moreover, using our equivalence, we derive a practical attack that we show, both theoretically and empirically, can be very effective against classical personalized federated learning models.
