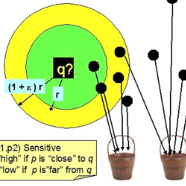

Recently, inspired by the Transformer, self-attention-based scene text recognition approaches have achieved outstanding performance. However, we find that the model size grows rapidly as the lexicon increases. Specifically, the numbers of parameters in the softmax classification layer and the output embedding layer are proportional to the vocabulary size. This hinders the development of lightweight text recognition models, especially for Chinese and multilingual applications. Thus, we propose a lightweight scene text recognition model named Hamming OCR. In this model, a novel Hamming classifier, which adopts the locality-sensitive hashing (LSH) algorithm to encode each character, replaces the softmax regression, and the generated LSH code is directly employed in place of the output embedding. We also present a simplified Transformer decoder that reduces the number of parameters by removing the feed-forward network and using cross-layer parameter sharing. Compared with traditional methods, the number of parameters in both the classification and embedding layers is independent of the vocabulary size, which significantly reduces the storage requirement without loss of accuracy. Experimental results on several datasets, including four public benchmarks and a Chinese text dataset synthesized by SynthText with more than 20,000 characters, show that Hamming OCR achieves competitive results.
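The abstract does not spell out how the LSH codes are produced or decoded, so the following is only a rough sketch under stated assumptions, not the Hamming OCR implementation. It assumes random-hyperplane LSH (SimHash) over hypothetical per-character feature vectors, a classifier head that emits one logit per code bit, and decoding by picking the character whose code is nearest in Hamming distance. The function names (`lsh_codes`, `decode`, `code_embedding`, `head_params`), the ±1 embedding mapping, and all sizes are illustrative choices made for this example.

```python
import random


def lsh_codes(features, num_bits, seed=0):
    """Random-hyperplane LSH (SimHash), assumed here as the hashing scheme:
    bit i is 1 when a character's feature vector lies on the positive side
    of random hyperplane i."""
    rng = random.Random(seed)
    dim = len(next(iter(features.values())))
    planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_bits)]
    return {
        ch: tuple(int(sum(p * x for p, x in zip(plane, vec)) >= 0) for plane in planes)
        for ch, vec in features.items()
    }


def hamming(a, b):
    """Number of positions where two equal-length bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))


def decode(logits, codes):
    """Hypothetical Hamming classifier: threshold each per-bit logit at 0
    (equivalent to sigmoid > 0.5), then return the character whose code is
    nearest to the predicted bits in Hamming distance."""
    bits = tuple(int(z > 0) for z in logits)
    return min(codes, key=lambda ch: hamming(bits, codes[ch]))


def code_embedding(ch, codes):
    """Use the fixed LSH code itself as the decoder input embedding,
    mapping bits {0, 1} to {-1.0, +1.0} (an assumed mapping)."""
    return [2.0 * b - 1.0 for b in codes[ch]]


def head_params(d_model, out_dim):
    """Weights plus biases of a linear output layer d_model -> out_dim."""
    return d_model * out_dim + out_dim


if __name__ == "__main__":
    rng = random.Random(42)
    vocab = [chr(0x4E00 + i) for i in range(200)]  # hypothetical slice of CJK characters
    # Stand-in features: random vectors, since the abstract does not say what is hashed.
    features = {ch: [rng.gauss(0.0, 1.0) for _ in range(64)] for ch in vocab}
    codes = lsh_codes(features, num_bits=32)

    target = vocab[7]
    logits = [3.0 if b else -3.0 for b in codes[target]]  # confident per-bit outputs
    print(decode(logits, codes) == target)

    d_model, vocab_size = 512, 20000  # illustrative sizes, not taken from the paper
    print(head_params(d_model, vocab_size))  # softmax head: 10260000
    print(head_params(d_model, 32))          # 32-bit Hamming head: 16416
```

With these illustrative sizes the softmax head grows linearly with the vocabulary, while the Hamming head depends only on the code length k, which must be at least ⌈log2 V⌉ bits (15 bits for 20,000 characters) for every character to receive a distinct code. Because the code table in this sketch is fixed rather than learned, reusing it as the embedding also avoids a trainable V × d embedding matrix.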
