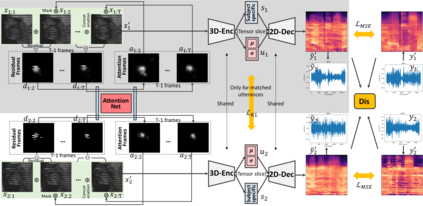

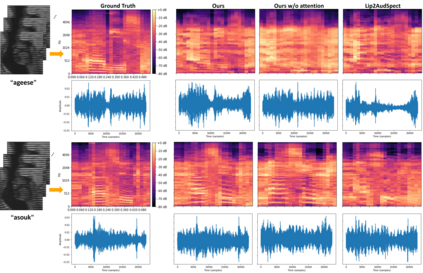

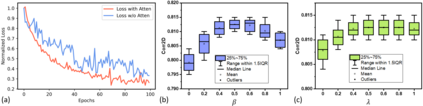

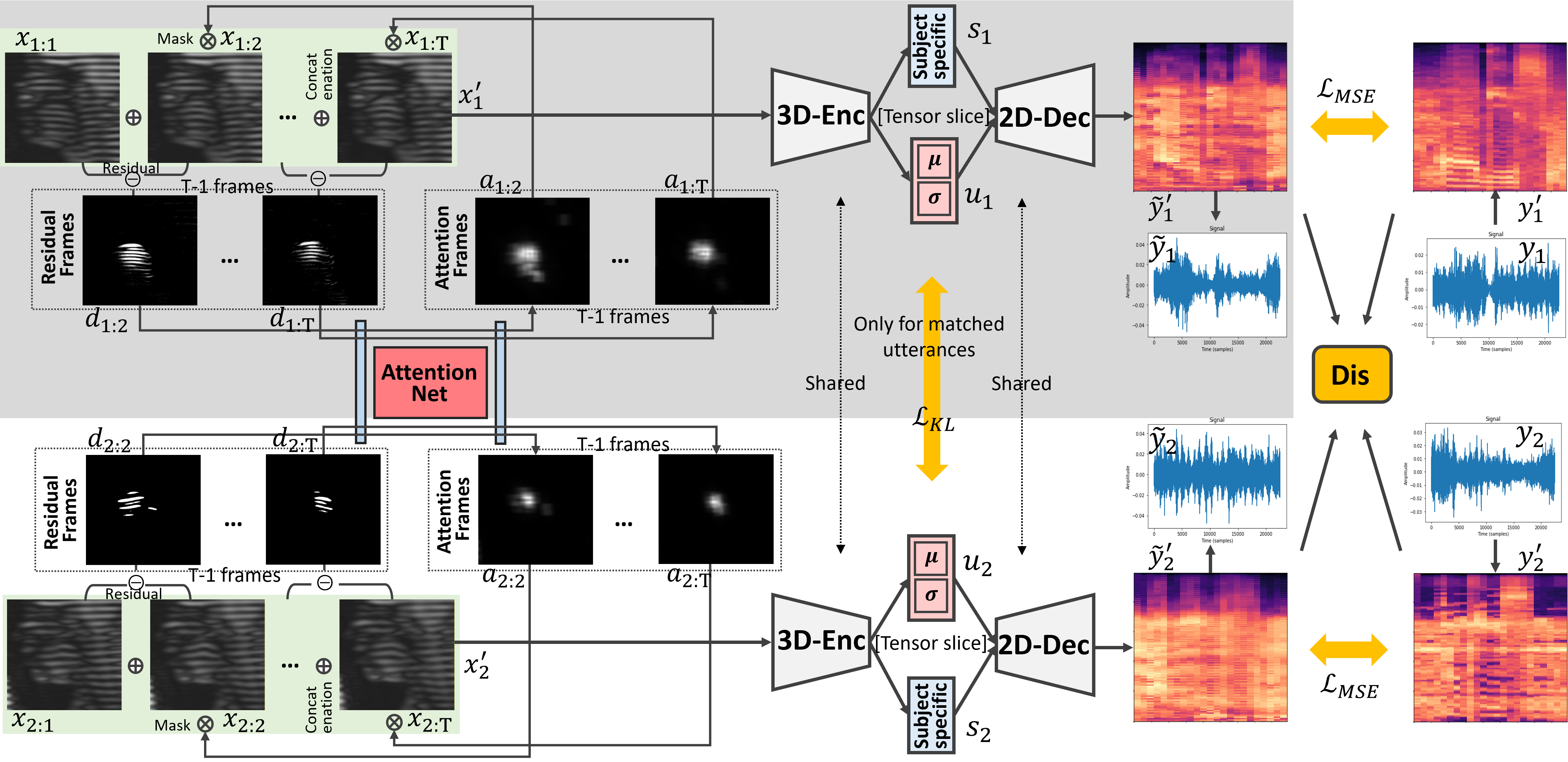

Understanding the relationship between tongue and oropharyngeal muscle deformation seen in tagged-MRI and intelligible speech plays an important role in advancing speech motor control theories and the treatment of speech-related disorders. Because the two modalities have heterogeneous representations, however, a direct mapping between them, i.e., between a two-dimensional (mid-sagittal slice) plus time tagged-MRI sequence and its corresponding one-dimensional waveform, is not straightforward. Instead, we use two-dimensional spectrograms, which contain both pitch and resonance, as an intermediate representation, and develop an end-to-end deep learning framework that translates a sequence of tagged-MRI into its corresponding audio waveform with a limited dataset size. Our framework is based on a novel fully convolutional asymmetry translator guided by a self residual attention strategy that specifically exploits the moving muscular structures during speech. In addition, we leverage the pairwise correlation of samples with the same utterances through a latent space representation disentanglement strategy. Furthermore, we incorporate adversarial training with generative adversarial networks to improve the realism of the generated spectrograms. Our experimental results, carried out with a total of 63 tagged-MRI sequences alongside speech acoustics, showed that our framework enabled the generation of clear audio waveforms from a sequence of tagged-MRI, surpassing competing methods. Thus, our framework offers great potential to help better understand the relationship between the two modalities.
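To make the intermediate representation concrete, the following minimal sketch computes a two-dimensional magnitude spectrogram (time frames by frequency bins) from a one-dimensional waveform. It illustrates only the spectrogram step, not the translation network described above. The function name `magnitude_spectrogram`, the frame length, the hop size, and the synthetic 1 kHz test tone are all assumptions chosen for illustration and do not come from the paper.

```python
import cmath
import math


def magnitude_spectrogram(waveform, frame_len=64, hop=32):
    """Return a list of frames, each holding frame_len // 2 + 1 magnitudes.

    Hypothetical helper for illustration; parameters are not from the paper.
    """
    # Hann window to reduce spectral leakage at the frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_len)
              for n in range(frame_len)]
    n_bins = frame_len // 2 + 1
    frames = []
    for start in range(0, len(waveform) - frame_len + 1, hop):
        segment = [waveform[start + n] * window[n] for n in range(frame_len)]
        # Naive DFT, written out for clarity (O(N^2) per frame).
        frames.append([
            abs(sum(segment[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                    for n in range(frame_len)))
            for k in range(n_bins)
        ])
    return frames


# Assumed test signal: a 1 kHz tone sampled at 8 kHz, 512 samples long.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]

spec = magnitude_spectrogram(tone)

# Locate the strongest frequency bin in the first frame and convert it to Hz.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64
```

Each row of `spec` is one time frame and each column one frequency bin, which is the kind of image-like target a convolutional model can predict. Recovering a waveform from such a magnitude spectrogram additionally requires phase estimation or a vocoder, which this sketch does not cover.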
